[DO NOT MERGE] detect model test2 for dynamic shape #18372

luotao1 wants to merge 36 commits into PaddlePaddle:develop from luotao1:detect_model_test


test=develop

2. A few fixes in the concat/pool MKL-DNN kernels for key generation
3. Enable the cache clearing mechanism

test=develop
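The commit message above mentions key generation and a cache clearing mechanism for dynamic shapes. The following is a minimal sketch of that general idea, not Paddle's actual implementation: the names `MakeShapeKey` and `ShapeCache` are hypothetical, and it assumes (as the later CAP=50 / `cfg.EnableMKLDNN(50)` setting suggests) that a capacity bounds how many input shapes are cached. Each distinct input shape gets its own key, and once the cache is full the oldest shape entry is cleared.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <memory>
#include <string>
#include <unordered_map>
#include <vector>

// Hypothetical helper: builds a cache key from an op name plus the input
// dims, so every distinct input shape maps to its own cached primitive.
std::string MakeShapeKey(const std::string& op_name,
                         const std::vector<int>& dims) {
  std::string key = op_name;
  for (int d : dims) {
    key += "-" + std::to_string(d);
  }
  return key;
}

// Hypothetical capacity-bounded cache (not Paddle's API). When a new shape
// arrives and the cache is full, the oldest entry is evicted (FIFO), so
// memory stays bounded even when input shapes keep changing.
template <typename Primitive>
class ShapeCache {
 public:
  explicit ShapeCache(std::size_t capacity) : capacity_(capacity) {
    assert(capacity_ > 0);
  }

  // Returns the cached primitive for `key`, creating it with `make` on a miss.
  template <typename Factory>
  std::shared_ptr<Primitive> GetOrCreate(const std::string& key, Factory make) {
    auto it = entries_.find(key);
    if (it != entries_.end()) return it->second;  // cache hit
    if (entries_.size() >= capacity_) {
      entries_.erase(order_.front());  // clear the oldest shape entry
      order_.pop_front();
    }
    auto p = std::make_shared<Primitive>(make());
    entries_.emplace(key, p);
    order_.push_back(key);
    return p;
  }

  std::size_t size() const { return entries_.size(); }

 private:
  std::size_t capacity_;
  std::unordered_map<std::string, std::shared_ptr<Primitive>> entries_;
  std::deque<std::string> order_;  // keys in insertion order
};
```

FIFO eviction is chosen here only for simplicity; an LRU policy or clearing the whole cache at once would be equally valid ways to keep memory bounded.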



634d8c6 is used to speed up


…m/pawelpiotrowicz/Paddle into detect_model_test

} // namespace inference
} // namespace paddle

// following lines are used for pprof


You can remove the following main function if you do not want to use pprof.


6d5a841 support


pprof_sample100.pdf: same problem as in #15032 (comment)


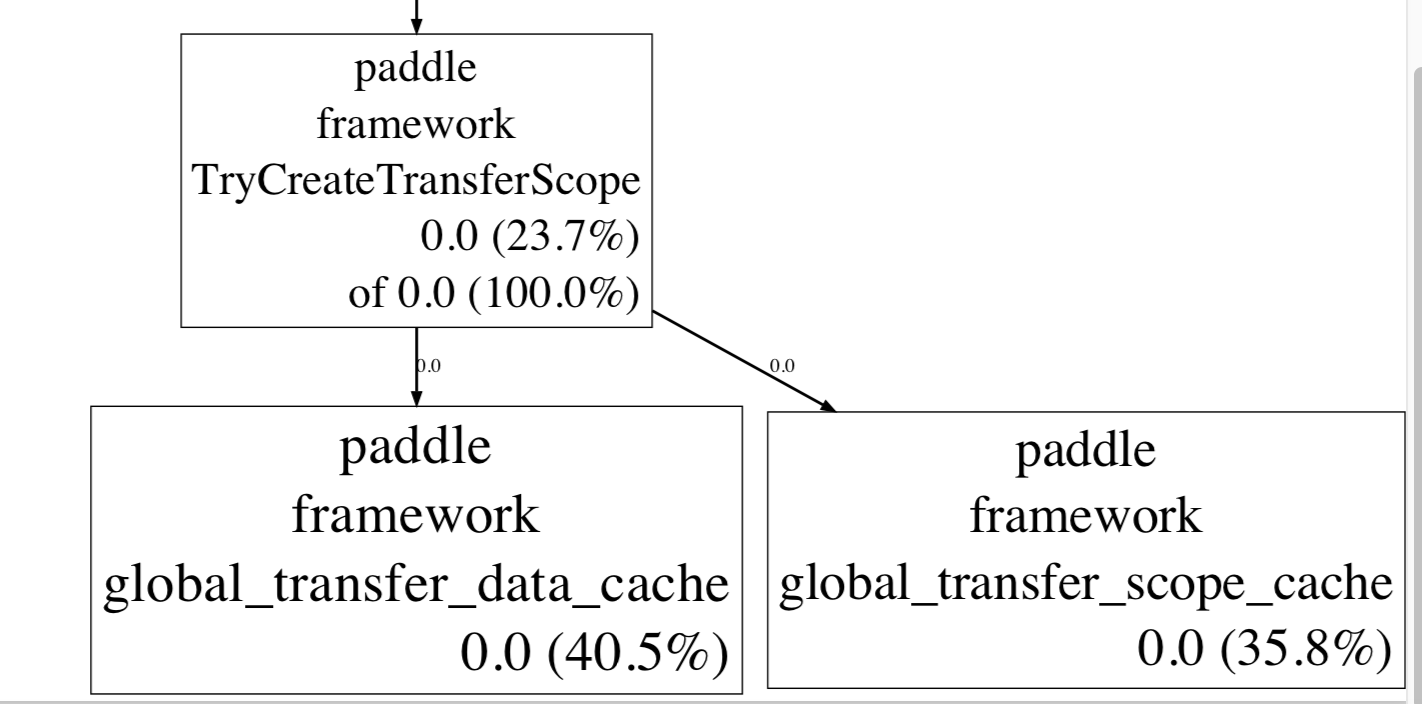

Analysis of the MKL-DNN memory leak under multithreading (each iteration runs on a new thread):
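To make the test pattern described above concrete, here is a minimal sketch of a harness in which every iteration runs on a freshly created thread. It is an illustration only: `RunEachIterationOnNewThread` is a hypothetical name and `predict` stands in for one prediction call; it does not reproduce the actual test code in this PR.

```cpp
#include <atomic>
#include <cassert>
#include <functional>
#include <thread>

// Hypothetical harness: each iteration runs `predict` on a brand-new
// std::thread that is joined before the next iteration starts. Any
// per-thread state created inside `predict` (e.g. thread_local caches)
// would therefore be created once per iteration instead of once overall.
void RunEachIterationOnNewThread(int iterations,
                                 const std::function<void()>& predict) {
  assert(iterations >= 0);
  for (int i = 0; i < iterations; ++i) {
    std::thread worker(predict);  // new thread for this iteration only
    worker.join();                // finish before starting the next one
  }
}
```

Compared with reusing one long-lived thread, this pattern is a useful stress test: if per-thread resources are not released when a thread exits, memory grows with the iteration count.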

Discussion of solutions:

…uent switching case to avoid memory leak test=develop

… into detect_model_test


@luotao1, I'm writing here because I have trouble reproducing the potential memory leak in single-threaded execution that, as @LeoZhao-Intel told me, you are seeing. I tried to reproduce this problem but had difficulty observing it, so perhaps you can advise on how to make it manifest. I tested this PR (the most recent update and earlier ones) with CAP=50 (`cfg.EnableMKLDNN(50)`) for

I disabled starting new threads inside the loop to run prediction by commenting out `thread.emplace_back...`

On my side, I check maximum memory consumption via the Max RSS value reported by the time program, e.g. `/usr/bin/time -v <cmd line>`. Typical output:

Command being timed: "./paddle/fluid/inference/tests/api/test_analyzer_detect --infer_model=/home/jczaja/DETECT/fluid/ --infer_data=/home/jczaja/DETECT/detect_input.txt --infer_shape=/home/jczaja/DETECT/shape.txt --gtest_filter=Analyzer_vis.profile_mkldnn --paddle_num_threads=1 --repeat=2 --batch_size=1 --sample=5000"
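As an in-process alternative to `/usr/bin/time -v`, the peak RSS can also be read with the POSIX `getrusage` call, for example after every iteration of a test loop, to see whether the peak keeps growing. This is a minimal sketch assuming Linux, where `ru_maxrss` is reported in kilobytes (macOS reports bytes); `PeakRssKb` is a hypothetical helper name, not part of Paddle.

```cpp
#include <cassert>
#include <sys/resource.h>

// Hypothetical helper: returns the peak resident set size of the current
// process. On Linux ru_maxrss is in kilobytes (macOS reports bytes).
// Returns -1 if getrusage fails.
long PeakRssKb() {
  struct rusage usage;
  if (getrusage(RUSAGE_SELF, &usage) != 0) return -1;
  return usage.ru_maxrss;
}
```

Because this is a peak value, it never decreases; a leak shows up as a peak that keeps rising across iterations instead of leveling off after warm-up.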


@jczaja Thanks for your reproducing.

This is a result from @jianhang-liu, and my test result today is:

I did not disable this, but with #18428 I think the multi-instance memory leak is now fixed.

I use


Yes, it is.

I use E5-2620 v3.

Maybe


command: 2620 v3


Closed due to #24336.

combine #18331 and #18285

@jianhang-liu @LeoZhao-Intel