Conversation

test=develop

test=develop

| : PassStrategy(other.AllPasses()) {} | ||

| : PassStrategy(other.AllPasses()) { | ||

| use_gpu_ = other.use_gpu_; | ||

| use_mkldnn_ = other.use_mkldnn_; |

There was a problem hiding this comment.

It don't need use_mkldnn_quantizer_ = other.use_mkldnn_quantizer_?

There was a problem hiding this comment.

Ah, true, I have missed that one.

Done.

test=develop

test=develop

| "conv_relu_mkldnn_fuse_pass", // | ||

| "conv_elementwise_add_mkldnn_fuse_pass"})) { | ||

| "conv_elementwise_add_mkldnn_fuse_pass", | ||

| "conv_relu_mkldnn_fuse_pass"})) { |

There was a problem hiding this comment.

why do you change the order here?

There was a problem hiding this comment.

Because it was in the wrong order. It was overlooked in the previous fix of mkldnn passes.

There was a problem hiding this comment.

Could you please illustrate an example? Thanks.

There was a problem hiding this comment.

How about move the implementation of EnableMKLDNN and EnableMkldnnQuantizer to paddle_pass_builder.cc?

There was a problem hiding this comment.

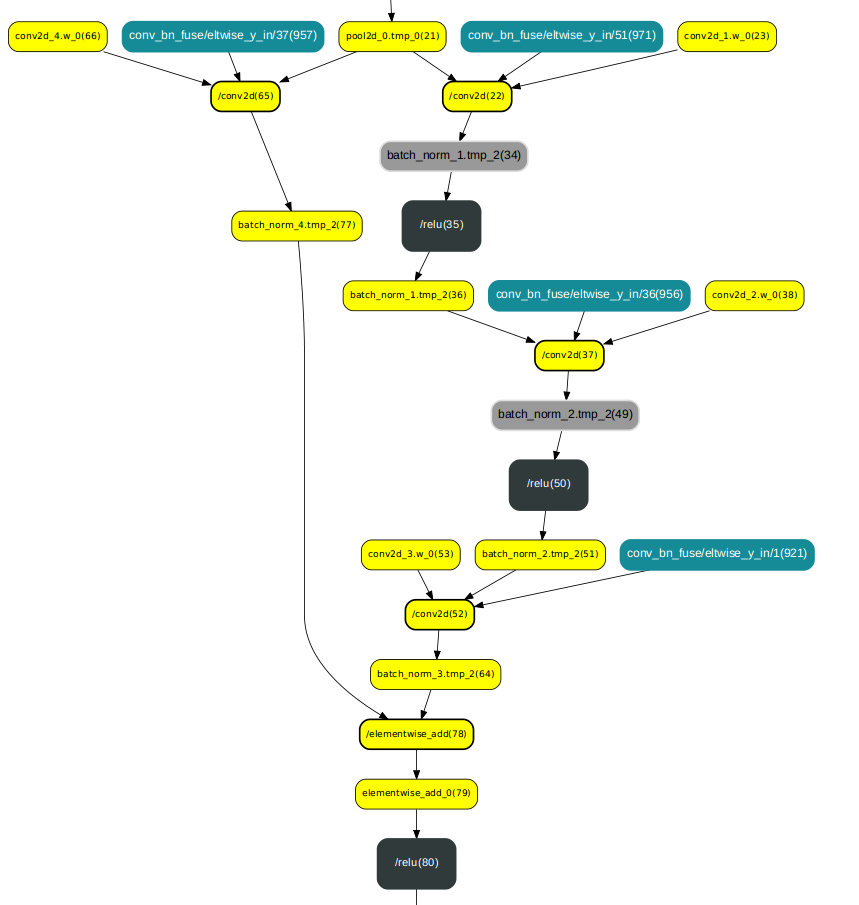

Sure. With ResNet50, after conv_bias_mkldnn_pass there picture is as follows:

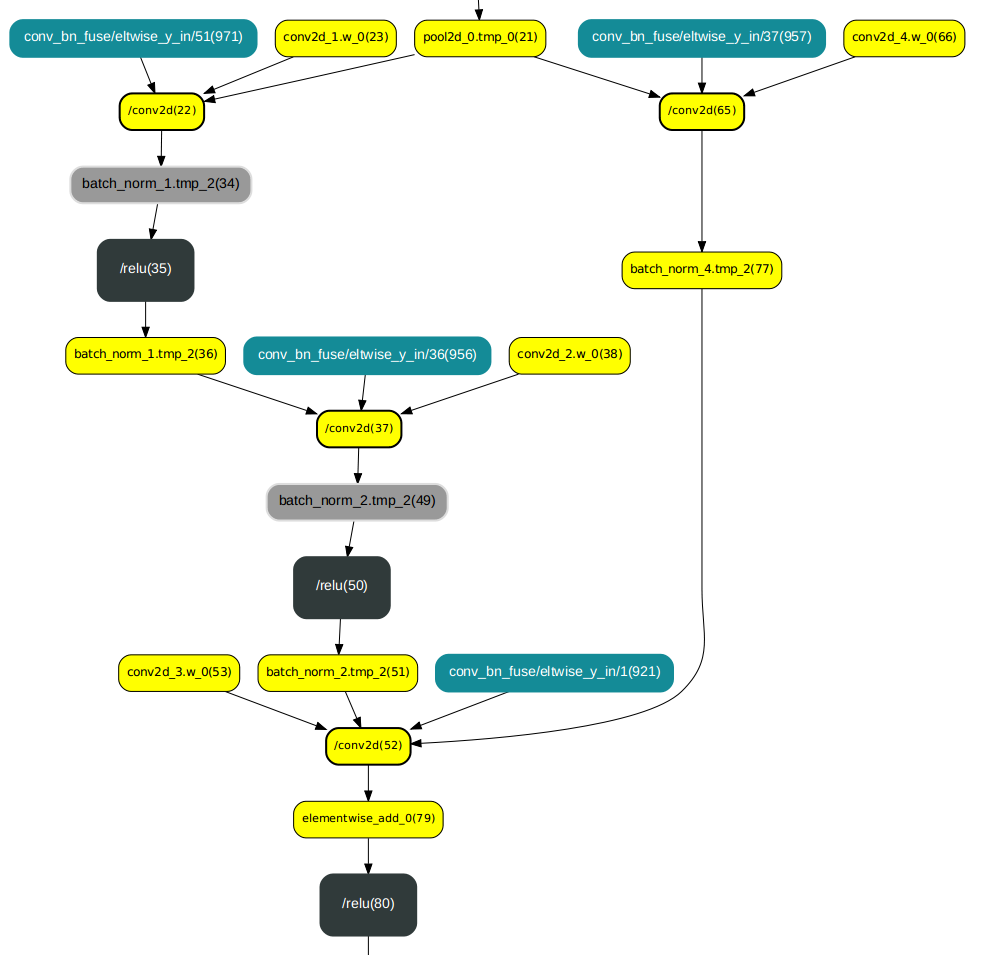

Then the conv_elementwise_add_mkldnn pass has to be applied:

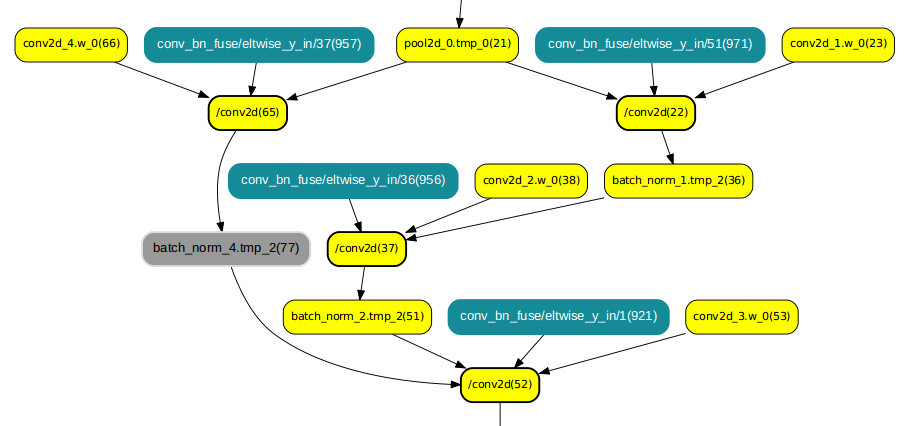

and then conv_relu_mkldnn_fuse_pass:

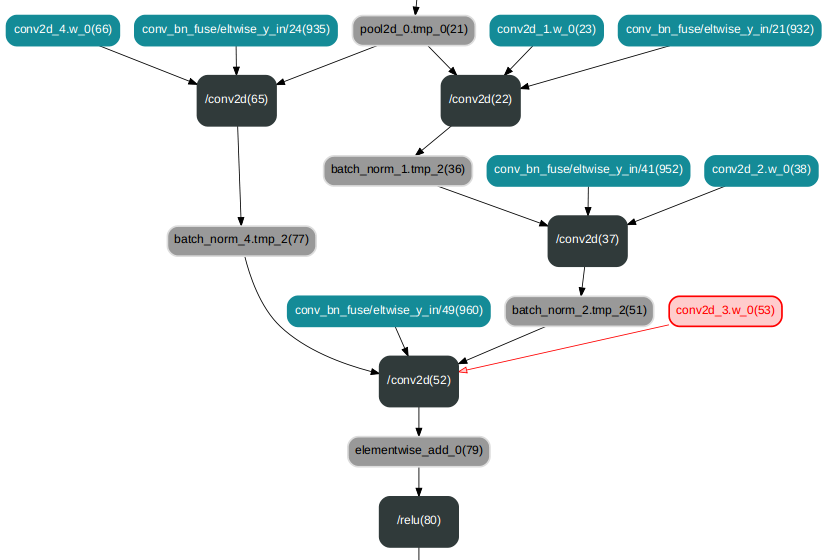

In the opposite order we would get remaining relu operators:

It was unnoticable until now, as some passes (conv_relu_mkldnn_fuse_pass among them) were unintentionally repeated. Eliminating arbitrary repetitions, the problem becomes visible, and this patch fixes it.

There was a problem hiding this comment.

How about move the implementation of

EnableMKLDNNandEnableMkldnnQuantizertopaddle_pass_builder.cc?

I do not think it can be done in an elegant and user-friendly way. A user has to have an option to enable MKL-DNN and MkldnnQuantizer, so AnalysisConfig is the best place to have the methods in. Implementation of the methods is minimal.

There was a problem hiding this comment.

I mean could you move line 122-134 from paddle_pass_builder.h to paddle_pass_builder.cc?

| << "EnableMKLDNN() only works when IR optimization is enabled."; | ||

| } else { | ||

| pass_builder()->EnableMKLDNN(); | ||

| } |

There was a problem hiding this comment.

Could we remove line 244-247? Since I see LOG(ERROR) << "Please compile with MKLDNN first to use MKLDNN";use_mkldnn_ = false; is already in pass_builder()->EnableMKLDNN();

Could we remove line 258-261 for the same reason?

| "conv_relu_mkldnn_fuse_pass", // | ||

| "conv_elementwise_add_mkldnn_fuse_pass"})) { | ||

| "conv_elementwise_add_mkldnn_fuse_pass", | ||

| "conv_relu_mkldnn_fuse_pass"})) { |

There was a problem hiding this comment.

I mean could you move line 122-134 from paddle_pass_builder.h to paddle_pass_builder.cc?

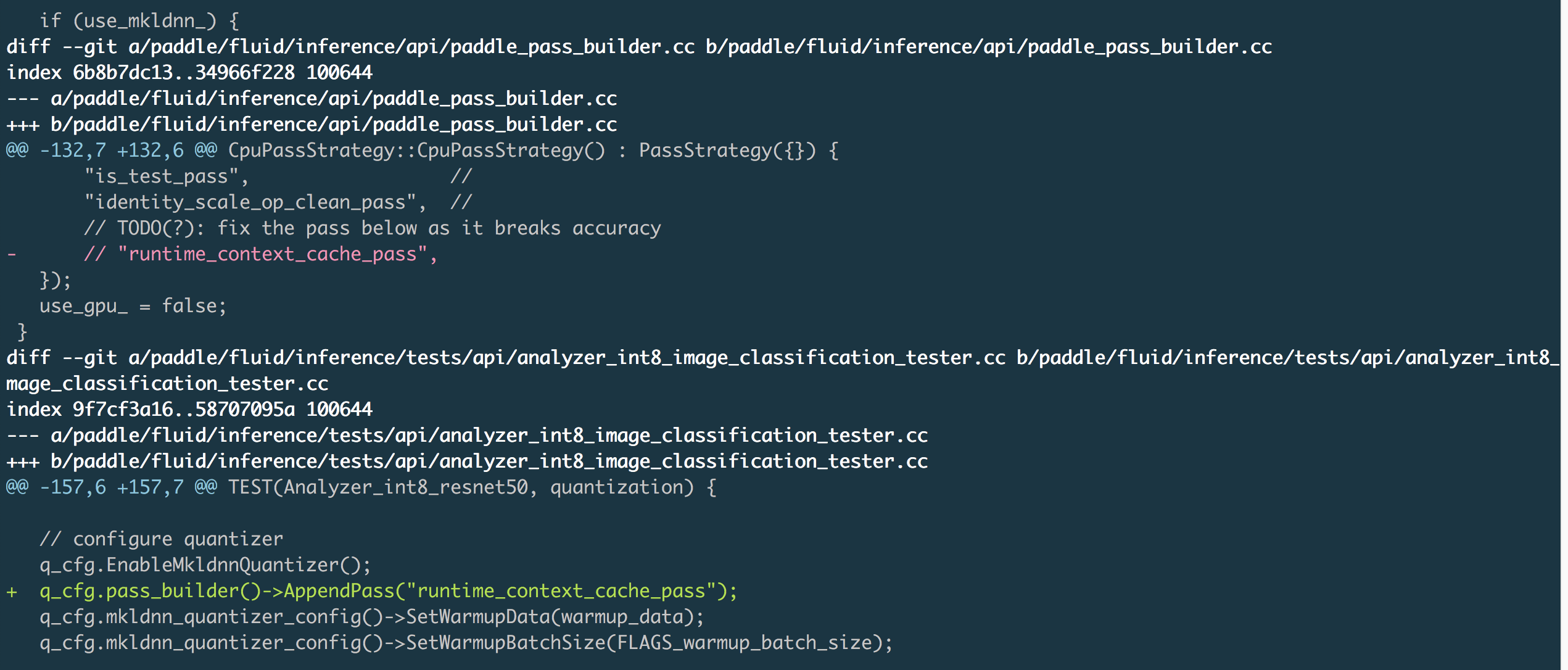

| "identity_scale_op_clean_pass", // | ||

| "runtime_context_cache_pass", // | ||

| // TODO(?): fix the pass below as it breaks accuracy | ||

| // "runtime_context_cache_pass", |

There was a problem hiding this comment.

Could you not delete runtime_context_cache_pass here? I will try to fix this problem in another PR. And your PR could be merged at first.

|

Do you want us to squash and merge this PR when it is all green? |

|

I squash and merge the PR, and you can do cherry-pick now. |

This patch fixes redundant adding passes multiple times.

test=develop