fix dataset reading and add support for full dataset#16559

fix dataset reading and add support for full dataset#16559luotao1 merged 13 commits intoPaddlePaddle:developfrom

Conversation

test=develop

… wojtuss/fix-int8-test

test=develop

| "depthwise_conv_mkldnn_pass", "conv_bn_fuse_pass", | ||

| "conv_eltwiseadd_bn_fuse_pass", "conv_bias_mkldnn_fuse_pass", | ||

| "conv_elementwise_add_mkldnn_fuse_pass", "conv_relu_mkldnn_fuse_pass", | ||

| "fc_fuse_pass", "is_test_pass"}); |

There was a problem hiding this comment.

Fix SetPass will in another PR?

There was a problem hiding this comment.

@luotao1 , it turned out that no special fix is required for this and the call to SetPasses() can be simply removed now. Some other modifications must have fixed the accuracy problem. Of course, repeated passes problem would still benefit from a cleanup, but it is not critical here. We will prepare a PR with a cleanup later.

There was a problem hiding this comment.

We will prepare a PR with a cleanup later.

Got it. Does new PR fix #16559 (comment) as well?

There was a problem hiding this comment.

I will adjust the fps calculation and remove redundant PredictionRun method in this PR.

WIP

| @@ -148,7 +151,7 @@ inference_analysis_api_test_with_fake_data(test_analyzer_mobilenet_depthwise_con | |||

| if(WITH_MKLDNN) | |||

| set(INT8_DATA_DIR "${INFERENCE_DEMO_INSTALL_DIR}/int8") | |||

There was a problem hiding this comment.

please change the INT8_DATA_DIR if you change the data. Otherwise, since INT8_DATA_DIR is already existed ON CI, it will be error.

There was a problem hiding this comment.

With this patch, we keep the data.bin file name but change the archive name into imagenet_val_100_tail.tar.gz, so there should be no conflict of archive names, or might there be a conflict of data.bin between several builds?

There was a problem hiding this comment.

if INT8_DATA_DIR is already exised ON CI, it will not download imagenet_val_100_tail.tar.gz

There was a problem hiding this comment.

do you mean that several CI builds share the same directory with the dataset?

There was a problem hiding this comment.

Our CI cache the INFERENCE_DEMO_INSTALL_DIR for speedup.

There was a problem hiding this comment.

set(INT8_DATA_DIR "${INFERENCE_DEMO_INSTALL_DIR}/int8v2") or other name

| DEFINE_int32(iterations, 0, "number of batches to process"); // setting to 0, | ||

| // means process | ||

| // the whole | ||

| // dataset |

There was a problem hiding this comment.

comment from 46-49 should be in one line.

| } | ||

| } | ||

|

|

||

| // With support for multiple batches (multiple outputs) |

There was a problem hiding this comment.

I wonder why do you rewrite this again, could you enhance line331predictor->Run(inputs[i], outputs, batch_size); to predictor->Run(inputs[i], &(*outputs)[i], FLAGS_batch_size);

There was a problem hiding this comment.

The original PredictionRun method discards outputs of all but the last iteration and outputs is of type std::vector<PaddleTensor> *. To calculate average accuracy over all iterations we have to keep all the output data and have outputs of type std::vector<std::vector<PaddleTensor>> *. I could modify just the original function, but it would require updates also in several other test files. I thought it would be refactored later, but I will do it here if you wish.

There was a problem hiding this comment.

It's better to refactor here, thanks very much!

There was a problem hiding this comment.

@luotao1 , I realized that refactoring would require modification of the latency calculation formula. This could influence some latency statistics of other tests. I am not sure whether these statistics are being gathered after running the tests and whether changing them could break anything beyond the tests. I cannot verify that quickly enough so I left the refactoring for later.

There was a problem hiding this comment.

I wonder why refactoring requires modification of the latency calculation formula?

- Yours:

auto latency = elapsed_time / (iterations * num_times * FLAGS_batch_size);

PrintTime(FLAGS_batch_size, num_times, FLAGS_paddle_num_threads, 0, latency,

1);

- Ours:

PrintTime(batch_size, num_times, num_threads, tid, elapsed_time / num_times,

inputs.size());

Why you don't use ours?

There was a problem hiding this comment.

With the current implementation fps value would be incorrect. It is 1/latency, so latency is related to a single frame, not a batch. The current formula does not handle that correctly.

There was a problem hiding this comment.

If current implementation fps value would be incorrect, you can correct it directly.

test=develop

test=develop

paddle/fluid/inference/api/helper.h

Outdated

| << "ms ======"; | ||

| } | ||

| LOG(INFO) << "====== batch_size: " << batch_size << ", iterations: " << epoch | ||

| << ", repetitons: " << repeat << " ======"; |

| LOG(INFO) << "FP32 & INT8 prediction run: batch_size " << FLAGS_batch_size | ||

| << ", warmup batch size " << FLAGS_warmup_batch_size << "."; | ||

| PrintConfig(reinterpret_cast<const PaddlePredictor::Config *>(qconfig), true); | ||

| PrintConfig(reinterpret_cast<const PaddlePredictor::Config *>(config), true); |

There was a problem hiding this comment.

Since line502 is the same as line503, how about move line503 after line507?

|

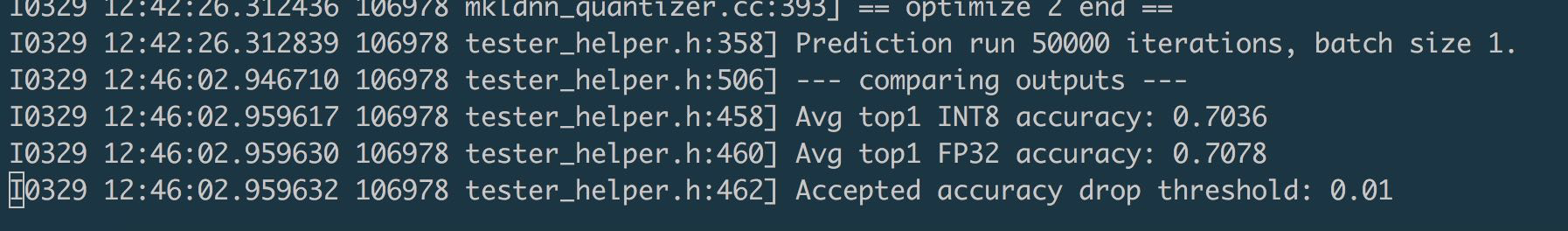

I test this PR on 6271 CPU with 50000 images generated by danqing. log: It seems the thoughput of FP32 is 75 and INT8 is 232? And the accuracy larger than 0.7? |

|

Besides, could you fix the compiler warning |

As for accuracy, it is for 100 images only, so it may be larger than 0.7. By default there is only one iteration of batch size 100, without warmup phase. The latency is not precise then. We assumed the precise latency and accuracy will be measured on the whole dataset, then the warmup is negligible. As for the accuracy for FP32, that was the problem with the passes I had on Friday. Today I have built it up from scratch and it worked fine. |

What is scratch? |

A separate PR is better. Besides, the thoughput of FP32 is 75 and INT8 is 232, which is different with V1 in |

|

"From scratch" means starting from clean directory. Oh, I have just noticed you have run this on the full dataset. Yes, with this test we get the following accuracy on the whole dataset: For throughput, you have to set larger batch_size. |

I clean the directory, and must add |

|

Then I am sending a new PR with the fix for passes. With it, the |

| std::vector<std::vector<PaddleTensor>> analysis_outputs; | ||

| std::vector<std::vector<PaddleTensor>> quantized_outputs; | ||

| LOG(INFO) << "--- FP32 prediction start ---"; | ||

| TestAnalysisPrediction(config, inputs, &analysis_outputs, true); |

There was a problem hiding this comment.

Why add TestAnalysisPrediction? Could you use TestOneThreadPrediction directly? Then you can remove TestAnalysisPrediction and line502-503

There was a problem hiding this comment.

To me, it seems cleaner this way for both AnalysisConfigs, less pointer casting. I can change it if you like.

There was a problem hiding this comment.

please change it. Thanks very much!

There was a problem hiding this comment.

I have the fix for repeated passes ready and could add the commit with the fix to this PR, or submit as a separate PR. What do you think?

A separate PR is better.

Submitted #16606

Could you please verify that this fixes the issue in the test on your machine?

| std::vector<std::vector<PaddleTensor>> analysis_outputs; | ||

| std::vector<std::vector<PaddleTensor>> quantized_outputs; | ||

| LOG(INFO) << "--- FP32 prediction start ---"; | ||

| TestAnalysisPrediction(config, inputs, &analysis_outputs, true); |

There was a problem hiding this comment.

To me, it seems cleaner this way for both AnalysisConfigs, less pointer casting. I can change it if you like.

test=develop

test=develop

test=develop

|

@luotao1 , when comparing latency, keep in mind that here the latency does not include loading data as the inference starts when the whole dataset is loaded into memory |

69cdebb to

46aaa36

Compare

|

@luotao1 , I have added a comment regarding accuracy drop for FP32 inference: #16606 (comment) |

… wojtuss/fix-int8-test

test=develop

test=develop

|

The resnet50 result: |

I will investigate it. Please solve the conflict and use |

Done. |

|

PR_CI fails. Maybe an error caused by #16584 . You can change like |

|

Yes, that helped. Thank you. |

In this patch, we fix a bug in reading dataset and add support for reading a whole imagenet dataset preprocessed using the tool from #16529.

Most changes come from the diff between #16532 and merged #16399.

Additional methods are added to

tester_helper.h. Could be refactored to reuse existing methods but would require more changes in other tests - left to further refactoring.test=develop