The open benchmark where AI learns to fly.

Train a neural network to navigate drones through 3D worlds it has never seen, using nothing but a depth camera and raw flight state. No maps. No rules. No shortcuts.


Delivery, inspection, search and rescue: autonomous drones are being deployed everywhere, but the AI behind them is still developed behind closed doors. There's no standard way to measure whether one flight policy is better than another.

Swarm changes that. It's an open benchmark that puts every model on equal footing: 1,000 procedurally generated worlds, containerized evaluation, and a public leaderboard. No data leaks, no memorization — just raw skill.

The rules are simple:

- Your model gets a 128×128 depth image and a state vector

- It outputs velocity commands to fly the drone

- It has 60 seconds to navigate to a landing platform

- It must do this across cities, mountains, warehouses, forests, open terrain, and more — environments it has never seen before

The best model wins. That's it. Powered by the Bittensor network (Subnet 124).

Third-person view

FPV: what the drone sees

No GPS. No pre-built map. Just a depth camera and learned instincts.

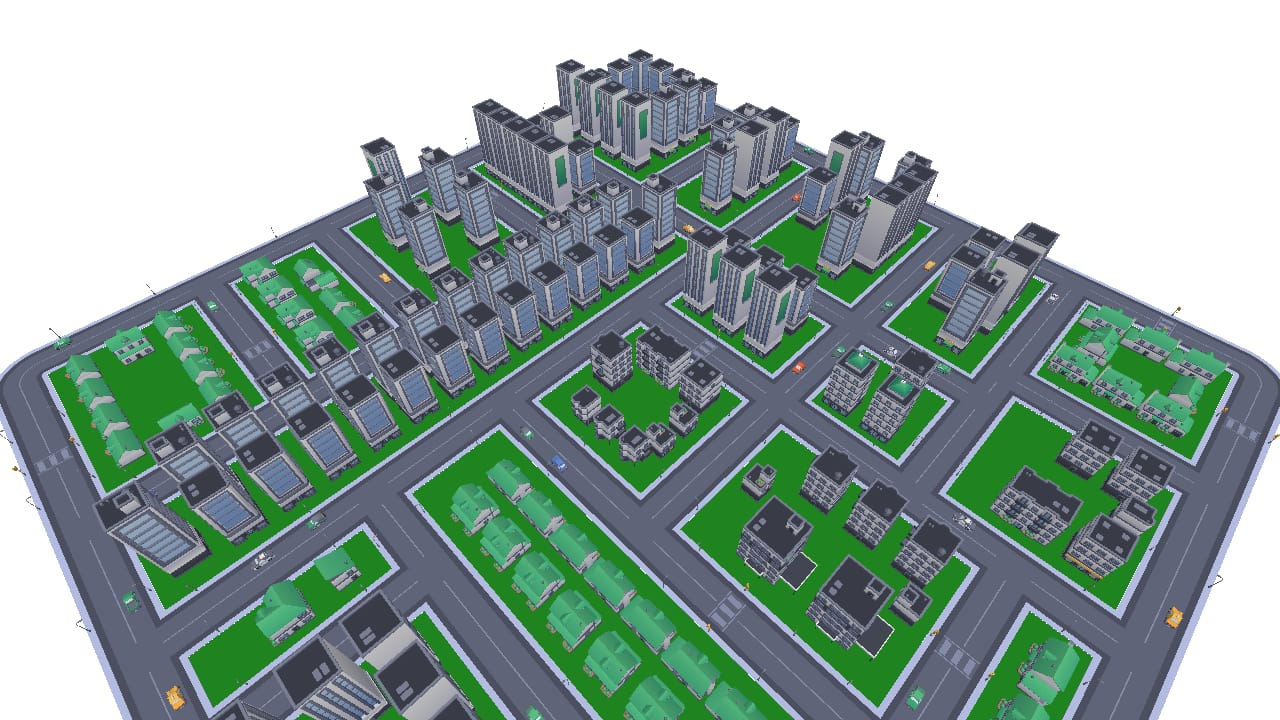

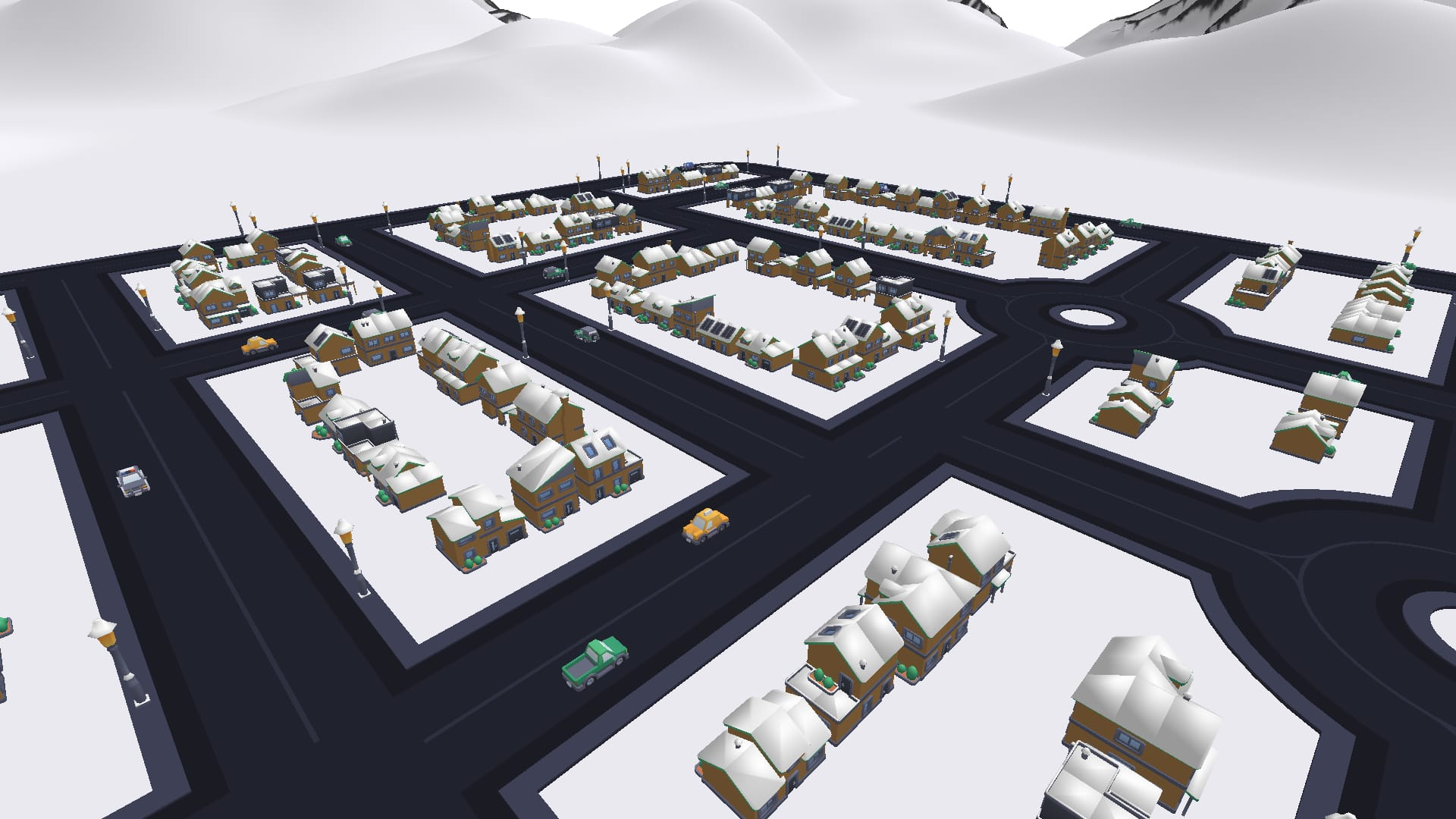

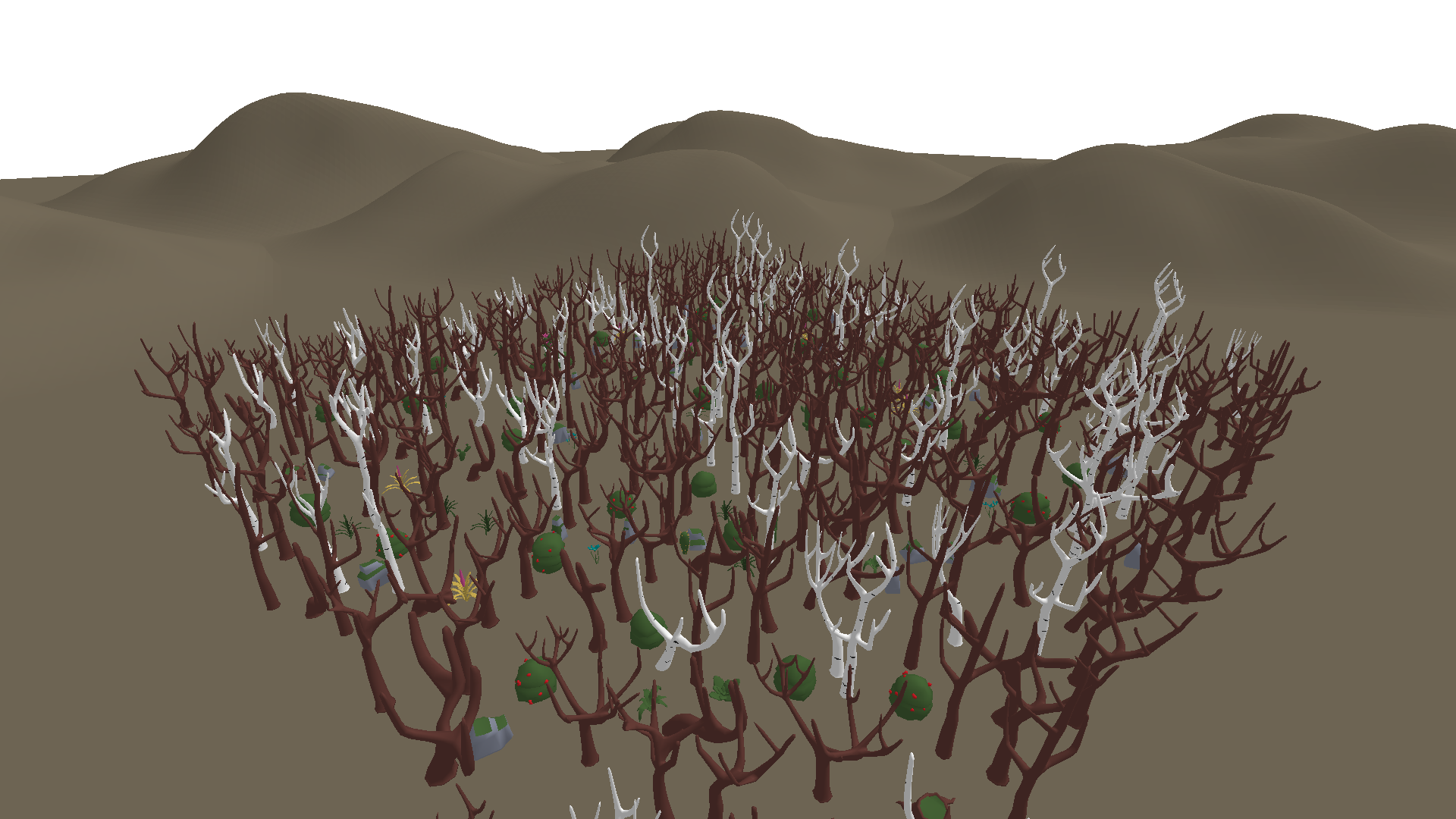

Every benchmark run generates unique worlds. Six environment types test completely different navigation skills — tight urban corridors, open-air precision, mountain terrain, village streets, indoor obstacle courses, and dense forests.

City: dense streets, buildings, intersections

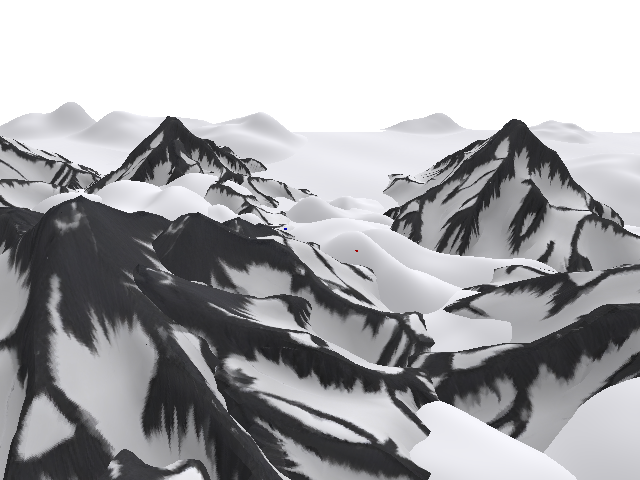

Ski Village: snow-roofed buildings, mountain backdrop

Mountains: procedural terrain, peaks and valleys

Warehouse: indoor, racks, cranes, 12 m ceiling

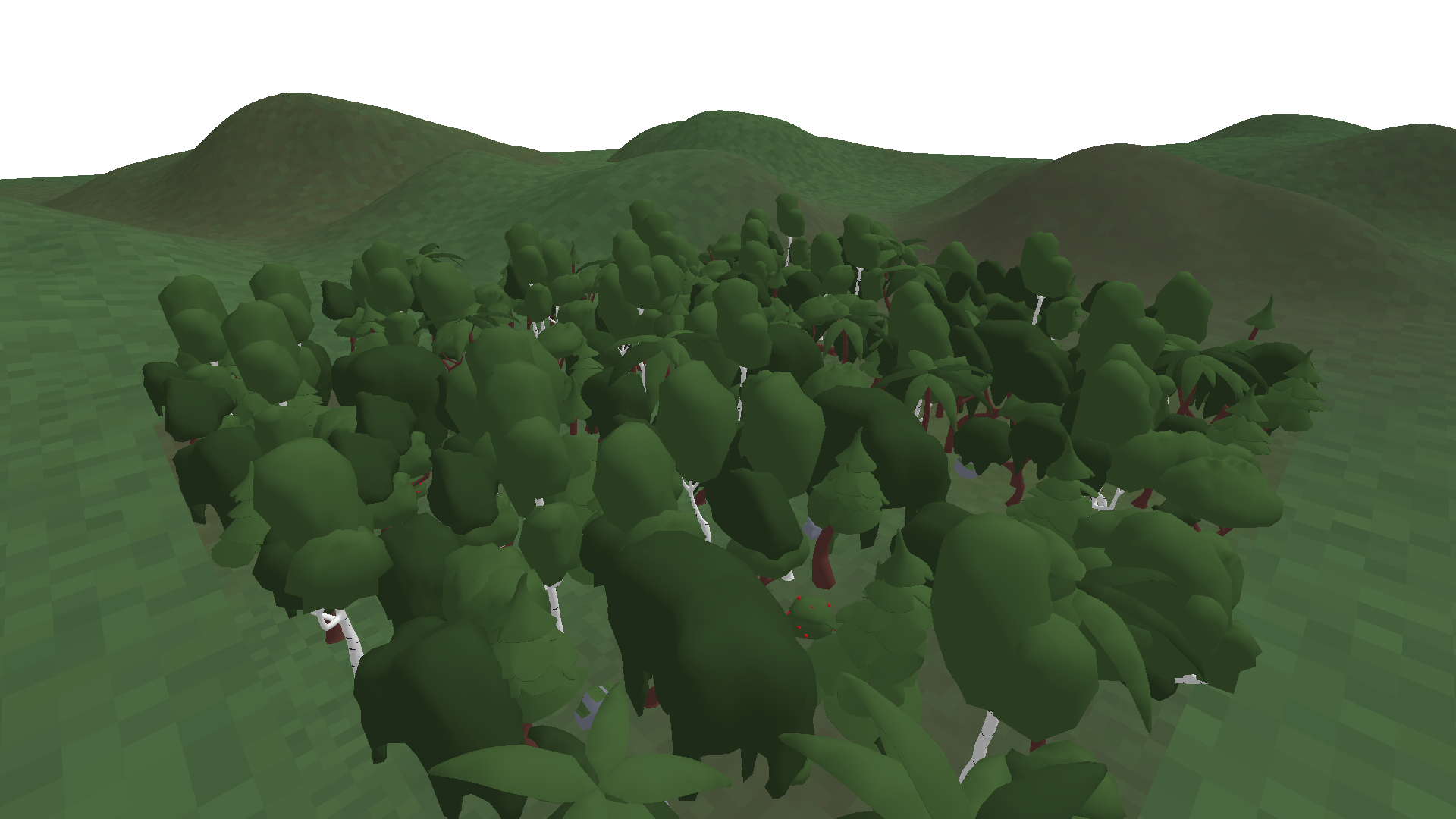

Normal: green canopy, full foliage

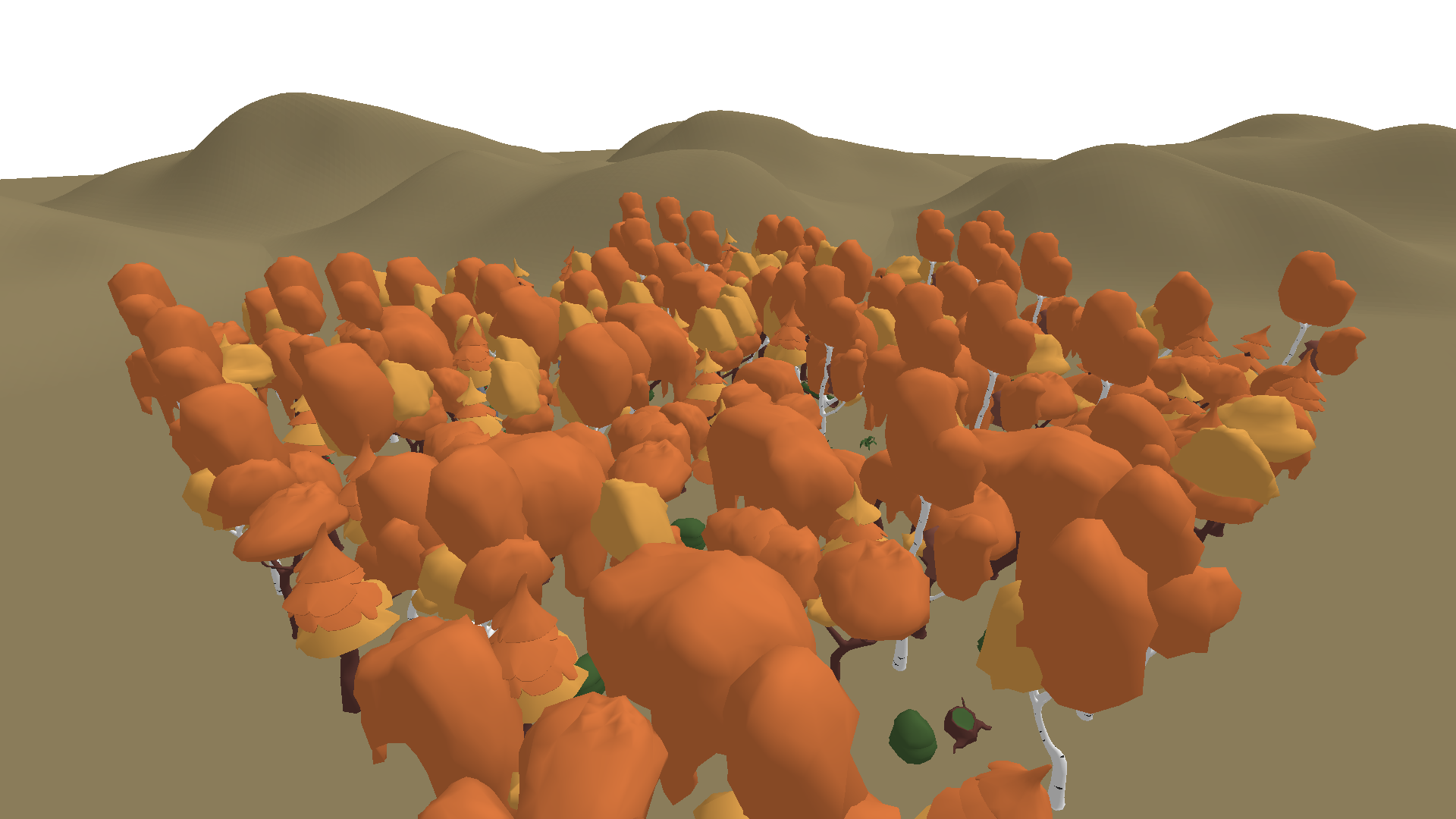

Autumn: orange and brown tones

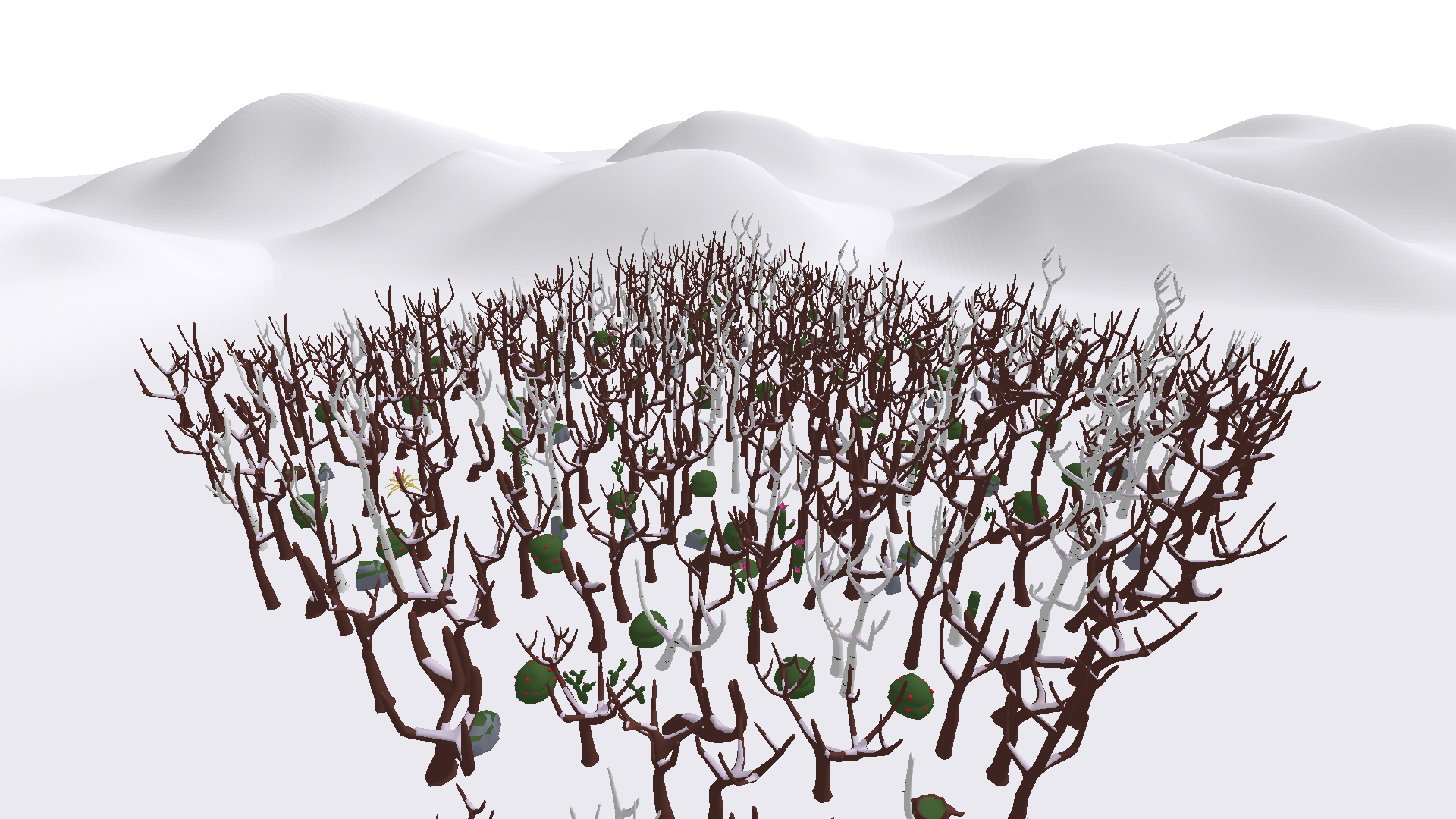

Snow: white terrain, bare branches

Dead: no leaves, dark ground

1,000 unique seeds per epoch — 6 environment types, each procedurally generated with unique layouts every run.

Develop, test, and benchmark your model without ever leaving the terminal.

```bash
pip install -e .
```

Once published on PyPI:

```bash
pip install swarm-benchmark
```

```bash
swarm doctor                                                            # Check environment readiness
swarm model test --source my_agent/                                     # Validate source folder
swarm model package --source my_agent/                                  # Bundle into Submission/submission.zip
swarm model verify --model Submission/submission.zip                    # Verify structure and compliance
swarm benchmark --model Submission/submission.zip --workers 4           # Run benchmark
swarm benchmark --model Submission/submission.zip --seeds-per-group 1   # Quick test
swarm report                                                            # View results
```

Most commands support --json for CI/CD pipelines. Full docs: CLI reference.

Depth Camera (128×128 image) + State Vector (position · velocity · orientation) → Your Model → Flight Commands [dir_x, dir_y, dir_z, speed, yaw] → Drone

Your model receives a depth image and flight state at 50 Hz. It outputs 5D velocity commands. The drone has 60 seconds to navigate to a landing platform and touch down safely.

There are no waypoints, no GPS, no obstacle coordinates. The model must learn to read the depth image and react — just like a real pilot would.
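To make the input/output contract above concrete, here is a minimal sketch of a hand-written reactive policy. The function name `act`, the depth convention (larger value means farther away, in metres), the sign conventions, and the command value ranges are all assumptions made for illustration; the Miner Guide defines the real agent interface. A trained network would replace the hand-coded logic.

```python
# Illustrative sketch only: names, ranges, and sign conventions are assumed,
# not taken from the Swarm agent interface.

DEPTH_SIZE = 128                        # depth image is 128x128
CONTROL_HZ = 50                         # observations arrive at 50 Hz
TIME_LIMIT_S = 60                       # episode time limit in seconds
MAX_STEPS = CONTROL_HZ * TIME_LIMIT_S   # control steps available per episode


def column_band_mean(depth, col_start, col_end):
    """Mean depth over the vertical band of columns [col_start, col_end)."""
    total = 0.0
    count = 0
    for row in depth:
        for value in row[col_start:col_end]:
            total += value
            count += 1
    return total / count


def act(depth, state):
    """Return [dir_x, dir_y, dir_z, speed, yaw] for one control step.

    Steers toward whichever third of the image has the most free space.
    `state` is accepted but unused in this toy policy.
    """
    third = DEPTH_SIZE // 3
    left = column_band_mean(depth, 0, third)
    center = column_band_mean(depth, third, 2 * third)
    right = column_band_mean(depth, 2 * third, DEPTH_SIZE)

    if center >= left and center >= right:
        dir_y, yaw = 0.0, 0.0           # path ahead is the clearest
    elif left > right:
        dir_y, yaw = 0.5, 0.3           # assumed convention: positive = left
    else:
        dir_y, yaw = -0.5, -0.3

    # Slow down as obstacles straight ahead get closer (10 m is an arbitrary scale).
    speed = min(1.0, center / 10.0)
    return [1.0, dir_y, 0.0, speed, yaw]
```

At 50 Hz over a 60-second limit, a policy gets at most `MAX_STEPS` decisions per episode, so per-step inference time matters as much as accuracy.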

| Component | Weight | What It Measures |
|---|---|---|
| Success | 45% | Did the drone land on the platform? |
| Speed | 45% | How fast, relative to the time limit? |
| Safety | 10% | Minimum clearance from obstacles during flight |

score = 0.45 × success + 0.45 × time + 0.10 × safety
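The weighted sum above can be sketched in a few lines. Only the three weights come from this README; how the `time` and `safety` terms are normalized is not specified here, so the linear time bonus, the zero time term on failure, and the clamping of safety to [0, 1] are assumptions for illustration.

```python
# Sketch of the per-episode score. Weights are from the README; the
# normalization of the time and safety terms is an assumption.

TIME_LIMIT_S = 60.0


def time_term(success, flight_time_s):
    """Assumed: linear bonus for finishing early, zero if the run failed."""
    if not success:
        return 0.0
    remaining = TIME_LIMIT_S - flight_time_s
    return max(0.0, min(1.0, remaining / TIME_LIMIT_S))


def episode_score(success, flight_time_s, safety):
    """score = 0.45 * success + 0.45 * time + 0.10 * safety."""
    success_term = 1.0 if success else 0.0
    safety_term = max(0.0, min(1.0, safety))  # assumed to lie in [0, 1]
    return (0.45 * success_term
            + 0.45 * time_term(success, flight_time_s)
            + 0.10 * safety_term)
```

Under these assumptions, a successful landing at the 30-second mark with perfect clearance scores 0.45 + 0.225 + 0.10 = 0.775.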

Ranking is by average score across 1,000 seeds. No lucky runs — you need consistency. New models must pass a screening gate (champion score + 0.015) before running the full benchmark.
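A rough sketch of the ranking and screening logic described above follows. The function names are hypothetical, and whether the gate comparison is inclusive (`>=`) or strict is an assumption; only the averaging across seeds and the 0.015 margin come from this README.

```python
from statistics import mean

SCREENING_MARGIN = 0.015  # margin over the champion, from the README


def benchmark_score(per_seed_scores):
    """Ranking score: plain average over all evaluated seeds."""
    return mean(per_seed_scores)


def passes_screening(screening_score, champion_score, margin=SCREENING_MARGIN):
    """Assumed inclusive comparison against champion score + margin."""
    return screening_score >= champion_score + margin
```

Because the ranking is an average, one perfect run among failures does little: `benchmark_score([1.0, 0.0, 0.0, 0.0])` is 0.25, well below a model that scores a steady 0.6 on every seed.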

Seeds rotate every 7 days (Monday 16:00 UTC). Each validator generates its own 1,000 seeds per epoch. All seeds are published on swarm124.com for transparency.
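If you schedule benchmark runs around the weekly rotation, the next boundary can be computed with the standard library. This is a small helper written for illustration (the name `next_rotation` is hypothetical and not part of the Swarm CLI); it relies only on the Monday 16:00 UTC schedule stated above.

```python
from datetime import datetime, timedelta, timezone


def next_rotation(now):
    """Return the first Monday 16:00 UTC strictly after `now`.

    `now` must be a timezone-aware datetime.
    """
    now = now.astimezone(timezone.utc)
    candidate = now.replace(hour=16, minute=0, second=0, microsecond=0)
    days_ahead = (0 - candidate.weekday()) % 7  # Monday is weekday 0
    candidate += timedelta(days=days_ahead)
    if candidate <= now:
        # Already at or past this week's rotation: move to next Monday.
        candidate += timedelta(days=7)
    return candidate


if __name__ == "__main__":
    print(next_rotation(datetime.now(timezone.utc)).isoformat())
```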


Build a drone pilot from zero. The Miner Guide covers the agent interface, CLI workflow, submission format, and how to push to the leaderboard.


Evaluate models on your hardware. The Validator Guide covers Docker setup, PM2 launch, and auto-updates.


The models trained here don't stay in simulation.

Langostino is the open-source drone we built to prove it — ROS2, Raspberry Pi, INAV, 3D-printed parts. Full assembly guide and bill of materials included. Anyone can build one.

Train in Simulation → Compete on the Leaderboard → Deploy on Real Hardware

Distributed under the MIT License. See LICENSE for details.