
[PERSISTENCE] SavePoints and Rollbacks design document (Issue #493) #533




| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,154 @@ | ||

| # Savepoints and Rollbacks Design <!-- omit in toc --> | ||

|

|

||

| This document is a design guideline that will be used as a technical reference for the implementation of the `Savepoints` and `Rollbacks`. | ||

|

|

||

| The entry points will be in the `Persistence` module but there are going to be points of integration with `Utility` as well. | ||

|

|

||

|

|

||

| - [Background](#background) | ||

| - [Data Consistency (the "C" in CAP Theorem)](#data-consistency-the-c-in-cap-theorem) | ||

| - [Definitions](#definitions) | ||

| - [Savepoints](#savepoints) | ||

| - [Snapshots](#snapshots) | ||

| - [Rollbacks](#rollbacks) | ||

| - [Minimum Viable Product](#minimum-viable-product) | ||

| - [Long-term (🚀 🌔) ideas](#long-term---ideas) | ||

| - [Savepoints](#savepoints-1) | ||

| - [Rollbacks](#rollbacks-1) | ||

| - [Further improvements](#further-improvements) | ||

| - [Random thoughts](#random-thoughts) | ||

|

|

||

| ## Background | ||

|

|

||

| At the time of writing, it seems that we have identified the points within the codebase at which we should take some action to support savepoints and rollbacks. | ||

| This means that we probably know the **WHEN**s and **WHERE**s; the scope of this document is to identify the **WHAT**s and **HOW**s. | ||

|

|

||

| It might sound simple, but the ability to recover from a failure that would prevent the node from committing a block deterministically is a critical feature, and it's a non-trivial problem to solve. | ||

|

|

||

| As it stands we use multiple data stores (please refer to [PROTOCOL_STATE_HASH.md](../PROTOCOL_STATE_HASH.md) for additional information about their designs): | ||

|

|

||

| | Component | Data Type | Underlying Storage Engine | | ||

| | --------------------- | ------------------------------------- | --------------------------------- | | ||

| | Data Tables | SQL Database / Engine | PostgreSQL | | ||

| | Transaction Indexer | Key Value Store | BadgerDB | | ||

| | Block Store | Key Value Store | BadgerDB | | ||

| | Merkle Trees | Merkle Trie backed by Key-Value Store | BadgerDB | | ||

|

|

||

| Something worth mentioning specifically about `Merkle Trees` is the fact that we store a separate tree for each `Actor` type (i.e. `App`, `Validator`, `Fisherman`, etc.), for `Accounts` & `Pools` and for the data types such as `Transactions`, `Params` and `Flags`. | ||

|

|

||

| This means that each tree is a separate data store. | ||

|

|

||

| ### Data Consistency (the "C" in CAP Theorem) | ||


|

||

|

|

||

| In the simplest case, we could have a monolithic setup where the node runs on the same machine as the `PostgreSQL` database and the `BadgerDB` key-value stores, although we cannot assume this, especially in this day and age. Even then, we would still be dealing with a distributed system, since each of these data stores could fail independently. | ||

|

|

||

| Imagine a scenario in which the state is persisted durably to the `PostgreSQL` database (which is what happens after an SQL `COMMIT` statement) but one or more of the `BadgerDB` key-value stores fail due to storage issues. What would happen next? That's **non-deterministic**. | ||

|

|

||

| Even a single node is a small distributed system because it has multiple separate components that are communicating with each other (on the same machine or via unreliable networks). | ||


|

||

|

|

||

| Since a node is part of a distributed network of nodes that are all trying to reach consensus on the "World State", we have to make sure that the data that we are storing is consistent internally in the first place. | ||

|

|

||

| In the event of a failure at any level during this process, we cannot commit state non-atomically. That would make the node inconsistent and put it in a non-deterministic state that is not recoverable unless we have a way to roll back to a previous, clean state, with all the implications that this would have on the network (social coordination comes to mind). | ||

|

|

||

| We either **succeed** across **all** data stores or we **fail**, and we have to be able to **recover** from that failure as if **nothing had happened**. | ||


|

||

|

|

||

|

|

||

| The following diagram illustrates the high-level flow: | ||

|

|

||

| ```mermaid | ||

| flowchart TD | ||

| NewBlock[A Block was just committed\nor we are at Height 0] -->CreateSavepoint(Create SavePoint) | ||

| CreateSavepoint --> UpdatingState(We are creating\na new block.\nUpdating the State) | ||

| UpdatingState --> ShouldCommit | ||

| ShouldCommit{Should we commit?} --> |Yes| ReceivedOKFromDatastores{Have all\nthe datastores\ncommitted\nsuccessfully?} | ||

| ShouldCommit --> |No| NewBlock | ||

| ReceivedOKFromDatastores -->|Yes| Committed[Committed successfully] --> |Repeat with\nthe new Block| NewBlock | ||

| ReceivedOKFromDatastores -->|No, we have at least one failure| Rollback[Rollback] --> |Repeat with\nthe rolled back Block | NewBlock | ||

| ``` | ||

|

|

||
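The flow in the diagram above can be sketched in Go as follows. This is a minimal illustration and not the `Persistence` module's API: `Store`, `Savepoint`, `Restore`, `Apply` and `ApplyBlock` are hypothetical names, each store is an in-memory map, and a savepoint is assumed to be a full copy of that map.

```go
package main

import (
	"errors"
	"fmt"
)

// Store is a hypothetical in-memory stand-in for one data store.
// It is not the Persistence module's API.
type Store struct {
	name     string
	data     map[string]string
	failNext bool // test hook: makes the next Apply call fail
}

func NewStore(name string) *Store {
	return &Store{name: name, data: map[string]string{}}
}

func clone(m map[string]string) map[string]string {
	out := make(map[string]string, len(m))
	for k, v := range m {
		out[k] = v
	}
	return out
}

// Savepoint returns a full copy of the current state.
func (s *Store) Savepoint() map[string]string { return clone(s.data) }

// Restore replaces the current state with a copy of the savepoint.
func (s *Store) Restore(sp map[string]string) { s.data = clone(sp) }

// Apply writes a block's changes, or fails before writing anything.
func (s *Store) Apply(writes map[string]string) error {
	if s.failNext {
		s.failNext = false
		return errors.New(s.name + ": commit failed")
	}
	for k, v := range writes {
		s.data[k] = v
	}
	return nil
}

// ApplyBlock follows the diagram: create a savepoint, update the state, then
// either keep the new state on every store or roll every store back.
func ApplyBlock(stores []*Store, writes map[string]string) error {
	savepoints := make([]map[string]string, len(stores))
	for i, s := range stores {
		savepoints[i] = s.Savepoint()
	}
	for _, s := range stores {
		if err := s.Apply(writes); err != nil {
			// At least one store failed: restore all of them.
			for i, st := range stores {
				st.Restore(savepoints[i])
			}
			return fmt.Errorf("rolled back: %w", err)
		}
	}
	return nil
}

func main() {
	sql, kv := NewStore("sql"), NewStore("kv")
	stores := []*Store{sql, kv}
	fmt.Println(ApplyBlock(stores, map[string]string{"height": "1"}))
	kv.failNext = true // simulate a storage failure on one store
	fmt.Println(ApplyBlock(stores, map[string]string{"height": "2"}))
	fmt.Println(sql.data["height"], kv.data["height"])
}
```

Copying the whole state for every savepoint is only viable for a toy example; it is used here to keep the all-or-nothing behaviour easy to see.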

| ## Definitions | ||

|

|

||

| ### Savepoints | ||

|

|

||

| A savepoint is the beginning of a database transaction (or distributed transaction), created right after a successful commit, that allows recreating a perfect copy of the state as it was when the savepoint was created. | ||

|

|

||

| ### Snapshots | ||

|

|

||

| A snapshot is an artifact that encapsulates a savepoint. In V0 terms, it would be a shareable copy of the data directory. In V1 terms, it is going to be a compressed archive that, once decompressed and loaded into the node, allows us to recover the state of the node at the height at which the snapshot was created. | ||

|

|

||

| ### Rollbacks | ||

|

|

||

| A rollback is the process of cleanly reverting the state of the node to a previous state that we have saved in a savepoint. | ||


|

||

|

|

||

| ### Minimum Viable Product | ||

|

|

||

| After having examined the `Persistence` and `Utility` modules, I have identified the following areas that we can consider as part of the MVP. These could potentially be used as individual GitHub issues/deliverables: | ||

|

|

||

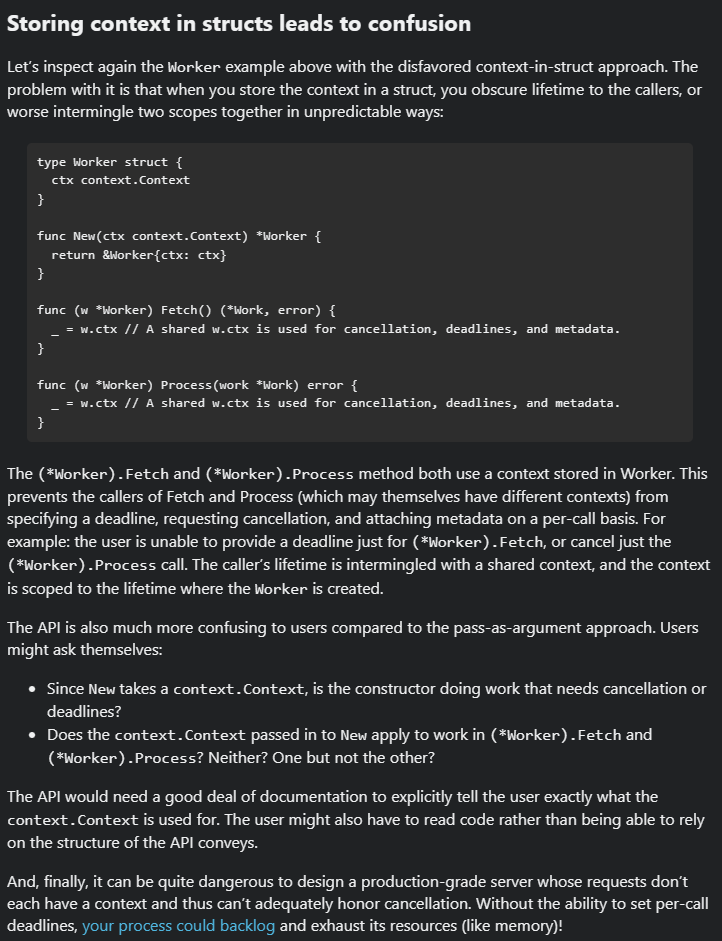

| - [**State changes invalidation and rollback triggering**] We need some sort of shared and thread-safe reference that is available for us across the whole call-stack that we can use in the event of a failure to flag that we need to abort whatever we are doing and rollback. This could be achieved via the use of the [context](https://pkg.go.dev/context) package. | ||

|

Collaborator

I'm in favor of the general idea of how to integrate/propagate/share a native Go context throughout the state if you have ideas in mind. The only thing I wanted to raise is that we have a

Contributor

Author

Fair point. I was initially thinking about embedding the go

Collaborator

This is interesting and makes a ton of sense. I completely agree that having The very first thing we did though had a struct called Don't have a strong opinion if we should just use

Contributor

Author

Devil's advocate: What if what everyone else is doing is wrong because they are all copying each other? (I should be spending more time looking at other projects, unfortunately I don't have good or bad examples, just assuming) Or maybe sometimes a pattern makes sense but when applied it can get "lost in translation". It makes me think about this: Just kidding. The context is usually used to define the lifetime boundaries of what normally is an HTTP request. In our case the "request" is effectively a "tentative world state transition application to the local state subject to byzantine consensus and unexpected errors".

Collaborator

I had to tweet this: https://twitter.com/olshansky/status/1644090374242332672 |

||

|

|

||

| - [**Ensure atomicity across data stores**] We need to make sure that we are using transactions correctly and that we are not accidentally committing state ahead of time in any of the data-stores. | ||

|

Collaborator

Some context to keep in mind. Remember that Postgres is like a view into the key-value stores. The state hash (the integrity) is the combination of all the tree roots. Postgres is what we use for business logic operations so we don't continuously get protos, unmarshal them, load them into memory, etc... Here's Cosmos' mega thread: cosmos/cosmos-sdk#8297

Contributor

Author

Yeah, of course. I would add that Postgres is a "historicized" view of the KV stores at different heights. That's why it's important to keep them in sync.

Collaborator

Indexed may be the wrong term here but also related IMO. Fwiw, companies like https://www.covalenthq.com/ are basically (I'm WAYYYY oversimplifying) huge relational DBs.

Contributor

Author

Yeah, I guess Since :) |

||

|

|

||

| - [**Distributed commits across data-stores**] We need to implement a 2PC (two-phase commit) or 3PC (three-phase commit) protocol that should make sure that the state has been committed safely on **all** data stores before it is considered `valid`. | ||

|

|

||

| - [**Savepoints**] The simplest version could be a database transaction that can simply be discarded. The uncommitted transaction sits in memory until it's flushed to storage, so rolling back to a savepoint would be as simple as discarding the non-pristine version of the state in memory. | ||

|

Collaborator

The issue I see is that in order to validate the StateHash (as a validator or full node) or create a new one (as a proposer), we need to:

What if:

Contributor

Author

Just to make sure I understand, here's an example of what I envisage:

Am I missing something?

Collaborator

We're on the same page. Just extra 💭 below. Usage:

Tools available:

Contributor

Author

💯 |

||

|

|

||

| - [**Rollbacks**] Rolling back to a savepoint would mean not only that the state has been restored to the previous savepoint but also that the node has to go back into a state that allows it to proceed with its normal operation (i.e. all the modules should behave as if nothing had happened). | ||

|

Collaborator

+1 Are you thinking the FSM might be able to help with that?

Contributor

Author

Yeah, potentially. If we did/do things well, the state should be completely a We might encounter something that needs refactoring along the way but that would be tech debt that we have to pay back I guess.

Contributor

Author

LOL I have so much tech debt accumulated in multiple countries that one day or another, with the rise of AI, it will become sentient and come after me. 😨 |

||

|

|

||

| - [**Extensive testing**] We need to make sure that we have good test coverage for all the above scenarios and that we can simulate failures in a controlled environment. | ||


|

||

|

|

||

| - [**Tooling**] The CLI should provide ways to create savepoints and perform rollbacks, e.g. `p1 persistence rollback --num_blocks=5` | ||

|

|

||
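To make the distributed-commit item in the list above more concrete, here is a minimal two-phase commit sketch. The `Participant` interface, `memStore` and `TwoPhaseCommit` are hypothetical and do not reflect existing code; the sketch also assumes that a participant which votes "yes" in the prepare phase can always commit afterwards.

```go
package main

import (
	"errors"
	"fmt"
)

// Participant is a hypothetical interface that each data store would satisfy.
type Participant interface {
	Prepare() error // phase 1: stage the writes and vote; an error is a "no" vote
	Commit()        // phase 2: make the staged writes durable
	Abort()         // phase 2: discard the staged writes
}

// memStore is an in-memory participant used only for illustration.
type memStore struct {
	name      string
	staged    string
	committed string
	voteNo    bool // test hook: makes Prepare fail
}

func (m *memStore) Prepare() error {
	if m.voteNo {
		return errors.New(m.name + ": cannot prepare")
	}
	return nil
}

func (m *memStore) Commit() { m.committed, m.staged = m.staged, "" }

func (m *memStore) Abort() { m.staged = "" }

// TwoPhaseCommit commits on all participants only if every one of them
// voted "yes" in the prepare phase; otherwise it aborts on all of them.
func TwoPhaseCommit(ps []Participant) error {
	for _, p := range ps {
		if err := p.Prepare(); err != nil {
			for _, q := range ps {
				q.Abort()
			}
			return fmt.Errorf("aborted: %w", err)
		}
	}
	for _, p := range ps {
		p.Commit()
	}
	return nil
}

func main() {
	sql := &memStore{name: "sql", staged: "h=2", committed: "h=1"}
	kv := &memStore{name: "kv", staged: "h=2", committed: "h=1", voteNo: true}
	fmt.Println(TwoPhaseCommit([]Participant{sql, kv}))
	fmt.Println(sql.committed, kv.committed)
}
```

A real coordinator would also need to persist its decision so that it can recover from a crash between the two phases; that part is deliberately left out here.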

| ### Long-term (🚀 🌔) ideas | ||

|

|

||

| Apart from internal failures that should resolve themselves automatically whenever possible, nodes might require a way to save their state and restore it later, not necessarily at the previous block height. This could be useful for a number of reasons: | ||

|

|

||

| - To allow nodes to recover from an unforeseen crash (bugs) | ||

| - To facilitate socially coordinated rollbacks of the chain to a specific block height in the case of a consensus failure/chain-halt | ||


|

||

| - To improve operator experience when managing and upgrading their fleet of nodes | ||


|

||

| - Governance transactions that enable rolling back state subsets | ||

| - And more... | ||

|

|

||

| Performing operations like copying datadirs, compressing them, etc. is probably not the best approach. | ||

| Having first-class support for savepoints and rollbacks would be, IMHO, a much better solution. | ||

|

|

||

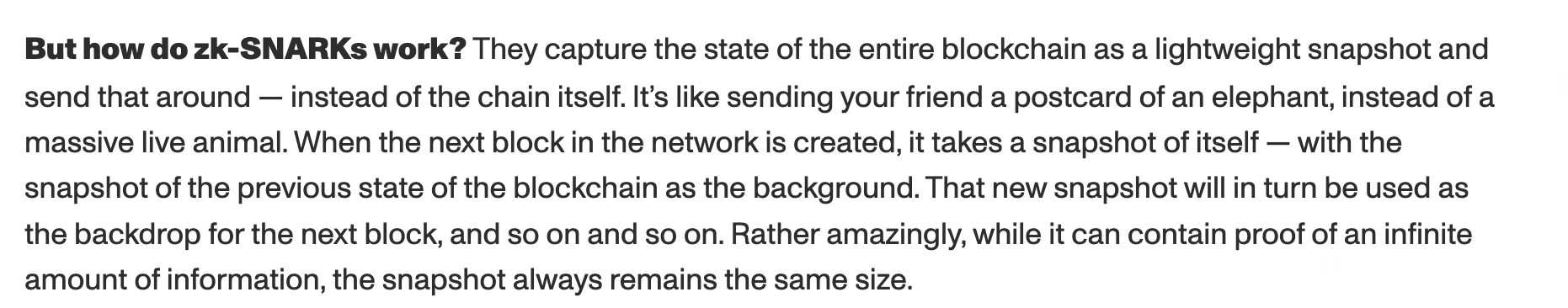

| A more advanced/flexible version of **Savepoints** could efficiently serialize the state into an artifact (a **Snapshot**). This is non-trivial, also because we have multiple data-stores, but definitely possible. It could leverage previous savepoints to store only the changes made since the last one and/or use some version of a WAL (write-ahead log, which records the changes that happened). | ||

|

|

||
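One way to picture "only store the changes since the last savepoint" is an undo log, a simplified in-memory relative of a WAL: every write first records the previous value, a savepoint is just a position in that log, and a rollback replays the log backwards. The sketch below is an assumption-laden illustration (`State`, `Set`, `Savepoint` and `RollbackTo` are hypothetical names, and nothing is persisted to disk).

```go
package main

import (
	"errors"
	"fmt"
)

// entry records what a key held before it was overwritten.
type entry struct {
	key     string
	prev    string
	existed bool
}

// State is a hypothetical key-value state with an in-memory undo log.
type State struct {
	data map[string]string
	log  []entry
}

func NewState() *State { return &State{data: map[string]string{}} }

// Set logs the previous value before applying the write.
func (s *State) Set(k, v string) {
	prev, ok := s.data[k]
	s.log = append(s.log, entry{key: k, prev: prev, existed: ok})
	s.data[k] = v
}

// Savepoint is O(1): it is only the current position in the log.
func (s *State) Savepoint() int { return len(s.log) }

// RollbackTo undoes, in reverse order, every write made after the savepoint.
func (s *State) RollbackTo(sp int) error {
	if sp < 0 || sp > len(s.log) {
		return errors.New("invalid savepoint")
	}
	for i := len(s.log) - 1; i >= sp; i-- {
		e := s.log[i]
		if e.existed {
			s.data[e.key] = e.prev
		} else {
			delete(s.data, e.key)
		}
	}
	s.log = s.log[:sp]
	return nil
}

func main() {
	s := NewState()
	s.Set("balance", "10")
	sp := s.Savepoint()
	s.Set("balance", "7")
	s.Set("nonce", "1")
	fmt.Println(s.RollbackTo(sp), s.data)
}
```

The cost of a savepoint here is proportional to the number of changes made after it rather than to the size of the whole state, which is the property the paragraph above is after.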

| **Snapshot** hash verification using the `genesis.json` file and [Mina protocol](https://minaprotocol.com/lightweight-blockchain) | ||

|

|

||

| #### Savepoints | ||

|

|

||

| A savepoint must have the following properties: | ||

|

|

||

| - It must be able to be created at any block height | ||

| - It must be self-contained and/or easy to move around (e.g. a file/archive) | ||

| - The action of creating a savepoint must be atomic (i.e. it must be impossible to create a savepoint that is incomplete) | ||

| - The action of creating a savepoint must be easy to perform (e.g. a CLI command and/or a flag being passed to the node binary when starting it) | ||

| - The action of creating a savepoint must be as fast as possible | ||

| - The operator should be informed with meaningful messages about the progress of the savepoint creation process (telemetry, logging and stdout come to mind) | ||

| - It should be, as much as possible, compact (i.e. zipped) to reduce the size and cost of disseminating snapshots | ||

| - A reduction in the snapshot size should be prioritized over its compression speed since it is an infrequent event | ||

| - It must have some form of integrity check mechanism (e.g. checksum/hash verification and even a signature that could be very useful in the case of a social rollback) | ||

|

|

||

| #### Rollbacks | ||

|

|

||

| The following are some of the properties that a rollback mechanism must have: | ||

|

|

||

| - If the operator requests a rollback, the node must be able to roll back to the requested block height regardless of its internal state, provided that a valid savepoint is available | ||

| - The rollback process must be atomic (i.e. it must be impossible to roll back to a block height that has incomplete/invalid state) | ||

| - The rollback process must be easy to perform (e.g. a CLI command and/or a flag being passed to the node binary when starting it) | ||

| - The rollback process must be as fast as possible | ||

| - The operator should be informed in a meaningful way about the progress of the rollback process (telemetry, logging and stdout come to mind) | ||

|

|

||

|

|

||

| ### Further improvements | ||

|

|

||

| - Savepoints could be disseminated/retrieved using other networks like Tor/IPFS/etc. This would free up bandwidth on the main network and could be used in conjunction with `FastSync` to speed up the process of bootstrapping a new node without overwhelming the `P2P` network with a lot of traffic that is not `Protocol`-related. This could be very important when the network reaches critical mass in terms of scale. Hopefully soon. | ||

|

Collaborator

I see, you really do look at savepoints as snapshots. Do you see the solution for a node-specific savepoint (i.e. I updated one tree but not another and crashed) and a network wide (i.e. a data dir snapshot) as having the same solution?

Contributor

Author

I think it can very much build on top of what I have in mind. Similarly to what databases do, we'd need some It can very much rely on the statehash I guess... How? KISS, back of the envelope implementation:

Collaborator

This is what "weak subjectivity" is. From Vitalik's 2014 article

I think there are implementation nuances here, but I think you've got it. I think it's worth adding (in the moonshot section) that we can potentially use Mina to verify the snapshot hash using only the latest state and the

Contributor

Author

Music to my ears. I am going to read that article tonight. Thanks for sharing. 🙏

Added |

||

|

|

||

| For example: a fresh node could look for the latest available `Savepoint` signed by PNI, download it from Tor, apply its state and resume the normal sync process from the other nodes from there. | ||

|

Collaborator

For example, Gohkan and I were talking about this yesterday: https://olshansky.medium.com/cryptocurrencies-its-all-about-incentive-77ac47a6adc4 We got the idea of having SuperValidators (i.e. higher stake, higher penalty, limited in number) that could help with bootstrapping, and also make the formal 2/3 agreement more flexible. Could be a HotPOKT innovation ;)

Contributor

Author

Yeah, makes sense. Perhaps disseminating snapshots via Tor or other medium is something that I'd start doing as PNI (for example), then I'd look into offloading the responsibility to the protocol itself by creating the necessary incentives but first of all I'd like to see it working like a charm. Since snapshots/rollback could also be seen as a "disaster recovery" tool, I'd advise using something that's decoupled from Pocket, or at least that can be interacted with in other ways in the case of complete network halt. I don't know why but I am thinking about Solana now :)

Collaborator

Maybe add a "FiremanBootstrapper" or "Node911" as a moonshot idea :)

Contributor

Author

LOL, I'll leave that to you ;)

Collaborator

Maybe even an IPFS hash that's stored directly in the block header |

||

|

|

||

| - Building on top of the latter, when it comes to socially-coordinated rollbacks, the DAO/PNI could designate a `Savepoint` as the one that node-operators should roll back to by advertising it somewhere. This could be done in several ways; using alternative decentralized networks/protocols (IPFS, Ethereum smart-contract interaction, etc.) is preferable for increased resiliency. This would allow node-operators to be notified, even programmatically, without having to rely on `Discord` (which is not decentralized anyway...) or other means of coordination in order to "get the news" about the latest `Savepoint` that they should roll back to. | ||


|

||

|

|

||

| ## Random thoughts | ||

|

|

||

| - I wonder if serialized, compressed and signed `Merkle Patricia Trie`s could be leveraged as a medium for storing `Savepoint`s in a space-efficient and "blockchain-native" way 🤔. | ||

|

Collaborator

Note that we're not using Ethereum's Merkle Patricia Trie, but a modified version of Libra's Jellyfish Merkle Tree implemented by Celestia. Because we're using Trees, the root has a hash, and that hash is easily verifiable. If a node is synching from scratch, it's easy to verify the state transitions are correct. If a node is not synching from scratch, it needs to trust someone else. Mina protocol (which I don't really know) has a special property that if you have the genesis parameters (i.e. the json config) and ANY height, you can immediately (in O(1)) verify that it is correct. Might be worth looking into. https://olshansky.substack.com/p/5p1r-ethereums-modified-merkle-patricia

Contributor

Author

Yeah, I was reading about Ethereum and I had a Freudian slip with Patricia, luckily Celestia is not jealous.... bad joke 😝 Noted. |

||

|

|

||
