Make block time catchup proportional to drift #1557


A couple other considerations / questions:


0b3df09 to d3aa621

@PaulVMo I really believe that requiring at least 50K blocks is the key to solving this problem. While it may lead to larger snapshots, it is very easy to check (block hash in the consensus group) as a pre-BBA evaluation. Lastly, it will guarantee a longer period of multiple sources in the network, improving its resilience.


Thanks @tconrado. Requiring 50K blocks is a fairly hard change to enforce. It also adds a lot of time to syncing a new validator instance. We recently had a discussion with Evan from the core dev team and came up with a more robust solution. We plan to have each consensus group member include its calculated target time for the next block in the block metadata. Each validator can then select the median of the targets in the metadata when setting its block timer. This gives a deterministic way to arrive at the target block time and will hopefully avoid uncontrollable penalties. We may still include this change for calculating the target time, as it should also lead to somewhat smoother block times.
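To make the median idea concrete, here is a minimal Python sketch (not the miner's actual code). The function name `select_target_block_time` and the plain list of per-member targets are hypothetical. Using `statistics.median_low` is also an assumption: it always returns one of the submitted values, even when the number of targets is even, so every validator reading the same metadata arrives at the same result.

```python
import statistics


def select_target_block_time(targets):
    """Pick the block-time target (in seconds) from the per-member targets.

    `targets` stands in for the values each consensus group member would
    put in the block metadata (hypothetical representation).
    """
    if not targets:
        raise ValueError("no targets in block metadata")
    # median_low returns an actual member of the data, so the result does
    # not depend on averaging two middle values.
    return statistics.median_low(targets)


# Two members with a short history compute 50s and 51s; the rest agree on ~60s.
print(select_target_block_time([60, 51, 59, 60, 50]))
```

A single outlier, or a minority of outliers, cannot move the selected target away from the range reported by the majority, which is the property the comment above is relying on.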

Goal: Reduce the variability of block times.

We have observed a few issues with block times and validators. First, it seems that many validators struggle to keep up with extremely fast block times (i.e. 50s). By ramping up the catchup time more gradually, we can hopefully avoid the need for 50s blocks and thus the performance hit to validators that comes with them.

Second, because many validators do not store at least 50K blocks of history, calculations of the target block time vary between validators. Currently, this variability results in 9 or 10 second discrepancies between validators, which may be causing missed BBAs for validators that assume the block time should be longer than the majority does. With more steps in the catchup ramp, we will hopefully see smaller differences between validators when discrepancies do occur, and thus fewer missed BBAs.

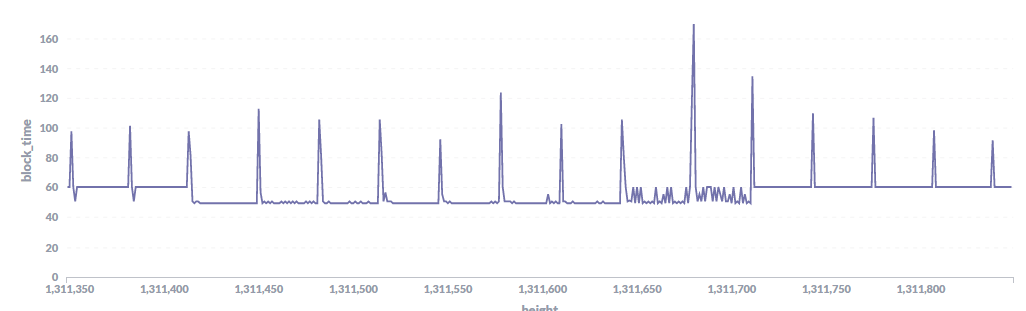

Lastly, we see large oscillations when the accumulated drift is close to the current threshold of 0.01 seconds (i.e. block times flipping back and forth between 60 or 61 seconds and 50 seconds). Ramping the catchup amount up and down proportionally will at minimum reduce the amplitude of such oscillations, and possibly their frequency as well.

Example of block time oscillations

Proposal: Adjust the catchup time function used to calculate block time adjustments so that it is proportional to the amount of drift. Currently, the adjustment jumps from 1 second to 10 seconds once drift over the last 50K blocks exceeds the threshold of 0.01 seconds. This approach instead steps the adjustment up in 1 second increments from 1 to 10, proportional to the amount of drift. For example, 0.01 seconds of drift results in 1 second of catchup (as with the current approach), 0.02 seconds of drift results in 2 seconds of catchup, 0.03 seconds results in 3 seconds, and so on up to the maximum of 10 seconds of block time adjustment.
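The difference between the two curves can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the code in this PR: the names `legacy_catchup` and `proportional_catchup` are hypothetical, drift is taken as a non-negative number of seconds, and drift below 0.01 seconds is assumed to keep the 1 second adjustment.

```python
import math

DRIFT_STEP_SECONDS = 0.01   # threshold and step size taken from the description
MIN_CATCHUP_SECONDS = 1
MAX_CATCHUP_SECONDS = 10


def legacy_catchup(drift):
    """Current behaviour as described: 1s up to the threshold, 10s above it."""
    return MAX_CATCHUP_SECONDS if drift > DRIFT_STEP_SECONDS else MIN_CATCHUP_SECONDS


def proportional_catchup(drift):
    """Proposed behaviour: one extra second of catchup per 0.01s of drift."""
    if drift < 0:
        # Negative drift is outside what the description covers (assumption).
        raise ValueError("drift must be non-negative")
    # Round before flooring so float artifacts such as
    # 0.03 / 0.01 == 2.9999999999999996 do not lose a whole step.
    steps = math.floor(round(drift / DRIFT_STEP_SECONDS, 9))
    return max(MIN_CATCHUP_SECONDS, min(MAX_CATCHUP_SECONDS, steps))


for drift in (0.005, 0.01, 0.011, 0.02, 0.03, 0.10, 0.50):
    print(drift, legacy_catchup(drift), proportional_catchup(drift))
```

Just above the threshold (0.011 seconds of drift in the loop above) the legacy curve already asks for the full 10 seconds, while the proportional curve stays at 1 second. That gap is where the 60/50 second flipping described earlier comes from.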