Optimization of index_select op backward #32955

Merged

Xreki merged 30 commits into PaddlePaddle:develop on Jul 20, 2021

Conversation

Thanks for your contribution!

Sorry to inform you that the CIs for d121f02 passed more than 7 days ago. To prevent PR conflicts, you need to re-run all CIs manually.

JamesLim-sy

reviewed

Jun 9, 2021

| @@ -11,47 +11,50 @@ | |||

| // WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| // See the License for the specific language governing permissions and | |||

| // limitations under the License. | |||

Contributor

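In the Comment area of the Conversation tab, please describe the purpose of this PR, along with information such as how performance changed before and after the PR.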

Xreki

reviewed

Jul 5, 2021

| using Tensor = framework::Tensor; | ||

| using LoDTensor = framework::LoDTensor; | ||

| using DDim = framework::DDim; | ||

|

|

| auto input_dim_size = input_dim.size(); | ||

| auto output_dim = x_grad->dims(); | ||

| std::vector<T> out_vec(x_grad->numel(), 0); | ||

| std::memset(out_data, 0.0, x_grad->numel() * sizeof(T)); |

Contributor

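You could use SetConstant here. Also, would it be more cache-friendly to move the zero-initialization into the for loop after line 196, so that only one part is initialized at a time?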
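One way to read this suggestion is to zero each outer slice of the gradient immediately before accumulating into it, so that the memory just cleared is likely still in cache when it is written again. The following is a minimal standalone sketch under that reading, assuming a contiguous `[outer, dim_size, inner]` layout. `IndexSelectGradSketch` and all of its parameters are hypothetical names for illustration, not Paddle's kernel or API; inside Paddle the zero fill would presumably go through the SetConstant functor the reviewer mentions.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical standalone sketch, not Paddle's kernel.
// out_grad has shape [outer, index.size(), inner];
// x_grad   has shape [outer, dim_size,     inner].
template <typename T, typename IndexT = int>
void IndexSelectGradSketch(const std::vector<T>& out_grad,
                           const std::vector<IndexT>& index,
                           std::int64_t outer, std::int64_t dim_size,
                           std::int64_t inner, std::vector<T>* x_grad) {
  const std::int64_t index_size = static_cast<std::int64_t>(index.size());
  assert(static_cast<std::int64_t>(out_grad.size()) ==
         outer * index_size * inner);
  x_grad->resize(outer * dim_size * inner);
  for (std::int64_t i = 0; i < outer; ++i) {
    T* x_slice = x_grad->data() + i * dim_size * inner;
    // Zero only the slice that is about to be accumulated into,
    // instead of clearing the whole buffer up front.
    std::fill(x_slice, x_slice + dim_size * inner, T(0));
    const T* g_slice = out_grad.data() + i * index_size * inner;
    for (std::int64_t j = 0; j < index_size; ++j) {
      assert(index[j] >= 0 && index[j] < dim_size);
      T* dst = x_slice + index[j] * inner;
      const T* src = g_slice + j * inner;
      // Repeated indices accumulate into the same destination row.
      for (std::int64_t k = 0; k < inner; ++k) dst[k] += src[k];
    }
  }
}
```

Whether the per-slice fill is actually faster than a single up-front fill depends on slice size and cache capacity, so it would need to be measured rather than assumed.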

JamesLim-sy

reviewed

Jul 5, 2021

| }; | ||

|

|

||

| template <typename T> | ||

| struct IndexSelectAdd< |

Contributor

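This functor does not seem to add much. Apart from the floating-point case, it appears that the generic form below is what gets used.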

    template <typename platform::cpu_isa_t isa, typename T, class Enable = void>
    struct IndexSelectAdd {
      void operator()(int n, const T* src, T* dst) {
        for (int i = 0; i < n; i++) {
          dst[i] += src[i];
        }
      }
    };
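For readers unfamiliar with the pattern under discussion, here is a minimal standalone sketch of a generic functor plus a floating-point specialization selected through the `Enable` template parameter. The name `AddInto` and the `kSpecialized` flag are hypothetical and exist only for illustration; the sketch drops the `platform::cpu_isa_t` parameter, and the specialization body is a plain loop standing in for whatever vectorized routine the real code calls.

```cpp
#include <cassert>
#include <type_traits>

// Generic fallback: element-wise accumulation dst[i] += src[i].
template <typename T, class Enable = void>
struct AddInto {
  static constexpr bool kSpecialized = false;
  void operator()(int n, const T* src, T* dst) const {
    assert(n >= 0);
    for (int i = 0; i < n; ++i) dst[i] += src[i];
  }
};

// Partial specialization chosen only when T is a floating-point type.
template <typename T>
struct AddInto<
    T, typename std::enable_if<std::is_floating_point<T>::value>::type> {
  static constexpr bool kSpecialized = true;
  void operator()(int n, const T* src, T* dst) const {
    assert(n >= 0);
    // Placeholder loop: a real kernel would call a vectorized add here.
    for (int i = 0; i < n; ++i) dst[i] += src[i];
  }
};
```

With this layout, `AddInto<int>` resolves to the generic form and `AddInto<double>` to the specialization. If both bodies end up equivalent, as in this sketch, the specialization carries no benefit, which is the concern the comment raises.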

JamesLim-sy

reviewed

Jul 14, 2021

| @@ -194,8 +220,8 @@ class IndexSelectGradKernel : public framework::OpKernel<T> { | |||

| if (dim < 0) { | |||

Contributor

Lines 212-219 can be changed to:

    auto *x_grad = ctx.Input<framework::LoDTensor>("X");
    auto *index = ctx.Input<framework::LoDTensor>("Index");
    auto *out_grad = ctx.Output<framework::LoDTensor>("Out");

| }; | ||

|

|

||

| template <typename T, typename IndexT = int> | ||

| #if ((!defined __NVCC__) && (!defined __HIPCC__)) |

JamesLim-sy

reviewed

Jul 14, 2021

| void operator()(const framework::ExecutionContext& ctx, int slice_size, | ||

| const T* src_pointer, const T* p_pointer, T* dist_pointer) { | ||

| auto blas = math::GetBlas<DeviceContext, T>(ctx); | ||

| blas.VADD(slice_size, src_pointer, p_pointer, dist_pointer); |

Contributor

When using blas here, it would be worth measuring the speedup under different OMP settings.
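A measurement along these lines could be set up roughly as follows. This is only a sketch under stated assumptions: `VAddSketch` is a hypothetical stand-in that mirrors the argument order of the `VADD` call quoted above (`z = x + y`) but is not the blas path itself, and `TimeVAdd` is a hypothetical helper. The OpenMP calls are guarded so the code also builds without OpenMP, in which case the thread count is ignored.

```cpp
#include <cassert>
#include <chrono>
#include <vector>
#ifdef _OPENMP
#include <omp.h>
#endif

// Stand-in for an element-wise vector add: z[i] = x[i] + y[i].
void VAddSketch(int n, const float* x, const float* y, float* z) {
  assert(n >= 0);
#ifdef _OPENMP
#pragma omp parallel for
#endif
  for (int i = 0; i < n; ++i) z[i] = x[i] + y[i];
}

// Returns the average wall-clock seconds per call for a given thread count.
double TimeVAdd(int n, int threads, int repeats) {
  assert(repeats > 0);
#ifdef _OPENMP
  omp_set_num_threads(threads);
#else
  (void)threads;  // Without OpenMP the loop always runs on one thread.
#endif
  std::vector<float> x(n, 1.0f), y(n, 2.0f), z(n, 0.0f);
  const auto start = std::chrono::steady_clock::now();
  for (int r = 0; r < repeats; ++r) {
    VAddSketch(n, x.data(), y.data(), z.data());
  }
  const std::chrono::duration<double> elapsed =
      std::chrono::steady_clock::now() - start;
  assert(n == 0 || z[0] == 3.0f);  // Sanity check on the result.
  return elapsed.count() / repeats;
}
```

The speedup for `t` threads would then be `TimeVAdd(n, 1, r) / TimeVAdd(n, t, r)`, evaluated across the slice sizes that the kernel actually sees, since small slices may not amortize the threading overhead.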

Xreki

approved these changes

Jul 20, 2021

| template <typename DeviceContext, typename T, typename IndexT = int> | ||

| void IndexSelectGradInner(const framework::ExecutionContext& context, | ||

| const LoDTensor& out_grad, const LoDTensor& index, | ||

| const LoDTensor* out_grad, const LoDTensor* index, |

Contributor

Do not change the parameter types. Inputs that are not modified should use the const Tensor& type.
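The convention being asked for, read-only inputs passed by const reference and outputs passed by pointer, can be illustrated with a small standalone sketch. `TensorSketch` and `ScaleSketch` are hypothetical names used only here and are unrelated to Paddle's `Tensor` class.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a tensor type.
struct TensorSketch {
  std::vector<float> data;
};

// The input is never modified, so it is taken by const reference;
// the output is written to, so it is taken by pointer.
void ScaleSketch(const TensorSketch& input, float factor,
                 TensorSketch* output) {
  assert(output != nullptr);
  output->data.resize(input.data.size());
  for (std::size_t i = 0; i < input.data.size(); ++i) {
    output->data[i] = input.data[i] * factor;
  }
}
```

At the call site, `ScaleSketch(in, 2.0f, &out)` makes it visible which argument may be mutated, which is the usual motivation for this style.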

PR types

Performance optimization

PR changes

OPs

Describe

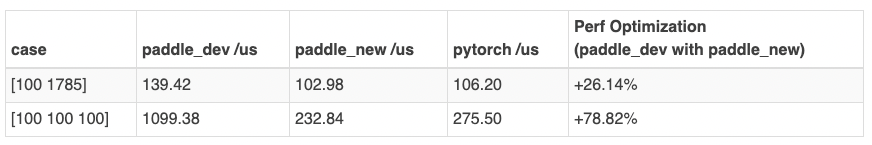

Optimization of index_select op backward, the optimized data as follows:

Compared with the origin and pytorch, optimization measures have been improved.