
Add batch_norm and layer_norm XPU kernels #27818


test=develop, test=kunlun

Thanks for your contribution!

@QingshuChen Could you please help review this?


```python
def test_check_grad(self):
    self.check_grad_with_place(
        paddle.XPUPlace(0), ['X'], 'Y', max_relative_error=0.02)
```


```python
pass
```


```python
def test_check_output(self):
    self.check_output_with_place(paddle.XPUPlace(0), atol=1e-4)
```


```python
for id, name in enumerate(fetch_list):
    self.assertEqual(
        np.allclose(
            outputs[name], outs[id], atol=1e-4), True)
```
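The assertion above relies on NumPy's `np.allclose`, which passes when every element satisfies `|a - b| <= atol + rtol * |b|` (defaults `rtol=1e-05`, `atol=1e-08`). The short sketch below uses made-up values chosen for illustration, not data from the XPU tests, to show why a device result that is off by about 5e-5 fails the default tolerance but passes once `atol` is raised to 1e-4. `allclose_manual` is a hypothetical helper that re-derives the same criterion.

```python
import numpy as np

# np.allclose(a, b, rtol=1e-05, atol=1e-08) passes when, elementwise,
#   |a - b| <= atol + rtol * |b|
# The test above raises atol from the 1e-08 default to 1e-4.

def allclose_manual(a, b, rtol=1e-05, atol=1e-08):
    """Re-derive np.allclose's elementwise criterion (ignoring NaN handling)."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return bool(np.all(np.abs(a - b) <= atol + rtol * np.abs(b)))

reference = np.array([0.5, -1.25, 2.0], dtype=np.float32)
# Hypothetical device output that differs from the reference by ~5e-5.
device_out = reference + np.float32(5e-5)

print(np.allclose(reference, device_out))             # default atol
print(np.allclose(reference, device_out, atol=1e-4))  # relaxed atol
print(allclose_manual(reference, device_out, atol=1e-4))
```

Because `rtol * |b|` is only about 1e-5 to 2e-5 for these magnitudes, the absolute term `atol` dominates, which is why relaxing `atol` alone is enough here.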


QingshuChen left a comment


LGTM


```python
def test_check_grad(self):
    self.check_grad_with_place(
        paddle.XPUPlace(0), ['X'], 'Y', max_relative_error=0.02)
```


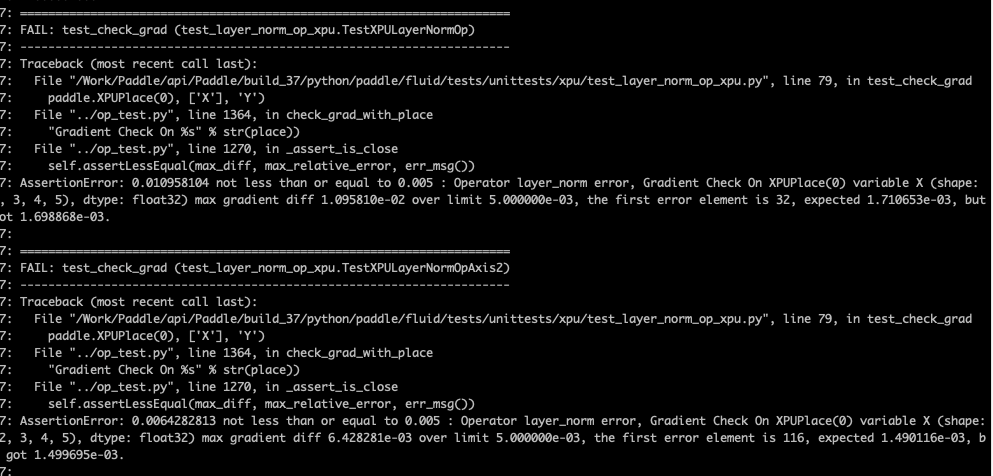

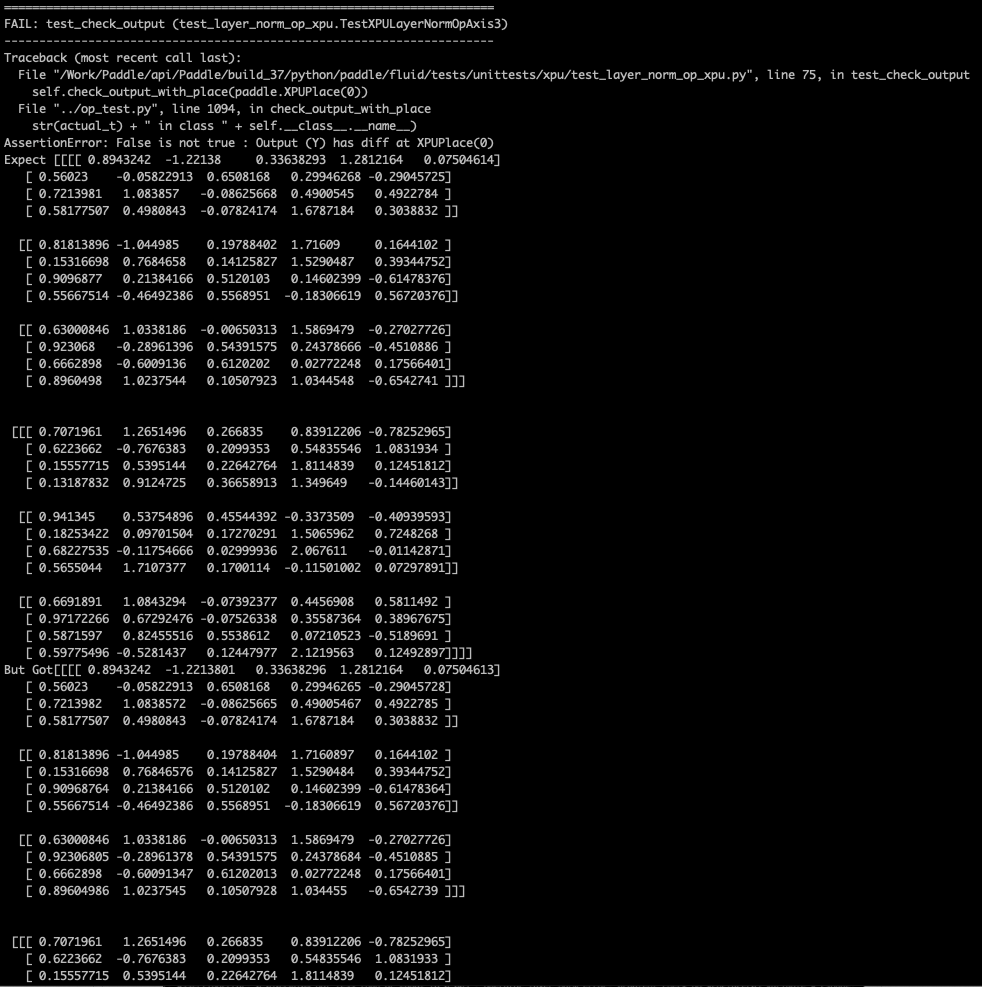

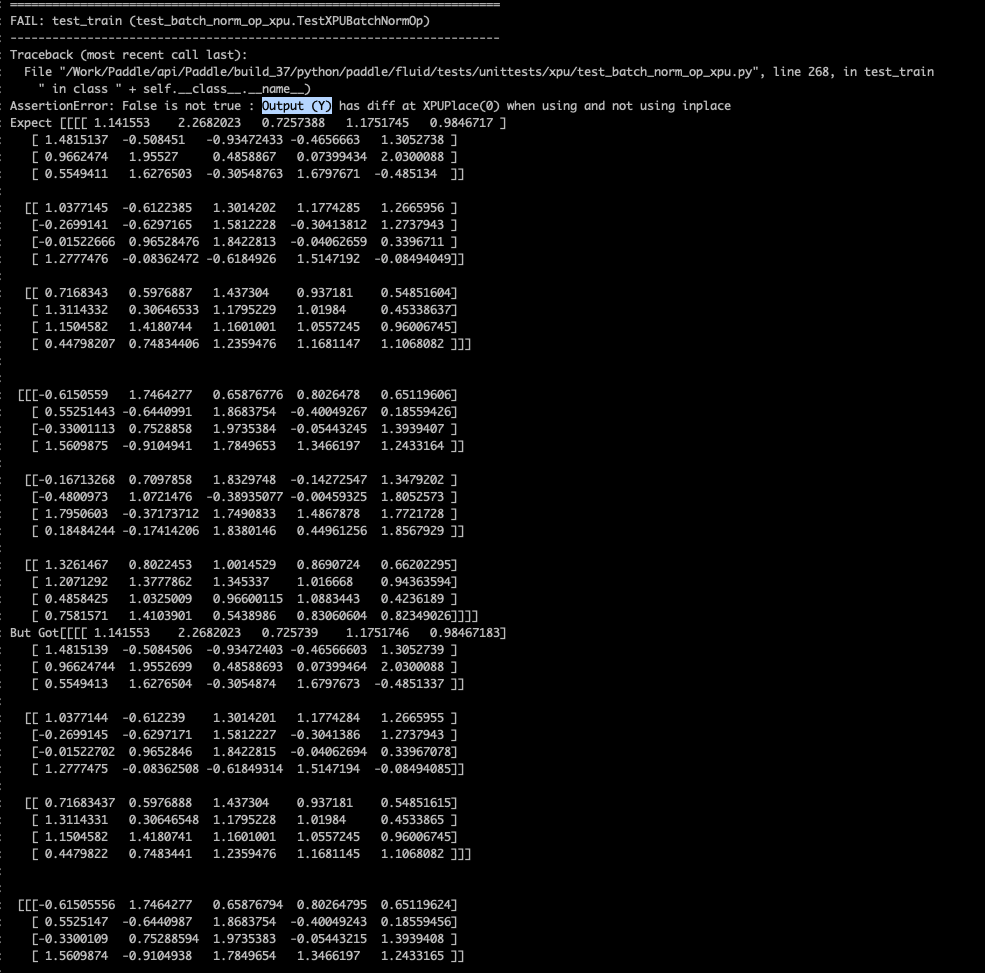

Can the default value be used here?


No, it exceeds the default value of 0.005. See the screenshots in my comments for details.
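For context, a gradient check of this kind compares the gradient produced by the kernel against a finite-difference estimate and fails when the largest relative deviation exceeds `max_relative_error`. The sketch below assumes that general approach only; the helper names, the 1e-3 denominator floor, and the 1% error injected into the "kernel" gradient are hypothetical, and this is neither Paddle's OpTest implementation nor measured XPU data. It shows how a 1% relative error fails the 0.005 default yet passes the 0.02 threshold used in this PR.

```python
import numpy as np

def max_relative_error(actual, expected, floor=1e-3):
    """Largest elementwise |actual - expected| / |expected|.

    Reference magnitudes below `floor` are replaced by 1.0 so near-zero
    entries are compared absolutely (an assumed convention for this sketch).
    """
    actual = np.asarray(actual, dtype=np.float64)
    expected = np.asarray(expected, dtype=np.float64)
    denom = np.abs(expected)
    denom[denom < floor] = 1.0
    return float(np.max(np.abs(actual - expected) / denom))

def numeric_grad(f, x, delta=1e-3):
    """Central finite-difference gradient of a scalar function f at x."""
    x = np.asarray(x, dtype=np.float64)
    grad = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        step = np.zeros_like(x)
        step[idx] = delta
        grad[idx] = (f(x + step) - f(x - step)) / (2 * delta)
    return grad

def loss(x):
    # Toy scalar loss whose exact gradient is 3 * x**2.
    return float(np.sum(x ** 3))

x = np.array([0.5, -1.0, 2.0])
numeric = numeric_grad(loss, x)
# Hypothetical kernel gradient carrying a 1% relative error.
kernel = 3 * x ** 2 * 1.01

err = max_relative_error(kernel, numeric)
print(f"max relative error = {err:.4f}")
print("passes default 0.005:", err <= 0.005)
print("passes relaxed 0.02:", err <= 0.02)
```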

Xreki left a comment


LGTM for the change of atol and max_relative_error.

PR types

New features

PR changes

OPs

Describe

Support batch_norm and layer_norm on Baidu Kunlun.
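As a point of reference for what the two ops compute, here is a minimal NumPy sketch assuming the standard definitions: layer_norm normalizes each sample over its trailing axes, and batch_norm (training mode, NCHW layout) normalizes each channel over the batch and spatial axes. The function names, the `begin_axis` parameter, and the `eps=1e-5` default are illustrative assumptions; this is not the Kunlun kernel code.

```python
import numpy as np

def layer_norm_ref(x, scale, bias, begin_axis=1, eps=1e-5):
    """Normalize over all axes from `begin_axis` onward, then scale/shift.

    `scale` and `bias` are flat arrays with prod(x.shape[begin_axis:]) entries.
    """
    lead = int(np.prod(x.shape[:begin_axis]))
    x2 = x.reshape(lead, -1)
    mean = x2.mean(axis=1, keepdims=True)
    var = x2.var(axis=1, keepdims=True)  # biased (population) variance
    y = (x2 - mean) / np.sqrt(var + eps)
    return (y * scale + bias).reshape(x.shape)

def batch_norm_train_ref(x, scale, bias, eps=1e-5):
    """Per-channel normalization of an NCHW tensor using batch statistics."""
    mean = x.mean(axis=(0, 2, 3), keepdims=True)
    var = x.var(axis=(0, 2, 3), keepdims=True)  # biased (population) variance
    y = (x - mean) / np.sqrt(var + eps)
    return y * scale.reshape(1, -1, 1, 1) + bias.reshape(1, -1, 1, 1)

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 3, 4, 5)).astype(np.float32)

ln = layer_norm_ref(x, np.ones(60, np.float32), np.zeros(60, np.float32))
bn = batch_norm_train_ref(x, np.ones(3, np.float32), np.zeros(3, np.float32))

# With unit scale and zero bias, each normalized group has mean ~0, var ~1.
print(np.abs(ln.reshape(2, -1).mean(axis=1)).max())
print(np.abs(bn.mean(axis=(0, 2, 3))).max())
```

At inference time, batch_norm would normalize with the running mean and variance rather than the batch statistics computed here; a reference like this is the kind of CPU baseline the relaxed `atol` and `max_relative_error` tolerances are measured against.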