[Dy2stat] Support InputSpec and Return callable class instance in @declarative #25960

Merged

Aurelius84 merged 42 commits into PaddlePaddle:develop on Aug 27, 2020


chenwhql previously approved these changes on Aug 27, 2020

liym27 previously approved these changes on Aug 27, 2020

lanxianghit previously approved these changes on Aug 27, 2020

Commit e339095

liym27 approved these changes on Aug 27, 2020

chenwhql approved these changes on Aug 27, 2020

lanxianghit approved these changes on Aug 27, 2020

raindrops2sea approved these changes on Aug 27, 2020


PR types

New features

PR changes

APIs

Describe

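- @declarative is upgraded to return a callable class object.
- The InputSpec concept is introduced, and input_spec can be set to drive dynamic-to-static conversion.

What's New?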

1. @declarative returns a callable class object

1.1 Background

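In previous versions, decorating a function with @declarative only returned another callable function. Its basic logic is to receive the *args and **kwargs passed in, execute the internally cached program, and return the result. This approach covers most scenarios, but it has some limitations: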

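- @declarative cannot accept any other arguments, so the interface is hard to extend.
- It returns an __impl__ function rather than a class object, so it cannot cleanly expose interfaces for attribute access.
- Getting the code or program of a decorated function has to go through ProgramTranslator, which adds the cost of learning another framework concept.

Therefore, this PR upgrades the @declarative interface so that it returns a class object.

1.2 Using the new features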

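Attributes can now be accessed directly on the decorated function foo: the code and program information of the decorated function are obtained without depending on ProgramTranslator, which makes the interface simpler and easier to use. In addition, each function holds its own ProgramCache, so the caches of different functions do not interfere with each other.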
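To illustrate why returning a class instance helps, here is a minimal pure-Python sketch that assumes nothing about Paddle internals. `StaticFunction` and `declarative` below are hypothetical stand-ins, not Paddle's actual classes: the returned object keeps the original function as an attribute, owns a private per-function cache, and exposes that cache through a property. The "program" stored in the cache is only a placeholder string, and the cache key (type names of the positional arguments) is an assumption made for the example.

```python
import functools


class StaticFunction:
    """Hypothetical stand-in for the callable object returned by @declarative.

    It only mimics the structure: the wrapped function is kept as an
    attribute and each instance owns its own cache. No real Program is built.
    """

    def __init__(self, function):
        self.dygraph_function = function
        self._program_cache = {}  # per-function cache, keyed by input signature
        # Copy __name__, __doc__, etc. so the object still looks like `function`.
        functools.update_wrapper(self, function)

    @staticmethod
    def _signature(args):
        # Toy cache key (assumption): the type name of each positional argument.
        return tuple(type(a).__name__ for a in args)

    def __call__(self, *args):
        key = self._signature(args)
        if key not in self._program_cache:
            # Placeholder for "convert the function into a static program".
            self._program_cache[key] = f"program<{self.__name__}:{','.join(key)}>"
        # A real implementation would run the cached program; this toy just
        # calls the original Python function.
        return self.dygraph_function(*args)

    @property
    def program_cache(self):
        # Attribute-style access, which a bare closure such as __impl__ lacks.
        return dict(self._program_cache)


def declarative(function):
    # Hypothetical decorator: return a class instance instead of a closure.
    return StaticFunction(function)


@declarative
def foo(x, y):
    return x + y


@declarative
def bar(x):
    return x * 2


print(foo(1, 2))          # 3
print(foo.program_cache)  # {('int', 'int'): 'program<foo:int,int>'}
print(bar.program_cache)  # {} because bar has not been called yet
```

Because each decorated function is a separate instance, `foo` and `bar` keep separate caches, mirroring the per-function ProgramCache described above.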

2. New support for InputSpec

2.1 Background

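Previously, the @declarative decorator did not accept any extra arguments. To obtain the converted Program, a forward pass had to be executed explicitly. This approach has the following limitations and usability issues: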

编译式转换此PR新增了

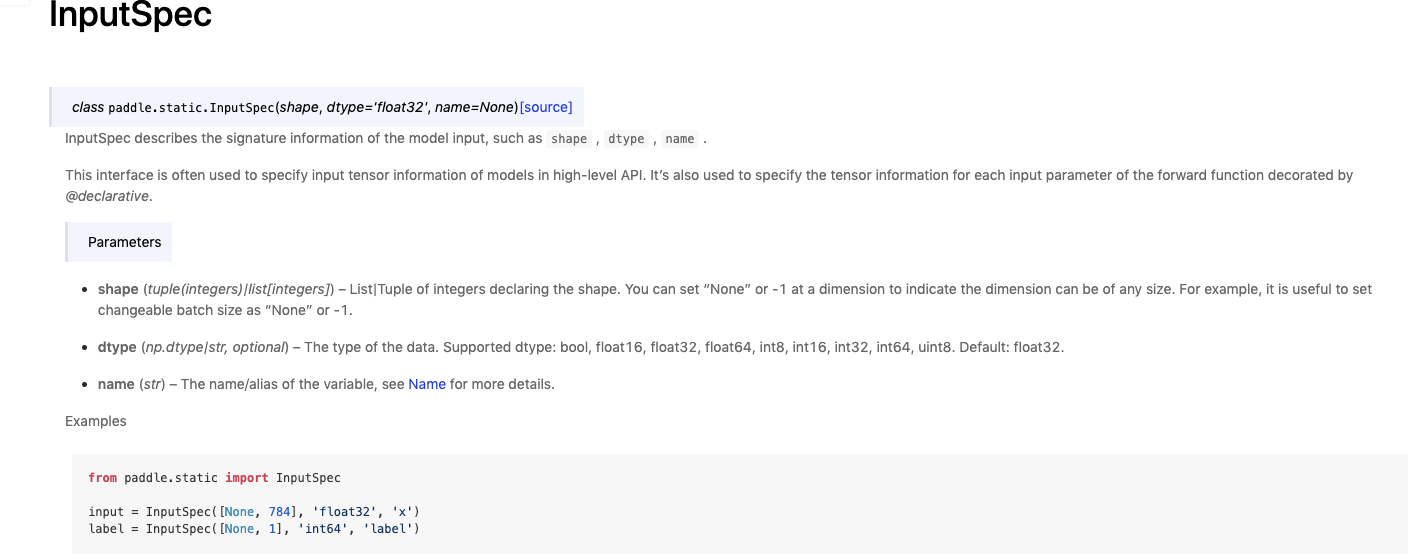

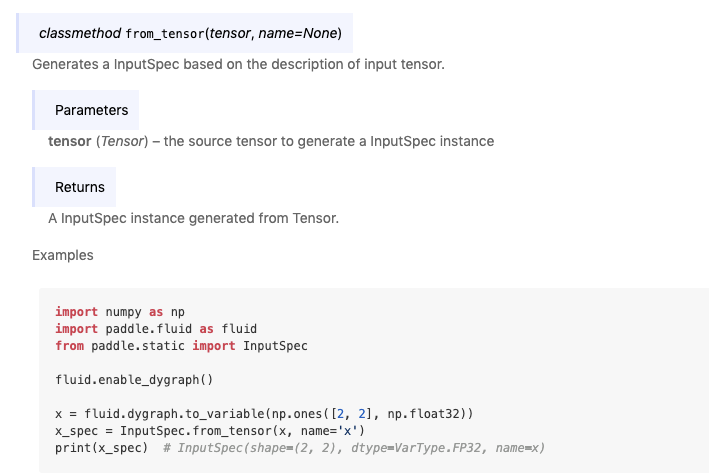

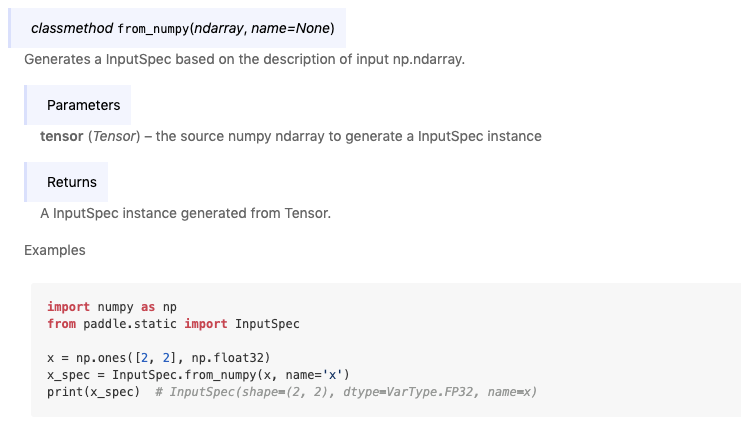

InputSpec类,并重构了@declarative逻辑,支持input_spec参数来指定feed layer的shape、name信息。2.2 InputSpec类

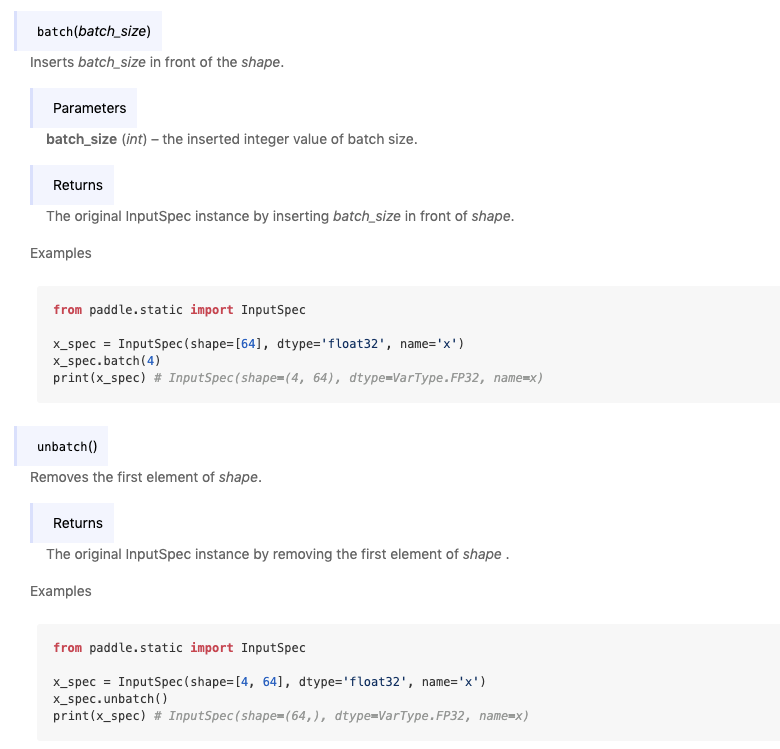
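Conceptually, an InputSpec is a small immutable record of a Tensor's metadata. The sketch below is a hypothetical pure-Python `ToyInputSpec`, not Paddle's `InputSpec`. It assumes that `batch(n)` prepends a dimension of size n and that `unbatch()` removes the leading dimension, which is one plausible reading of the batch and unbatch operations mentioned in this section; `matches` is an invented helper showing how a `None` dimension can stand for any size.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class ToyInputSpec:
    """Hypothetical Tensor metadata record: shape, dtype and name only."""

    shape: Tuple[Optional[int], ...]
    dtype: str = "float32"
    name: Optional[str] = None

    def batch(self, batch_size):
        # Prepend a batch dimension; None means "batch size unknown".
        return replace(self, shape=(batch_size,) + tuple(self.shape))

    def unbatch(self):
        # Drop the leading (batch) dimension.
        if not self.shape:
            raise ValueError("cannot unbatch a spec with an empty shape")
        return replace(self, shape=tuple(self.shape[1:]))

    def matches(self, shape):
        # Invented helper: a None dimension in the spec accepts any size.
        return len(shape) == len(self.shape) and all(
            s is None or s == d for s, d in zip(self.shape, shape)
        )


spec = ToyInputSpec(shape=(784,), name="image")
batched = spec.batch(None)
print(batched.shape)               # (None, 784)
print(batched.matches((32, 784)))  # True
print(batched.unbatch() == spec)   # True
```

Making the record frozen means `batch` and `unbatch` return new specs instead of mutating one that other code may share.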

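InputSpec is similar to the VarDesc concept on the C++ side and represents the meta information of a Tensor: shape, dtype and name. By specifying the InputSpec of each input argument of the decorated function, users allow the subsequent Program to be derived from it. InputSpec also provides batch and unbatch operations.

2.3 @declarative supports input_spec

The @declarative logic is refactored to support the input_spec argument and to allow further feature extensions:
- @declarative no longer forbids extra arguments; new arguments can be added as features require.
- input_spec can set dimensions of a Tensor shape to None.
- Compile-style static conversion that does not depend on fake_input.
- Friendly support for non-Tensor arguments.
- Support for inputs of nested types.

TODO
- Use together with the jit.save interface to improve usability.

Documentation: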