Add conv requantize squash #18754


test=develop

|

@wozna Please add the |

test=develop

fc53c15 to c486c21

|

@wojtuss Please help review this PR. |

|

I will double-check it ASAP. |

paddle/fluid/framework/ir/mkldnn/cpu_quantize_squash_pass_tester.cc


|

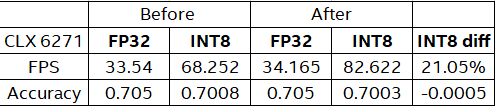

before: after: from 70.08 -> 70.03, machine is |

test=develop

|

Based on LuoTao's benchmark, performance increases from 68.2523 to 82.6219, while accuracy drops only from 0.7008 to 0.7003. I think this PR is good to merge. What do you think, @wojtuss? |

|

I checked it on before after |

|

Could you provide:

Besides, I run with |

|


I ran the test on our CLX6248 with commit 233746d, using this command line:

./paddle/fluid/inference/tests/api/test_analyzer_int8_image_classification --infer_model=third_party/inference_demo/int8v2/googlenet/model --infer_data=/~/.cache/paddle/dataset/int8/download/int8_full_val.bin --batch_size=1 --paddle_num_threads=1

Got the following results.

Same conclusion as luotao. @wozna Wojtek is planning to investigate the server configuration problem and figure out why we got different results. |

|

@luotao1 Please start a review. |

|

Got it. |

This squash improves the accuracy of inference on the GoogLeNet model on ImageNet data.

FP32: avg top1 accuracy: 0.7050

With INT8 and MKL-DNN, accuracy increases from

INT8: avg top1 accuracy: 0.7017

to

INT8: avg top1 accuracy: 0.7022

This is a reopen of #18676
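For readers unfamiliar with the idea, the squash named in the title can be sketched on a toy op list as below. This is an illustration under stated assumptions, not the code in this PR: `Op`, `CountConsumers`, and `SquashConvRequantize` are hypothetical names, each op has a single input and output, and the sketch only rewires the graph and copies the output scale without modeling any INT8 numerics.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical, simplified op record; not Paddle's ir::Node or OpDesc.
struct Op {
  std::string type;    // e.g. "conv2d", "requantize"
  std::string input;   // single input variable name
  std::string output;  // single output variable name
  float scale_in;      // input quantization scale (used by requantize)
  float scale_out;     // output quantization scale
};

// Count how many ops read the given variable.
static int CountConsumers(const std::vector<Op>& ops, const std::string& var) {
  int n = 0;
  for (const Op& op : ops) {
    if (op.input == var) ++n;
  }
  return n;
}

// Fold each conv2d -> requantize pair into the conv2d: the conv takes over the
// requantize op's output variable and output scale, and the requantize op is
// removed. Returns the number of requantize ops removed.
int SquashConvRequantize(std::vector<Op>* ops) {
  int squashed = 0;
  for (std::size_t i = 0; i < ops->size(); ++i) {
    if ((*ops)[i].type != "conv2d") continue;
    // Assumption: the fold is only valid when the requantize op is the sole
    // consumer of the conv output.
    if (CountConsumers(*ops, (*ops)[i].output) != 1) continue;
    for (std::size_t j = 0; j < ops->size(); ++j) {
      if ((*ops)[j].type != "requantize") continue;
      if ((*ops)[j].input != (*ops)[i].output) continue;
      (*ops)[i].scale_out = (*ops)[j].scale_out;
      (*ops)[i].output = (*ops)[j].output;
      ops->erase(ops->begin() + static_cast<std::ptrdiff_t>(j));
      if (j < i) --i;  // keep i pointing at the same conv after the erase
      ++squashed;
      break;
    }
  }
  return squashed;
}
```

Given a list `conv2d -> requantize -> pool2d`, the function leaves `conv2d -> pool2d`, with the conv writing directly to the variable the requantize op used to produce and carrying its output scale. A conv whose output feeds more than one op is left untouched.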

test=develop