refine softmax fwd on CPU #17522

test=develop

@jczaja Do you mean update this PR?

@tensor-tang Just one more question: this code is meant to run for inference only, i.e. it is enabled when PaddlePaddle is built for inference, and it does not include the ValueClip that softmax fwd needs in training. But the performance results shown are from training, which should not contain this optimization?

This is used for training actually.
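
For readers following the ValueClip question above, here is a minimal Python sketch of a softmax forward pass with and without clipping of the shifted logits. It is not the kernel from this PR: `softmax_fwd`, the `clip` flag, and `CLIP_THRESHOLD` (including its value of -64) are assumptions made for illustration only.

```python
import math

# Hypothetical lower bound for the shifted logits (assumed value, illustration only).
CLIP_THRESHOLD = -64.0


def softmax_fwd(row, clip=False):
    """Numerically stable softmax over one row of logits.

    With clip=True the shifted logits are clamped from below, so no output
    underflows to exactly zero (useful when a later step takes a log).
    """
    row_max = max(row)
    # Subtract the row maximum so the largest exponent is exp(0) = 1.
    shifted = [x - row_max for x in row]
    if clip:
        shifted = [max(s, CLIP_THRESHOLD) for s in shifted]
    exps = [math.exp(s) for s in shifted]
    total = sum(exps)
    return [e / total for e in exps]
```

Skipping the clamp saves one pass of work per element, at the cost of allowing exact zeros in the output for very negative logits.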

test=develop

http://ci.paddlepaddle.org/viewLog.html?buildId=101697&buildTypeId=Paddle_PrCi&tab=buildLog&branch_Paddle=pull%2F16684 CI failed on test_parallel_executor_seresnext

Improves softmax fwd by 6X on v2620 v3

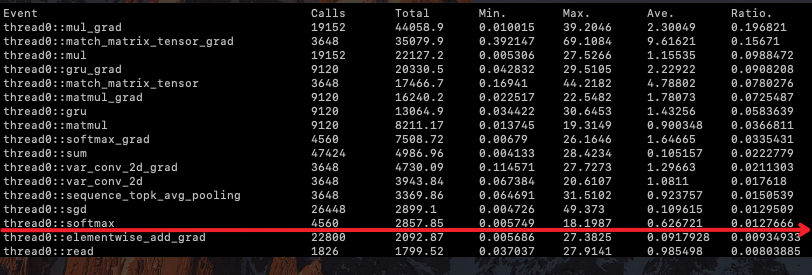

Before

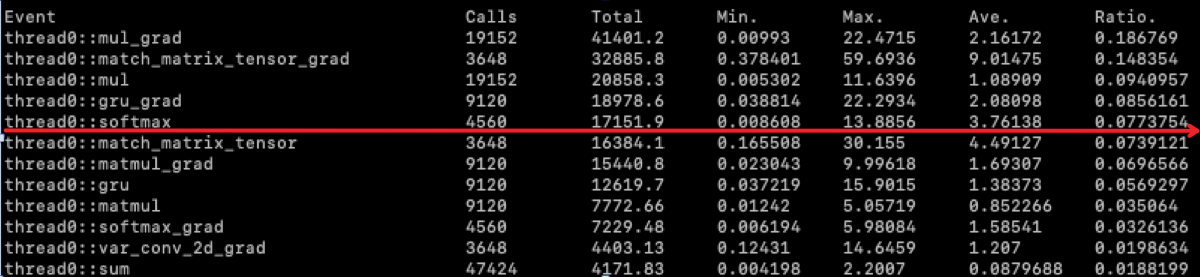

After