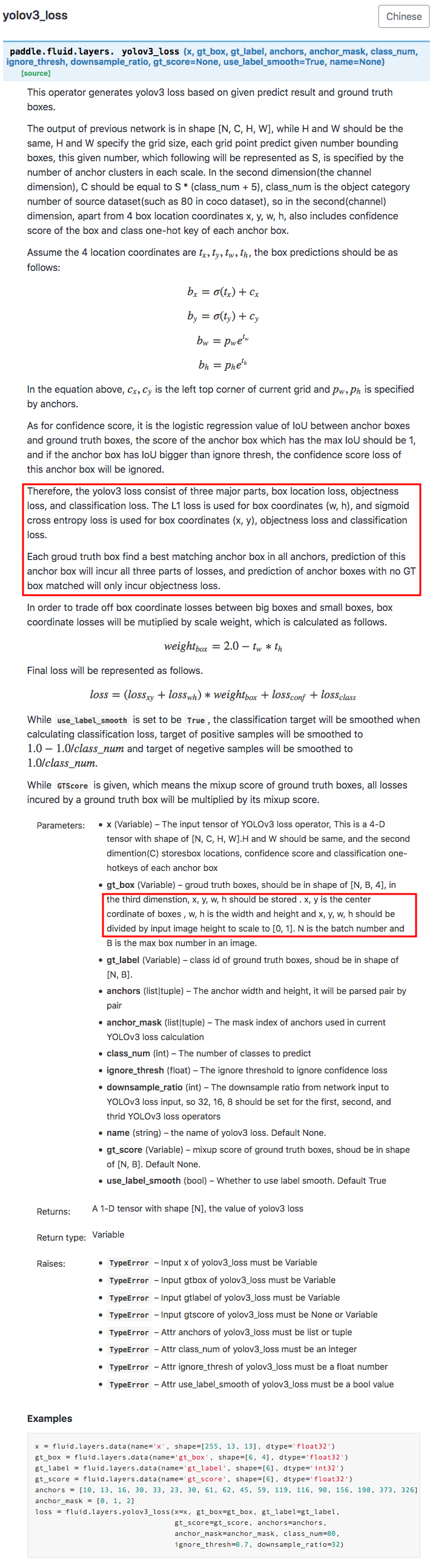

Detailed coordinate description for yolov3 loss #17007

Merged

heavengate merged 6 commits into PaddlePaddle:develop on Apr 26, 2019

test=develop

chengduoZH approved these changes Apr 26, 2019

sneaxiy pushed a commit to sneaxiy/Paddle that referenced this pull request Apr 28, 2019

1. remove ut test_dist_word2vec in mac ci, will fix it in private, test=develop (PaddlePaddle#17066)
2. Fleet unify distributed training (PaddlePaddle#16791)
   * implement distributed transpiler with fleet
3. ParallelDyGraph with GPU collective mode (PaddlePaddle#16827)
   implement dygraph.parallel.DataParallel to hook reduce op.
4. Init mixed precision training interface (PaddlePaddle#16856)
   * Init mixed precision training interface
   * Add fp16 test script test=develop
   * All initializers support float16 test=develop
   * Code cleanup & add more code annotations test=develop
   * Update API spec test=develop
   * Add usage example in doc test=develop
5. fix reference_count_pass,test=develop (PaddlePaddle#17060) test=develop
6. Speedup roi_perspective_transform op by caching the information of linear interpolation in forward (PaddlePaddle#17090)
   * Cache the information of linear interpolation in forward and use it in backward. test=develop
   * Fix cuda kernel. test=develop
7. remove unnecessary prepare_data (PaddlePaddle#17080) test=develop
8. fix interpolate cu. test=develop (PaddlePaddle#17101)
9. test=develop, double backward leaky_relu (PaddlePaddle#17067)
   backward of backward: leaky_relu
10. fix fuse optimizer ops (PaddlePaddle#17102) test=develop
11. truncated_gaussian_random supported in distributed training, test=develop (PaddlePaddle#17091)
12. Detailed coordinate description for yolov3 loss (PaddlePaddle#17007)
    * Detailed coordinate description for yolov3 loss test=develop
    * modified api.spec test=develop
    * modified loss name
    * fix api.spec test=develop
    * polish description test=develop
    * modified api.spec test=develop
13. fix test_weight_decay (PaddlePaddle#17109) test=develop
14. Path flag (PaddlePaddle#17105)
    * fix `python/paddle/fluid/__init__.py` detecting problems

sneaxiy added a commit that referenced this pull request Apr 28, 2019

* refine_dropout_mem,test=develop
* This is a combination of 14 commits.
  1. remove ut test_dist_word2vec in mac ci, will fix it in private, test=develop (#17066)
  2. Fleet unify distributed training (#16791)
     * implement distributed transpiler with fleet
  3. ParallelDyGraph with GPU collective mode (#16827)
     implement dygraph.parallel.DataParallel to hook reduce op.
  4. Init mixed precision training interface (#16856)
     * Init mixed precision training interface
     * Add fp16 test script test=develop
     * All initializers support float16 test=develop
     * Code cleanup & add more code annotations test=develop
     * Update API spec test=develop
     * Add usage example in doc test=develop
  5. fix reference_count_pass,test=develop (#17060) test=develop
  6. Speedup roi_perspective_transform op by caching the information of linear interpolation in forward (#17090)
     * Cache the information of linear interpolation in forward and use it in backward. test=develop
     * Fix cuda kernel. test=develop
  7. remove unnecessary prepare_data (#17080) test=develop
  8. fix interpolate cu. test=develop (#17101)
  9. test=develop, double backward leaky_relu (#17067)
     backward of backward: leaky_relu
  10. fix fuse optimizer ops (#17102) test=develop
  11. truncated_gaussian_random supported in distributed training, test=develop (#17091)
  12. Detailed coordinate description for yolov3 loss (#17007)
      * Detailed coordinate description for yolov3 loss test=develop
      * modified api.spec test=develop
      * modified loss name
      * fix api.spec test=develop
      * polish description test=develop
      * modified api.spec test=develop
  13. fix test_weight_decay (#17109) test=develop
  14. Path flag (#17105)
      * fix `python/paddle/fluid/__init__.py` detecting problems

Preview:

fix #17008