Enhance gc to support deleting tensor buffer in advance#16409

sneaxiy merged 7 commits into PaddlePaddle:develop


dbb80d6 to 134e9a3

refine gc code test=develop

134e9a3 to a93a9ee

test=develop

7b675aa to 493cd06

test=develop

06b1fd6 to adf5e09

fix ctest eager deletion disable bug test=develop

adf5e09 to 7b72c11

7b72c11 to 796cc2c

test=develop

796cc2c to 78fb3a6

    template <typename T>
    struct OpInfoFiller<T, kNoNeedBufferVarsInference> {
      void operator()(const char* op_type, OpInfo* info) const {
        info->infer_no_need_buffer_vars_ = [](const VariableNameMap& inputs,


I'm curious about when these three parameters will be used to get the NoNeedBufferVars. It seems that for now we just return the parameters specified in the macro as an unordered_set?


I reserved these parameters for future use. Some ops may not need certain forward inputs or outputs, depending on whether an attribute is true or false. For example, batch_norm_grad_op does not need Bias when use_mkldnn is false.
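To illustrate the reply above, here is a minimal sketch of an attribute-dependent inference function. It is not the code from this PR: `VariableNameMap` and `AttributeMap` are simplified stand-ins defined locally, and `BatchNormGradNoNeedBufferVars` is a hypothetical name. Only the `Bias` / `use_mkldnn` rule comes from the comment itself.

```cpp
#include <map>
#include <string>
#include <unordered_set>
#include <vector>

// Simplified stand-ins for the framework types (assumption: the real
// definitions in the framework are richer than these).
using VariableNameMap = std::map<std::string, std::vector<std::string>>;
using AttributeMap = std::map<std::string, bool>;

// Hypothetical inference function with the three-parameter shape discussed
// above. It returns the input slots whose data buffer the grad op would not
// read. Only `attrs` is consulted in this sketch.
std::unordered_set<std::string> BatchNormGradNoNeedBufferVars(
    const VariableNameMap& inputs, const VariableNameMap& outputs,
    const AttributeMap& attrs) {
  (void)inputs;   // reserved, unused in this sketch
  (void)outputs;  // reserved, unused in this sketch
  auto it = attrs.find("use_mkldnn");
  const bool use_mkldnn = (it != attrs.end()) && it->second;
  if (use_mkldnn) {
    // Assumed here: with MKL-DNN enabled, the buffer of Bias is still needed.
    return std::unordered_set<std::string>();
  }
  // Per the comment above: Bias is not needed when use_mkldnn is false.
  return std::unordered_set<std::string>{"Bias"};
}
```

With this shape, a macro-declared inference that always returns a fixed set is just the special case that ignores all three arguments.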

    static constexpr OpInfoFillType kFillType = kType;
    };

    using OpRegistryClasses = std::tuple<  // NOLINT


The code here is ugly but scalable. I rewrote the OpInfoFillTypeID::ID() method because lines are limited to 80 characters.
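One way such a tuple-driven lookup could work is sketched below. This is an assumption about the general technique, not the PR's actual code: `FillType`, `TypePair`, `RegistryClasses`, `FillTypeGetter`, and `FillTypeOf` are hypothetical names, and the two base classes are empty placeholders. The idea is that supporting a new kind of registered class only requires appending one entry to the tuple.

```cpp
#include <cstddef>
#include <tuple>
#include <type_traits>

// Hypothetical fill types and base classes, for illustration only.
enum FillType { kOperator = 0, kGradOpDescMaker = 1, kUnknown = -1 };
struct OperatorBase {};
struct GradOpDescMakerBase {};

// Associates a base class with the fill type used for its subclasses.
template <typename BaseT, FillType kType>
struct TypePair {
  using Type = BaseT;
  static constexpr FillType kFillType = kType;
};

// Supporting a new category means adding one line here.
using RegistryClasses =
    std::tuple<TypePair<OperatorBase, kOperator>,
               TypePair<GradOpDescMakerBase, kGradOpDescMaker>>;

constexpr std::size_t kNumClasses = std::tuple_size<RegistryClasses>::value;

template <typename T, std::size_t kPos, bool kIsEnd>
struct FillTypeGetter;

// Past the last tuple entry: no base class matched.
template <typename T, std::size_t kPos>
struct FillTypeGetter<T, kPos, true> {
  static constexpr FillType kValue = kUnknown;
};

// Check entry kPos, otherwise continue with entry kPos + 1.
template <typename T, std::size_t kPos>
struct FillTypeGetter<T, kPos, false> {
  using Pair = typename std::tuple_element<kPos, RegistryClasses>::type;
  static constexpr bool kMatch =
      std::is_base_of<typename Pair::Type, T>::value;
  static constexpr FillType kValue =
      kMatch ? Pair::kFillType
             : FillTypeGetter<T, kPos + 1, kPos + 1 == kNumClasses>::kValue;
};

template <typename T>
constexpr FillType FillTypeOf() {
  return FillTypeGetter<T, 0, kNumClasses == 0>::kValue;
}

// Usage: classes are classified by their base class at compile time.
struct MyOp : OperatorBase {};
struct MyGradMaker : GradOpDescMakerBase {};
struct Unrelated {};
```

The recursion is resolved entirely at compile time, so the lookup adds no runtime cost.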

    if (ctx->HasOutput(framework::GradVarName("X"))) {
      auto out_dims = ctx->GetInputDim(framework::GradVarName("Out"));
      ctx->SetOutputDim(framework::GradVarName("X"), out_dims);
    }


The code above may be confusing: if ctx->HasOutput(framework::GradVarName("X")) is false, there is no need to call AddPositionEncodingOpGrad at all.


I am confused by this code too. I just followed the original logic.

         ++iter) {
      bool ok;
      auto result =
          ExtractComputationOpFromLastLivedVar(*iter, i, shrink_func, &ok);


Actually, I am a little confused about the ExtractComputationOpFromLastLivedVar function. I know it is meant to find the last op that uses this variable, but for reference counting, do we need to care about which op generates the variable? What would be the impact if we only considered the ops that use the variable as an input?


ExtractComputationOpFromLastLivedVar returns (1) the last ops that read this variable, if any read ops exist, or (2) the last op that writes this variable, if no read op exists. See HERE for details.
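The rule in this reply can be sketched on a simplified model. The code below is not the PR's implementation: it assumes a linear op sequence instead of a graph, assumes each variable name has a single version that is written before it is read, and uses hypothetical names (`OpDesc`, `LastLivedOpIndex`). It also shows why the writer matters: a variable that is written but never read would otherwise never be released.

```cpp
#include <string>
#include <vector>

// Hypothetical, simplified op record: the names it reads and writes.
struct OpDesc {
  std::string type;
  std::vector<std::string> reads;
  std::vector<std::string> writes;
};

static bool Contains(const std::vector<std::string>& names,
                     const std::string& var) {
  for (const auto& name : names) {
    if (name == var) return true;
  }
  return false;
}

// Returns the index of the op after which `var` can be released:
// the last reader if one exists, otherwise the last writer, otherwise -1.
int LastLivedOpIndex(const std::vector<OpDesc>& ops, const std::string& var) {
  int last_reader = -1;
  int last_writer = -1;
  for (int i = 0; i < static_cast<int>(ops.size()); ++i) {
    if (Contains(ops[i].reads, var)) last_reader = i;
    if (Contains(ops[i].writes, var)) last_writer = i;
  }
  return last_reader >= 0 ? last_reader : last_writer;
}
```

In a real graph several ops may read a variable concurrently as its "last" readers, which is presumably why the reply speaks of "the last ops" in the plural for case (1).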

      continue;
    }

    for (auto &out_pair : op_base->Outputs()) {


Do we need to care about outputs here?


Yes, we should care about outputs, because of in-place operations.

For example, gc can collect data buffer of input

Yinelementwise_add_gradop before this op runs. In the meanwhile, shape and lod ofYcan be kept whenelementwise_add_gradop runs.Op developers can use

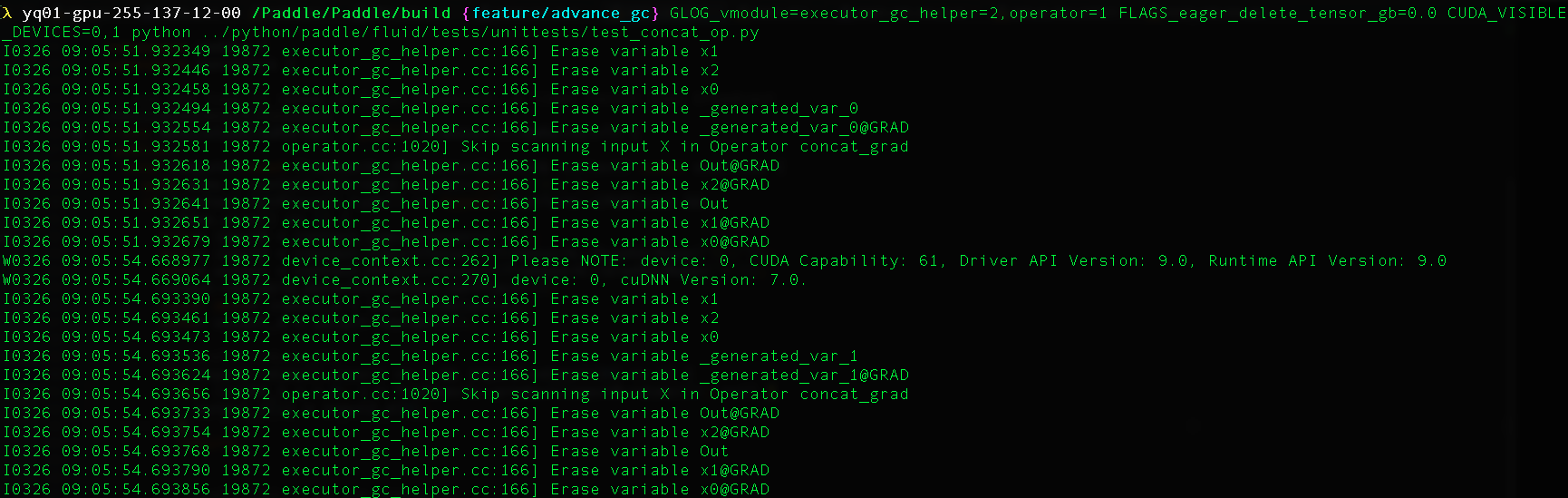

DECLARE_NO_NEED_BUFFER_VARS_INFERENCEto declare a class that indicates the unused-buffer tensors.For example, inputs of concat op

x0,x1,x2can be deleted before concat_grad op runs:
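As a rough illustration of what such a declaration amounts to, the sketch below defines a stand-in macro that generates a class returning a fixed set of slot names. `SKETCH_DECLARE_NO_NEED_BUFFER_VARS` is a hypothetical macro written for this example; it is not the framework's DECLARE_NO_NEED_BUFFER_VARS_INFERENCE, whose real signature and generated class may differ. The slot name `"X"` is likewise an assumption, standing for the slot that holds x0, x1, x2.

```cpp
#include <string>
#include <unordered_set>

// Hypothetical stand-in macro: declares a class whose call operator returns
// the input slots whose data buffers are not read by the op.
#define SKETCH_DECLARE_NO_NEED_BUFFER_VARS(class_type, ...)   \
  class class_type {                                          \
   public:                                                    \
    std::unordered_set<std::string> operator()() const {      \
      return std::unordered_set<std::string>{__VA_ARGS__};    \
    }                                                         \
  }

// concat_grad only needs the shapes of the forward inputs to split the
// output gradient, so their data buffers can be released early.
// "X" is an assumed slot name for x0, x1, x2.
SKETCH_DECLARE_NO_NEED_BUFFER_VARS(ConcatGradNoNeedBufferVars, "X");

// A gc pass could then ask whether a given input slot is safe to free
// before the grad op runs.
bool CanFreeBufferEarly(const std::string& slot) {
  return ConcatGradNoNeedBufferVars()().count(slot) > 0;
}
```

Only the data buffer is released in this scheme; metadata such as shape and lod stays available to the grad op, as described above.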