

Arguments description #325


Changes from 2 commits



@@ -1,5 +1,5 @@

# DLRM

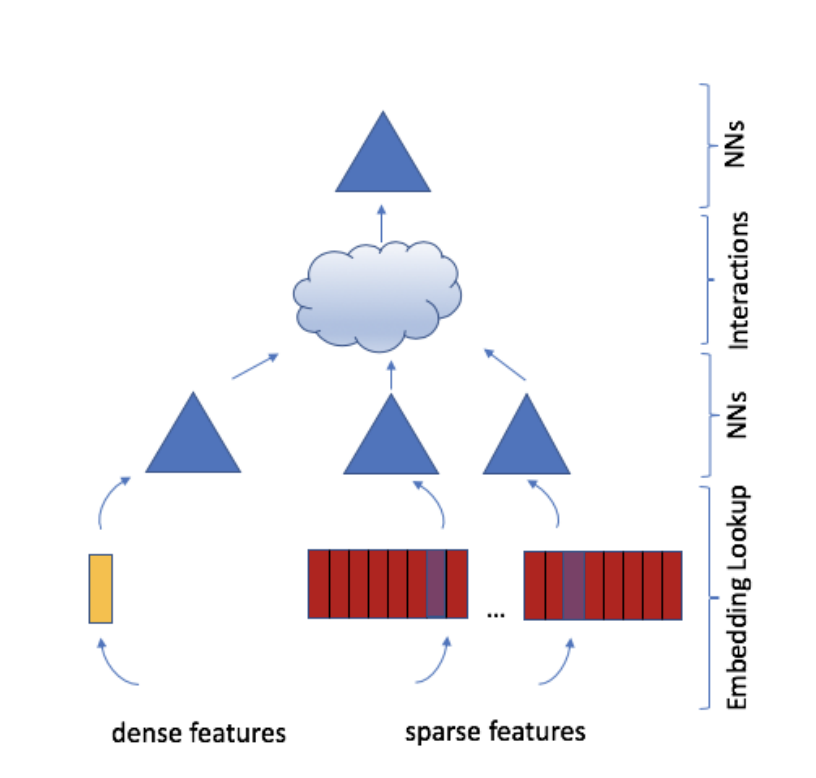

[DLRM](https://arxiv.org/pdf/1906.00091.pdf) is a deep learning-based recommendation model that uses categorical features for click-through rate (CTR) prediction. Its model structure is shown in the figure. Based on this structure, this project uses the OneFlow distributed deep learning framework to train the model on the Criteo dataset in both graph mode and eager mode.

[DLRM](https://arxiv.org/pdf/1906.00091.pdf) is a deep learning-based recommendation model that uses categorical features for click-through rate (CTR) prediction. Its model structure is shown in the figure. Based on this structure, this project uses the OneFlow distributed deep learning framework to train the model in graph mode on the Criteo dataset.

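A central step of the DLRM structure is its feature interaction: the bottom-MLP output and each categorical feature's embedding vector are combined by pairwise dot products, and the results are concatenated with the bottom-MLP output before entering the top MLP. The pure-Python sketch below illustrates that step on plain lists. It is not OneFlow's implementation, and the function names `dot` and `dot_interaction` are hypothetical. The `interaction_itself` switch is modeled on the argument of the same name in the arguments table, under the assumption that it adds each feature's dot product with itself.

```python
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def dot_interaction(dense_out: Vector, embeddings: List[Vector],
                    interaction_itself: bool = False) -> Vector:
    """Pairwise dot-product interaction in the style of DLRM (sketch only).

    dense_out is the bottom-MLP output and each entry of embeddings is one
    categorical feature's embedding vector; all must have the same length.
    Returns dense_out followed by the lower-triangular pairwise dot products,
    i.e. the vector that would be fed to the top MLP.
    """
    if any(len(e) != len(dense_out) for e in embeddings):
        raise ValueError("all vectors must have the same length")
    features = [dense_out] + embeddings
    pairs: Vector = []
    for i in range(len(features)):
        # j < i keeps only distinct pairs; j <= i also adds each feature's
        # dot product with itself (the assumed interaction_itself behavior).
        stop = i + 1 if interaction_itself else i
        for j in range(stop):
            pairs.append(dot(features[i], features[j]))
    return dense_out + pairs


dense = [1.0, 0.0]               # toy bottom-MLP output
embs = [[0.0, 2.0], [1.0, 1.0]]  # two toy embedding vectors
print(dot_interaction(dense, embs))
print(dot_interaction(dense, embs, interaction_itself=True))
```

With a 2-dimensional bottom-MLP output and two embeddings there are three features, so the output holds 2 + 3 = 5 values without self-interaction and 2 + 6 = 8 with it.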

## Directory description


@@ -14,16 +14,41 @@

## Arguments description

|Argument Name|Argument Explanation|Default Value|
|-----|---|------|
|batch_size|batch size of one training step|16384|
|data_dir|data file directory|None|
|use_fusedmlp|whether to use the fused MLP||
|embedding_vec_size|size of each embedding vector|128|
|bottom_mlp|layer sizes of the bottom MLP|512,256,128|
|top_mlp|layer sizes of the top MLP|1024,1024,512,256|
|disable_interaction_padding|whether to disable padding of the interaction output||
|interaction_itself|whether each feature also interacts with itself||
|model_load_dir|directory to load the model from||
|model_save_dir|directory to save the model to|./checkpoint|
|save_initial_model|whether to save the initial model parameters||
|save_model_after_each_eval|whether to save the model after each evaluation||
|not_eval_after_training|skip evaluation after training||
|data_dir|data file directory|/dataset/dlrm_parquet|
|eval_batchs|number of evaluation batches: <0 evaluates the whole validation set, 0 skips evaluation, >0 evaluates that many batches|-1|
|eval_batch_size|batch size for evaluation|55296|
|eval_batch_size_per_proc|evaluation batch size per process|None|
|eval_interval|number of training batches between evaluations|10000|
|batch_size|batch size of one training step|55296|
|batch_size_per_proc|training batch size per process|None|
|learning_rate|learning rate|24|
|warmup_batches|number of learning-rate warmup batches|2750|
|decay_batches|number of batches over which the learning rate decays|27772|
|decay_start|batch at which learning-rate decay starts|49315|
|max_iter|maximum number of training batches|75000|
|loss_print_every_n_iter|print the training loss and validate the model every this many training batches|100|
|column_size_array|size of each categorical column||
|persistent_path|path of the persistent key-value store||
|store_type|type of the embedding key-value store||
|device_memory_budget_mb_per_rank|device memory budget per rank, in MB|8192|
|use_fp16|run the model with automatic mixed precision (AMP)||
|loss_scale_policy|loss-scaling policy for mixed precision: `static` or `dynamic`|static|
|model_load_dir|directory to load the model from|None|
|model_save_dir|directory to save the model to|None|
|loss_print_interval|print the training loss and validate the model every this many training batches|1000|
|save_initial_model|whether to save the initial model parameters|False|

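The `learning_rate`, `warmup_batches`, `decay_start` and `decay_batches` arguments together describe a learning-rate schedule. The sketch below shows one way to compute the rate for a given training batch, assuming a linear warmup, then a constant rate until `decay_start`, then a polynomial decay to zero over `decay_batches` batches. The decay shape, the function `lr_at`, and its `power` and `end_lr` parameters are hypothetical; the project's actual scheduler may differ.

```python
def lr_at(step: int,
          learning_rate: float = 24.0,
          warmup_batches: int = 2750,
          decay_start: int = 49315,
          decay_batches: int = 27772,
          power: float = 2.0,     # hypothetical decay exponent
          end_lr: float = 0.0     # hypothetical final learning rate
          ) -> float:
    """Learning rate for a 0-based training batch index (sketch only)."""
    if warmup_batches > 0 and step < warmup_batches:
        # Linear warmup from 0 toward learning_rate.
        return learning_rate * step / warmup_batches
    if step < decay_start:
        # Constant phase between warmup and the start of decay.
        return learning_rate
    if step < decay_start + decay_batches:
        # Polynomial decay: the remaining fraction shrinks from 1 toward 0.
        remaining = 1.0 - (step - decay_start) / decay_batches
        return end_lr + (learning_rate - end_lr) * remaining ** power
    # After the decay window the rate stays at end_lr.
    return end_lr


for step in (0, 1375, 2750, 49315, 63201, 80000):
    print(step, lr_at(step))
```

With the table's defaults, this sketch rises linearly to 24 over the first 2750 batches, holds 24 until batch 49315, and is down to 6.0 halfway through the decay window (batch 63201).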

- [ ] TODO: other parameters

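For orientation, the later rows of the arguments table could be declared with Python's standard `argparse` module roughly as follows. This is a hypothetical sketch, not the project's actual training script: the flag names and defaults come from the table (where a name appears twice, the later row's default is used), while the types and the `store_true` handling of the boolean switches are assumptions. `num_eval_batches` is a hypothetical helper that spells out the documented meaning of `eval_batchs`.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    # Hypothetical parser: names and defaults follow the README table,
    # types and boolean handling are assumptions.
    p = argparse.ArgumentParser(description="DLRM training arguments (sketch)")
    p.add_argument("--data_dir", type=str, default="/dataset/dlrm_parquet")
    p.add_argument("--eval_batchs", type=int, default=-1)
    p.add_argument("--eval_batch_size", type=int, default=55296)
    p.add_argument("--eval_batch_size_per_proc", type=int, default=None)
    p.add_argument("--eval_interval", type=int, default=10000)
    p.add_argument("--batch_size", type=int, default=55296)
    p.add_argument("--batch_size_per_proc", type=int, default=None)
    p.add_argument("--learning_rate", type=float, default=24.0)
    p.add_argument("--warmup_batches", type=int, default=2750)
    p.add_argument("--decay_batches", type=int, default=27772)
    p.add_argument("--decay_start", type=int, default=49315)
    p.add_argument("--max_iter", type=int, default=75000)
    p.add_argument("--device_memory_budget_mb_per_rank", type=int, default=8192)
    p.add_argument("--use_fp16", action="store_true")
    p.add_argument("--loss_scale_policy", choices=["static", "dynamic"],
                   default="static")
    p.add_argument("--model_load_dir", type=str, default=None)
    p.add_argument("--model_save_dir", type=str, default=None)
    p.add_argument("--loss_print_interval", type=int, default=1000)
    p.add_argument("--save_initial_model", action="store_true")
    return p


def num_eval_batches(eval_batchs: int, total_val_batches: int) -> int:
    """Hypothetical helper: <0 whole validation set, 0 none, >0 that many."""
    if eval_batchs < 0:
        return total_val_batches
    # Capping at the validation-set size is an assumption of this sketch.
    return min(eval_batchs, total_val_batches)


args = build_parser().parse_args(["--batch_size", "16384", "--use_fp16"])
print(args.batch_size, args.use_fp16, args.loss_scale_policy)
```

Here `parse_args(["--batch_size", "16384", "--use_fp16"])` overrides the batch size and enables mixed precision while every other argument keeps its table default.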

## Preparing to run

### Environment


remove this