Merged

Conversation

HiHippie

commented

Jun 27, 2022

Ldpe2G

reviewed

Jun 28, 2022

HiHippie

commented

Jun 28, 2022

tests/test_scheduler.py

Outdated

```diff
 def _get_exponential_lr(base_lr, gamma, max_iters, warmup_iters):
     valid_values = []
-    for idx in range(max_iters - warmup_iters):
+    for idx in range(warmup_iters, max_iters+1):
```

Contributor

Author

Collaborator

I think this is correct: it collects the lr values between warmup_iters and max_iter.
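For reference, a minimal sketch of what the range change does. The helper names below are hypothetical, and the per-iteration formula `base_lr * gamma ** idx` is an assumption about how the expected exponential values are computed; only the two `range(...)` expressions come from the diff.

```python
def old_indices(max_iters, warmup_iters):
    # Original loop: starts at 0 and stops before max_iters - warmup_iters
    return list(range(max_iters - warmup_iters))


def new_indices(max_iters, warmup_iters):
    # Fixed loop: starts at warmup_iters and includes max_iters itself
    return list(range(warmup_iters, max_iters + 1))


def expected_exponential_lrs(base_lr, gamma, max_iters, warmup_iters):
    # Assumption (hypothetical): the lr at iteration idx is base_lr * gamma ** idx
    return [base_lr * gamma ** idx for idx in new_indices(max_iters, warmup_iters)]


if __name__ == "__main__":
    print(old_indices(10, 2))  # [0, 1, 2, 3, 4, 5, 6, 7]
    print(new_indices(10, 2))  # [2, 3, 4, 5, 6, 7, 8, 9, 10]
    print(expected_exponential_lrs(1.0, 0.5, 4, 2))  # [0.25, 0.125, 0.0625]
```

The old loop counts from 0, so its indices are not aligned with the post-warmup iterations and it never reaches max_iters; the new loop covers warmup_iters through max_iters inclusive.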

HiHippie

commented

Jun 28, 2022

tests/test_scheduler.py

Outdated

```diff
 sched.step()
 lrs.append(opt.param_groups[0]["lr"])
-self.assertTrue(np.allclose(lrs[:5], [0.005, 1.004, 2.003, 3.002, 4.001]))
+self.assertTrue(np.allclose(lrs[:5], [0.005, 0.00401, 0.0030199999999999997, 0.00203, 0.0010399999999999997]))
```

Contributor

Author

This assertion fails because the lr here decreases during warmup.

Collaborator

The lr decreases during warmup? Is that expected behavior?

Contributor

Author

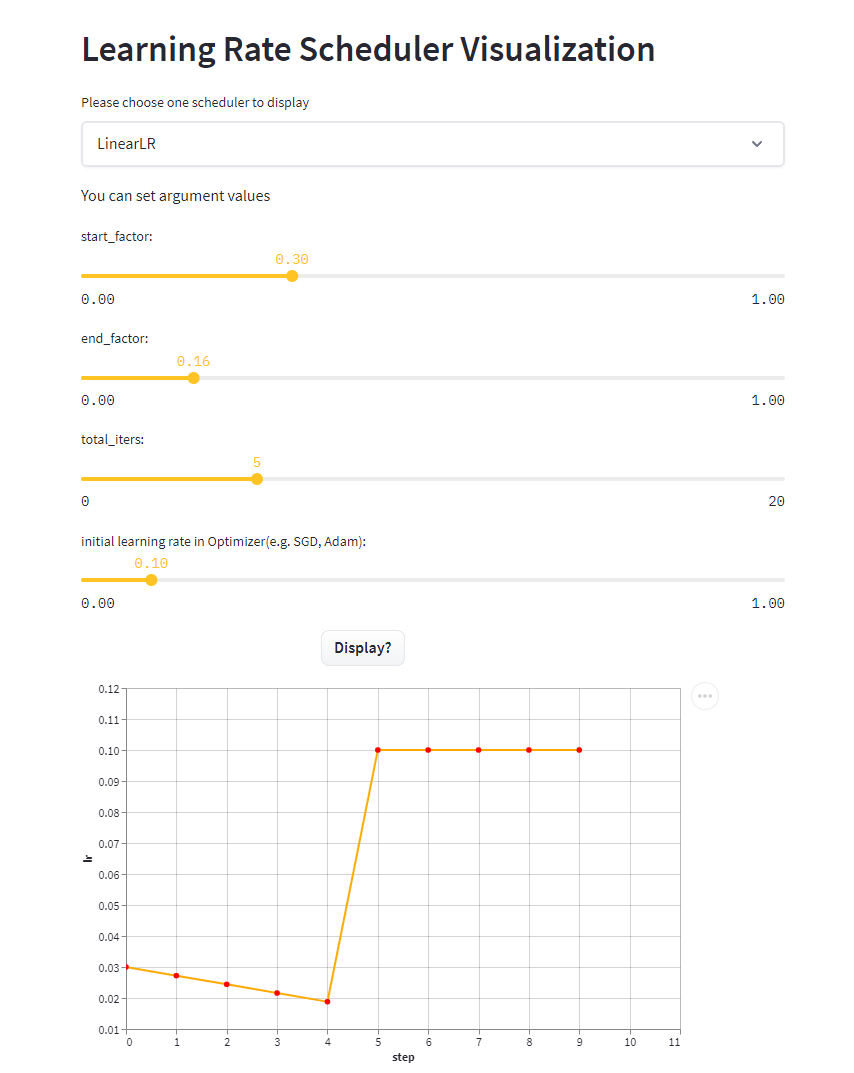

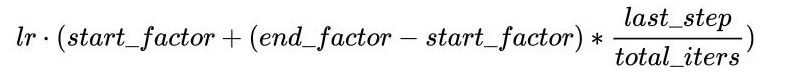

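It should be expected behavior: when end_factor < start_factor, the lr decreases.

This code computes end_factor from the lr that test_warmup_exponential had already updated, which makes end_factor smaller.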
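Both expected lists in the assertion are consistent with a standard linear warmup that interpolates a multiplicative factor from `start_factor` to `end_factor`. The sketch below assumes that closed form. The values `base_lr=5.0`, `start_factor=0.001`, `end_factor=1.0` and `end_factor=0.00001` are hypothetical, picked only because they reproduce the numbers in the diff; they are not taken from the test's configuration.

```python
def linear_warmup_lrs(base_lr, start_factor, end_factor, total_iters, num_steps):
    """Assumed closed form of linear warmup: the lr factor moves linearly
    from start_factor to end_factor over total_iters steps, then stays put."""
    lrs = []
    for step in range(num_steps):
        t = min(step, total_iters)
        factor = start_factor + (end_factor - start_factor) * t / total_iters
        lrs.append(base_lr * factor)
    return lrs


# Hypothetical parameters (see lead-in); only the output lists come from the PR.
rising = linear_warmup_lrs(5.0, 0.001, 1.0, total_iters=5, num_steps=5)
falling = linear_warmup_lrs(5.0, 0.001, 0.00001, total_iters=5, num_steps=5)

if __name__ == "__main__":
    print(rising)   # approx. [0.005, 1.004, 2.003, 3.002, 4.001]
    print(falling)  # approx. [0.005, 0.00401, 0.00302, 0.00203, 0.00104]
```

With end_factor smaller than start_factor, the same interpolation yields a decreasing "warmup", which matches the behavior described in the comment.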

Ldpe2G

approved these changes

Jun 29, 2022

CPFLAME

approved these changes

Jun 29, 2022

HiHippie

commented

Jun 29, 2022

libai/scheduler/lr_scheduler.py

Outdated

```diff
 """
 polynomial_lr = flow.optim.lr_scheduler.PolynomialLR(
-    optimizer, steps=max_iter, end_learning_rate=end_learning_rate, power=power, cycle=cycle
+    optimizer, decay_batch=max_iter, end_learning_rate=end_learning_rate, power=power, cycle=cycle
```

Contributor

Author

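Because my local oneflow version was not the latest, the tests passed locally, but when preparing to merge I found that the keyword argument here had changed.

See Oneflow-Inc/models#349 for reference.

So I made the corresponding change here.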
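If the code ever had to run against both older and newer oneflow releases, one option would be to pick the keyword at call time. The helper `build_polynomial_lr` below is hypothetical and is not part of LiBai or oneflow. It uses `inspect.signature` to check whether the scheduler class accepts `decay_batch` (the keyword after this change) or `steps` (the keyword before it). The stand-in classes only mimic those two signatures so the sketch runs without oneflow installed.

```python
import inspect


def build_polynomial_lr(scheduler_cls, optimizer, max_iter, **kwargs):
    """Hypothetical helper: pass max_iter under whichever keyword
    scheduler_cls accepts ('decay_batch' in newer oneflow, 'steps' in older)."""
    params = inspect.signature(scheduler_cls).parameters
    if "decay_batch" in params:
        return scheduler_cls(optimizer, decay_batch=max_iter, **kwargs)
    if "steps" in params:
        return scheduler_cls(optimizer, steps=max_iter, **kwargs)
    raise TypeError(f"{scheduler_cls.__name__} accepts neither 'decay_batch' nor 'steps'")


# Stand-ins that only mimic the two signatures; they are not oneflow classes.
class OldPolynomialLR:
    def __init__(self, optimizer, steps, end_learning_rate=0.0001, power=1.0, cycle=False):
        self.total_iters = steps


class NewPolynomialLR:
    def __init__(self, optimizer, decay_batch, end_learning_rate=0.0001, power=1.0, cycle=False):
        self.total_iters = decay_batch


if __name__ == "__main__":
    for cls in (OldPolynomialLR, NewPolynomialLR):
        sched = build_polynomial_lr(cls, None, 100, end_learning_rate=1e-4, power=1.0, cycle=False)
        print(cls.__name__, sched.total_iters)  # both report 100
```

Following the new keyword directly, as this PR does, is simpler when only the latest oneflow needs to be supported; a shim like this only pays off if both versions must keep working.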


This PR adds WarmupStepLR support to LiBai and fixes an error in the test_warmup_exponential test code.

Auto-scaling the config to train.train_iter=100, train.warmup_iter=2 step_size=10
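As a rough illustration of what a warmup-plus-step schedule does with the values in the log line above (`train_iter=100`, `warmup_iter=2`, `step_size=10`), here is a closed-form sketch. It is not LiBai's `WarmupStepLR` implementation: the choice of a linear warmup that scales the step-decay value, and the `base_lr`, `warmup_factor` and `gamma` values, are assumptions.

```python
def warmup_step_lr(it, base_lr, warmup_iter, warmup_factor, step_size, gamma):
    """Assumed closed form: step decay base_lr * gamma ** (it // step_size),
    scaled by a linear warmup factor during the first warmup_iter iterations."""
    lr = base_lr * gamma ** (it // step_size)
    if it < warmup_iter:
        alpha = it / warmup_iter
        # Factor rises linearly from warmup_factor (it=0) toward 1 (it=warmup_iter)
        lr *= warmup_factor * (1 - alpha) + alpha
    return lr


if __name__ == "__main__":
    # train_iter, warmup_iter and step_size come from the log line;
    # base_lr=1.0, warmup_factor=0.001 and gamma=0.1 are hypothetical.
    schedule = [warmup_step_lr(it, 1.0, 2, 0.001, 10, 0.1) for it in range(100)]
    for it in (0, 1, 2, 9, 10, 20, 99):
        print(it, schedule[it])
```

Under these assumptions the lr climbs from 0.001 to 1.0 over the first two iterations and is then multiplied by 0.1 every 10 iterations.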