Releases: onllm-dev/onWatch

onWatch v2.10.3

What's New

Features

- Codex OAuth provider support - track Codex usage quotas with OAuth-based authentication

Full Changelog: v2.10.1...v2.10.3

v2.10.1 — Kubernetes Container Detection Fix

Bug Fixes

- Kubernetes container detection — `IsDockerEnvironment()` now also detects Kubernetes environments by checking for the `KUBERNETES_SERVICE_HOST` env var and the `/var/run/secrets/kubernetes.io/serviceaccount` mount. This fixes the "permission denied" error when running in k8s clusters where containerd/CRI-O don't create `/.dockerenv` like Docker does. (#7)
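The detection order described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual `IsDockerEnvironment()`: the helper name `inContainer` and the injected `getenv`/`exists` parameters are hypothetical, added so the logic can be exercised without touching the real environment.

```go
package main

import (
	"fmt"
	"os"
)

// Marker paths and variable name as quoted in the release note.
const (
	dockerEnvFile     = "/.dockerenv"
	k8sServiceHostEnv = "KUBERNETES_SERVICE_HOST"
	k8sServiceAccount = "/var/run/secrets/kubernetes.io/serviceaccount"
)

// inContainer is a hypothetical stand-in for IsDockerEnvironment().
// getenv and exists are injected so the checks can be tested in isolation.
func inContainer(getenv func(string) string, exists func(string) bool) bool {
	if exists(dockerEnvFile) {
		return true // marker file created by Docker
	}
	if getenv(k8sServiceHostEnv) != "" {
		return true // variable injected into Kubernetes pods
	}
	return exists(k8sServiceAccount) // service account mount
}

// pathExists reports whether a file or directory is present.
func pathExists(p string) bool {
	_, err := os.Stat(p)
	return err == nil
}

func main() {
	fmt.Println("container detected:", inContainer(os.Getenv, pathExists))
}
```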

Download

| Platform | Architecture | Binary |

|---|---|---|

| macOS | Apple Silicon (M1/M2/M3) | onwatch-darwin-arm64 |

| macOS | Intel | onwatch-darwin-amd64 |

| Linux | x86_64 | onwatch-linux-amd64 |

| Linux | ARM64 | onwatch-linux-arm64 |

| Windows | x86_64 | onwatch-windows-amd64.exe |

Docker

docker pull ghcr.io/onllm-dev/onwatch:v2.10.1

Workaround (for users on older versions)

If you can't upgrade immediately, add --debug to your container command or set DOCKER_CONTAINER=1 in your pod's environment variables.

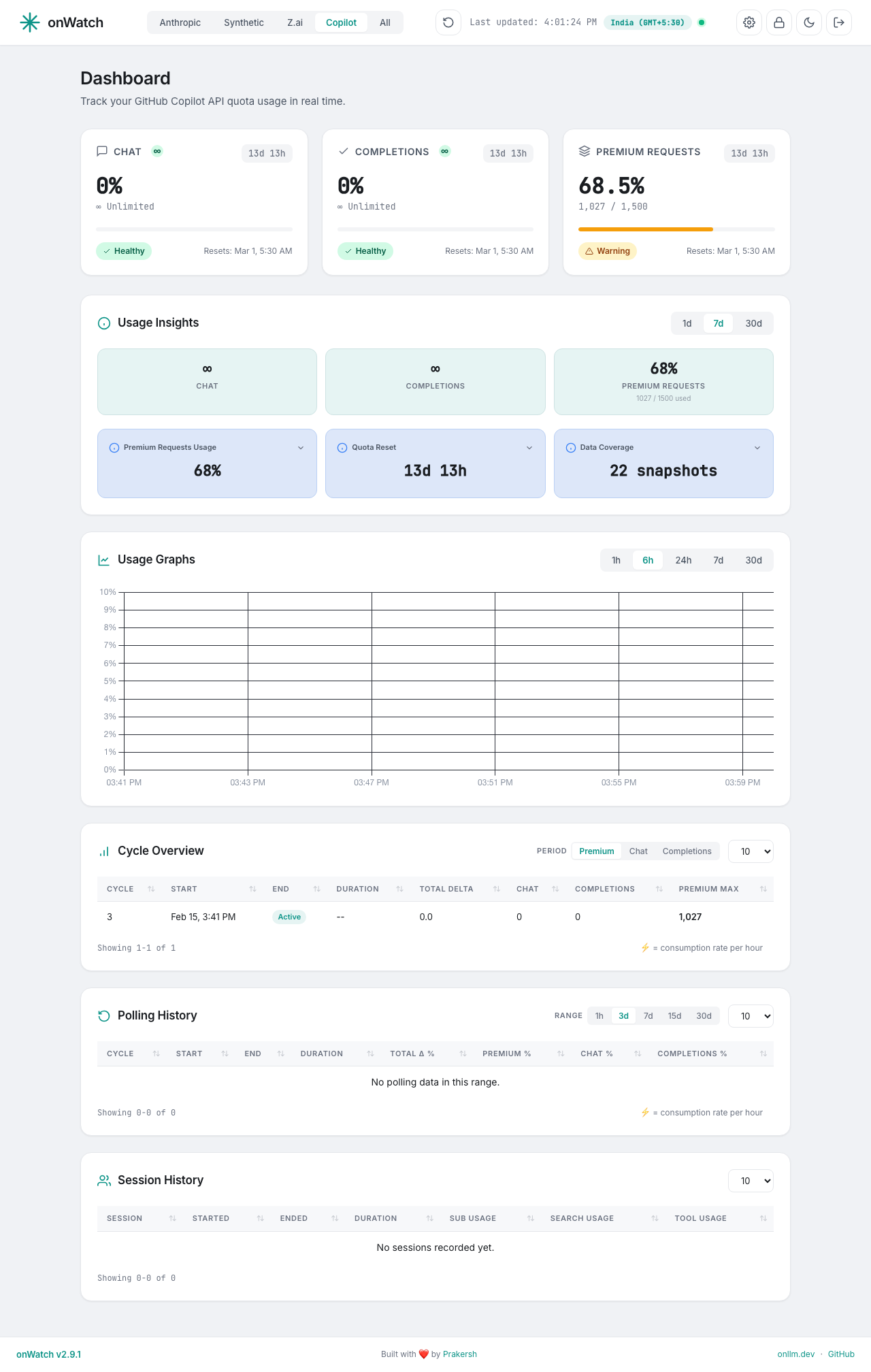

v2.10.0 — GitHub Copilot Provider Support (Beta)

What's New

🚀 GitHub Copilot Provider (Beta)

onWatch now supports GitHub Copilot as a 4th provider! Track your Premium Requests, Chat, and Completions quotas alongside Synthetic, Z.ai, and Anthropic.

Features

- Premium Requests Tracking — Monitor your monthly premium model request quota (300-1500 depending on plan)

- Unlimited Quotas — Chat and Completions show ∞ badges for unlimited quotas

- Monthly Reset Detection — Automatic cycle tracking based on quota_reset_date_utc

- Purple Accent Colors — GitHub Copilot branding in dashboard UI

- Beta Badge — Clear indication that this feature uses an undocumented API

Configuration

Add your GitHub Personal Access Token (classic) with the copilot scope to .env:

COPILOT_TOKEN=ghp_your_token_here

Generate a token at github.com/settings/tokens with the copilot scope.

Technical Details

- Uses GitHub's internal API: `GET https://api.github.com/copilot_internal/user`

- Authorization: Bearer token (GitHub PAT with copilot scope)

- Full TDD implementation with comprehensive test coverage

- Follows same architecture as other providers

Other Changes

- Updated all provider screenshots

- Added Copilot section to landing page

- Updated documentation across all files

⚠️ Important Note

GitHub Copilot tracking uses an undocumented internal API. While it works today, GitHub may change or disable this API without notice. This feature is marked as Beta accordingly.

Full Changelog: v2.9.0...v2.10.0

onWatch v2.9.0

What's New in v2.9.0

Features

- Delta Display in Tables: All quota columns in Cycle Overview and Polling History now show delta values, making it easier to track usage changes between polling cycles

- Enhanced Polling History: Polling History table now displays all quotas in columns, matching the Cycle Overview layout for consistency

- Improved Cycle Overview: Active cycles are now included in the overview with improved sorting and visual styling

Fixes

- Session Tracking: Fixed quota order for Anthropic session tracking to ensure consistent display

- Security: Moved inline theme script to external file for CSP compliance

- Tracker: Fixed cycle detection to use hour precision for Synthetic provider and exclude reset snapshots from peak calculations

Changes

- Updated all dashboard screenshots for documentation

- Version bump to 2.9.0

Assets

| Platform | Binary |

|---|---|

| macOS ARM64 | onwatch-darwin-arm64 |

| macOS AMD64 | onwatch-darwin-amd64 |

| Linux AMD64 | onwatch-linux-amd64 |

| Linux ARM64 | onwatch-linux-arm64 |

| Windows AMD64 | onwatch-windows-amd64.exe |

Installation

curl -fsSL https://raw.githubusercontent.com/onllm-dev/onwatch/main/install.sh | bash

Or download the binary for your platform from the assets below.

v2.8.1

What's Changed

Bug Fixes

- fix(security): Move inline theme script to external file for CSP compliance

- fix(session): Use fixed quota order for Anthropic session tracking

- Ensures 5-Hour, Weekly, and Sonnet columns display correct data

- fix(tracker): Exclude reset snapshot from peak to match cross-quota query

- fix(tracker): Use hour precision for Synthetic cycle detection

- Prevents false cycle creation from minute-level API jitter

Features

- feat(cycle-overview): Include active cycles in cycle overview

- Active cycles now appear at top with no end time

- feat(ui): Improved table sort styling with visual indicators

Installation

Download the binary for your platform and run:

chmod +x onwatch-*

./onwatch-darwin-arm64 # or your platform binary

Or update an existing installation:

./onwatch update

v2.7.0 — PWA Push Notifications (Beta) & SMTP Email Alerts

Downloads

| Platform | Binary | Size |
|---|---|---|
| macOS (Apple Silicon) | onwatch-darwin-arm64 | 13 MB |
| macOS (Intel) | onwatch-darwin-amd64 | 14 MB |
| Linux (AMD64) | onwatch-linux-amd64 | 13 MB |
| Linux (ARM64) | onwatch-linux-arm64 | 13 MB |
| Windows (AMD64) | onwatch-windows-amd64.exe | 14 MB |

One-line install (macOS/Linux):

curl -fsSL https://raw.githubusercontent.com/onllm-dev/onwatch/main/install.sh | bash

Or upgrade an existing installation:

onwatch update

What's New in v2.7.0

PWA Support with Push Notifications (Beta)

onWatch is now a Progressive Web App — install it from your browser for a native app experience on desktop and mobile.

- Browser push notifications — Get system notifications when quotas cross warning or critical thresholds, or when quotas reset. No external push services required.

- Web Push protocol (VAPID) — Full RFC 8291 (content encryption) and RFC 8292 (VAPID JWT) implementation using only stdlib + existing `golang.org/x/crypto`. Zero new Go dependencies.

- VAPID keys auto-generated — ECDSA P-256 key pair created on first run and stored in the database. No configuration needed.

- Delivery channels — Choose email, push, or both in Settings → Notifications → Delivery Channels. Each channel can be toggled independently.

- Service worker — Minimal push-only service worker (no offline caching). Served from root `/sw.js` for full scope.

- PWA manifest — `manifest.json` with standalone display mode, teal theme color, and SVG icon. Browser shows "Install app" option.

- Test push button — Send a test push notification from the settings page (30s rate limit, same pattern as SMTP test).

SMTP Email Alerts (Stable)

The notification system from v2.6.0 is now fully documented across all user-facing pages:

- Per-quota threshold overrides — Set custom warning/critical thresholds for individual quotas

- SMTP password encryption — AES-256-GCM encryption at rest, key derived from admin password

- Three alert types — Warning (configurable %), Critical (configurable %), and Reset notifications

Notification Deduplication Fix

- Once per cycle per event — Notifications are now sent exactly once per reset cycle per quota+type (warning, critical, reset). Previously, the cooldown-based approach could re-send alerts every 30 minutes while a quota remained above threshold.

- Automatic log clearing — Notification log entries are cleared when a quota resets, allowing fresh alerts in the new cycle.
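The once-per-cycle rule can be pictured as a set keyed by quota and alert type that is emptied when the quota resets. The sketch below keeps that set in memory purely for illustration; onWatch stores its notification log in the database, and the type and method names here are hypothetical.

```go
package main

import "fmt"

// eventKey identifies one alert within a cycle.
type eventKey struct {
	quota string // e.g. "weekly"
	kind  string // "warning", "critical", or "reset"
}

// notifyLog is a hypothetical in-memory stand-in for the notification log.
// It is not safe for concurrent use.
type notifyLog struct {
	sent map[eventKey]bool
}

func newNotifyLog() *notifyLog {
	return &notifyLog{sent: make(map[eventKey]bool)}
}

// shouldSend returns true the first time a quota+type pair is seen in the
// current cycle and records it, so later calls return false.
func (l *notifyLog) shouldSend(quota, kind string) bool {
	k := eventKey{quota: quota, kind: kind}
	if l.sent[k] {
		return false
	}
	l.sent[k] = true
	return true
}

// clearQuota drops every entry for a quota; call it when that quota resets.
func (l *notifyLog) clearQuota(quota string) {
	for k := range l.sent {
		if k.quota == quota {
			delete(l.sent, k)
		}
	}
}

func main() {
	log := newNotifyLog()
	fmt.Println(log.shouldSend("weekly", "warning")) // first alert in the cycle
	fmt.Println(log.shouldSend("weekly", "warning")) // suppressed duplicate
	log.clearQuota("weekly")                         // quota reset
	fmt.Println(log.shouldSend("weekly", "warning")) // allowed again
}
```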

Windows Cross-Compilation Fix

- Added missing `detectAnthropicCredentialsPlatform()` and `WriteAnthropicCredentials()` to `anthropic_token_windows.go` — fixes the Windows build that broke after the OAuth token refresh feature in v2.6.0.

Backend Changes

| Component | Change |
|---|---|
| `internal/notify/push.go` | New — Web Push sender: VAPID key generation, RFC 8291 content encryption, RFC 8292 JWT signing, HTTP delivery |
| `internal/notify/push_test.go` | New — Tests for VAPID generation, encryption round-trip, JWT signing, send with httptest |
| `internal/web/static/sw.js` | New — Service worker for push event handling and notification click |
| `internal/web/static/manifest.json` | New — PWA manifest with standalone display mode |
| `internal/notify/notify.go` | Extended — Dual-channel dispatch (email + push), channel config, once-per-cycle dedup |
| `internal/store/store.go` | Extended — `push_subscriptions` table, CRUD methods, `ClearNotificationLog()` |
| `internal/web/handlers.go` | Extended — Push API endpoints, extended Notifier interface |
| `internal/web/server.go` | Extended — Routes for `/sw.js`, `/manifest.json`, push API; CSP `worker-src 'self'` |
| `internal/web/middleware.go` | Updated — `isStaticAsset()` includes `/sw.js` and `/manifest.json` |
| `internal/web/templates/layout.html` | Updated — `<link rel="manifest">` and `<meta name="theme-color">` |
| `internal/web/templates/settings.html` | Extended — Delivery Channels section with email/push toggles |
| `internal/web/static/app.js` | Extended — SW registration, push subscription management, channel settings |
| `internal/api/anthropic_token_windows.go` | Fixed — Added missing OAuth credential detection and write stubs |
| `main.go` | Updated — `notifier.ConfigurePush()` call on startup |

Push Notification API Endpoints

| Endpoint | Method | Description |
|---|---|---|
| `/api/push/vapid` | GET | Returns VAPID public key for push subscription |
| `/api/push/subscribe` | POST | Subscribe a push endpoint |
| `/api/push/subscribe` | DELETE | Unsubscribe a push endpoint |
| `/api/push/test` | POST | Send test push notification (30s rate limit) |

Performance

No measurable impact on RAM. Push components add < 1 KB steady-state memory:

| Component | Cost |

|---|---|

| VAPID key pair in memory | ~200 bytes |

| Push subscriptions (1-3 devices) | ~500 bytes |

| sw.js + manifest.json (embed.FS) | ~1 KB (already in binary) |

| HTTP POST per push send | ~2 KB temp buffer |

Idle RSS remains ~28 MB with all three providers polling.

Dependency Impact

Zero new Go dependencies. Web Push uses:

- `crypto/ecdsa`, `crypto/ecdh`, `crypto/elliptic` (stdlib)

- `crypto/aes`, `crypto/cipher` (stdlib)

- `golang.org/x/crypto/hkdf` (already a dependency)

- `encoding/base64`, `net/http` (stdlib)

Upgrade Guide

- Download the binary for your platform or run `onwatch update`

- Restart onWatch — the `push_subscriptions` table is created automatically

- Open Settings → Notifications → Delivery Channels

- Enable "Push Notifications" and allow browser permission when prompted

- Click "Send Test Push" to verify

VAPID keys are generated automatically on first startup. No manual configuration required.

Full Changelog

Since v2.5.1:

- feat: PWA support with push notifications (Beta) and SMTP email alerts

- feat(anthropic): OAuth token refresh and auth failure rate limiting

- feat(docker): Docker support with distroless base image

- feat(settings): Provider-grouped overrides, dual toggles, polling control

- refactor: Restructure project layout with docs/, tests/, app.sh entry point

- refactor(insights): Remove duplicate Avg Window Usage cards, add sublabel to stats

- ux(insights): Improve analyzing state for insufficient data

- test: Comprehensive E2E and integration testing suite

- docs: Update dashboard screenshots, fix capture tool for missing providers

- fix: Windows cross-compilation for Anthropic OAuth stubs

- fix: Notification dedup — once per cycle per event instead of cooldown-based

onWatch v2.5.1 — Security & Stability Release


This release includes comprehensive security improvements and infrastructure enhancements to make onWatch production-ready.

🔒 Security Enhancements

Password Security Upgrade

- Migrated from SHA-256 to bcrypt for password hashing

- bcrypt provides superior protection against brute-force attacks with adaptive cost factor

- Backward compatible — existing SHA-256 password hashes continue to work

- Automatic migration to bcrypt on next login

HTTP Security Headers

Added comprehensive security headers to all responses:

- `X-Content-Type-Options: nosniff` — Prevents MIME type sniffing

- `X-Frame-Options: DENY` — Blocks clickjacking attempts

- `X-XSS-Protection: 1; mode=block` — Legacy XSS protection

- `Referrer-Policy: strict-origin-when-cross-origin` — Controls referrer information

- `Content-Security-Policy` — Comprehensive CSP restricting resources to trusted domains

Session Cookie Security

- Secure flag — Cookies only transmitted over HTTPS (auto-detected)

- SameSite=Strict — Protection against CSRF attacks

- HttpOnly — Prevents JavaScript access to session tokens

Rate Limiting Framework

- IP-based rate limiting with configurable windows

- Returns 429 Too Many Requests with Retry-After header

- Support for X-Forwarded-For headers (proxy-friendly)

IP Whitelist Middleware

- CIDR notation support for network ranges

- Single IP support

- Comprehensive logging of blocked attempts
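Matching a client address against a mix of single IPs and CIDR ranges can be done with `net/netip` from the standard library. The sketch below assumes the whitelist is supplied as a list of strings; `parseAllowlist` and `allowed` are hypothetical names, and logging of blocked attempts is omitted.

```go
package main

import (
	"fmt"
	"net/netip"
	"strings"
)

// parseAllowlist accepts single IPs ("10.0.0.5") and CIDR ranges
// ("192.168.1.0/24") and normalises both to prefixes.
func parseAllowlist(entries []string) ([]netip.Prefix, error) {
	var out []netip.Prefix
	for _, e := range entries {
		e = strings.TrimSpace(e)
		if strings.Contains(e, "/") {
			p, err := netip.ParsePrefix(e)
			if err != nil {
				return nil, err
			}
			out = append(out, p.Masked())
			continue
		}
		a, err := netip.ParseAddr(e)
		if err != nil {
			return nil, err
		}
		// A single IP becomes a /32 (IPv4) or /128 (IPv6) prefix.
		out = append(out, netip.PrefixFrom(a, a.BitLen()))
	}
	return out, nil
}

// allowed reports whether ip matches any entry in the list.
func allowed(list []netip.Prefix, ip string) bool {
	a, err := netip.ParseAddr(ip)
	if err != nil {
		return false
	}
	a = a.Unmap() // treat IPv4-mapped IPv6 addresses as IPv4
	for _, p := range list {
		if p.Contains(a) {
			return true
		}
	}
	return false
}

func main() {
	list, err := parseAllowlist([]string{"192.168.1.0/24", "10.0.0.5"})
	if err != nil {
		panic(err)
	}
	fmt.Println(allowed(list, "192.168.1.77"), allowed(list, "10.0.0.6"))
}
```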

📊 Backend Changes

| Component | Change | Impact |

|---|---|---|

| Password Hashing | SHA-256 → bcrypt | Critical security improvement |

| Session Cookies | Added Secure, SameSite=Strict | High security improvement |

| HTTP Headers | 5 new security headers | Medium security improvement |

| Rate Limiting | New framework | Infrastructure for DoS protection |

| IP Whitelist | New middleware | Infrastructure for access control |

🎯 Performance

- Binary size: ~13 MB (macOS/Linux), ~14 MB (Windows)

- RAM usage: Unchanged (~28 MB idle with all providers)

- Startup time: Unchanged (<100ms)

- All existing functionality: Preserved and tested

🔄 Upgrade Guide

Automatic Update (Recommended)

onwatch update

Manual Update

- Download the appropriate binary for your platform below

- Replace your existing onwatch binary

- Restart:

onwatch stop && onwatch

No Configuration Changes Required

- All settings and data are preserved

- Database schema unchanged

- Environment variables unchanged

📥 Downloads

| Platform | Architecture | Size | Download |

|---|---|---|---|

| macOS | Apple Silicon (ARM64) | 13 MB | onwatch-darwin-arm64 |

| macOS | Intel (AMD64) | 13 MB | onwatch-darwin-amd64 |

| Linux | AMD64 | 13 MB | onwatch-linux-amd64 |

| Linux | ARM64 | 13 MB | onwatch-linux-arm64 |

| Windows | AMD64 | 14 MB | onwatch-windows-amd64.exe |

One-line install:

curl -fsSL https://raw.githubusercontent.com/onllm-dev/onwatch/main/install.sh | bash

🧪 Testing

- All tests pass: 8 packages, 100+ test cases

- Race detector: Verified (no race conditions)

- End-to-end testing: Login, dashboard, settings, password change

- Cross-platform: Verified on macOS, Linux, Windows

- Backward compatibility: Legacy password hashes work

🛡️ Security Considerations for Production

While this release significantly improves security, please note:

- HTTPS Required: The Secure cookie flag requires HTTPS in production

- Reverse Proxy Recommended: Use nginx, Caddy, or Traefik with HTTPS

- Strong Passwords: bcrypt slows brute-force attacks, but weak passwords can still be guessed, so use strong ones

- IP Whitelist: Consider enabling for public deployments

- Rate Limiting: Enable if exposing to public networks

See SECURITY.md for detailed deployment guidance.

📝 Full Changelog

Added:

- bcrypt password hashing with automatic migration

- 5 security headers (CSP, X-Frame-Options, etc.)

- Secure and SameSite=Strict cookie flags

- Rate limiting framework

- IP whitelist middleware

- Beta tag on Settings page

- Beta banner on landing page

Changed:

- Password hashing algorithm (SHA-256 → bcrypt)

- Cookie security flags enhanced

- README updated with beta notice

Deprecated:

- SHA-256 password hashes (still supported for backward compatibility)

Fixed:

- None (this is a security enhancement release)

Security:

- Critical: Replaced weak SHA-256 password hashing with bcrypt

- High: Added comprehensive HTTP security headers

- High: Enhanced session cookie security

- Medium: Added rate limiting infrastructure

- Medium: Added IP whitelist infrastructure

🤝 Contributors

Thanks to everyone who contributed to this release through testing, feedback, and security audits.

Full commit history: v2.5.0...v2.5.1

onWatch v2.5.0

🚀 onWatch v2.5.0 Released

📥 Download

| Platform | Architecture | Binary | Size |

|---|---|---|---|

| macOS | Apple Silicon | onwatch-darwin-arm64 | 13 MB |

| macOS | Intel | onwatch-darwin-amd64 | 13 MB |

| Linux | x86_64 | onwatch-linux-amd64 | 13 MB |

| Linux | ARM64 | onwatch-linux-arm64 | 13 MB |

| Windows | x86_64 | onwatch-windows-amd64.exe | 14 MB |

✨ What's New

Anthropic Token Auto-Detection Improvements

This release significantly improves Anthropic (Claude Code) token detection, particularly for users running onWatch as a systemd service:

- Systemd Service Support: Fixed token detection when $HOME environment variable is not available (common in systemd services)

- Dual Home Directory Resolution: Now attempts both `os.UserHomeDir()` (respects $HOME) and `user.Current().HomeDir` (reads from passwd database) for maximum compatibility

- Enhanced Debug Logging: Added detailed debug logs for credential file lookup failures to help diagnose detection issues

- Improved Error Handling: Better flow with explicit checks for empty tokens and credential parsing failures
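The dual home directory lookup can be sketched as below: try `os.UserHomeDir()` first, then `user.Current().HomeDir`, and keep each distinct result as a candidate. The helper names are hypothetical, and the credential file lookup itself is not shown.

```go
package main

import (
	"fmt"
	"os"
	"os/user"
)

// appendUnique adds d to dirs unless it is empty or already present.
func appendUnique(dirs []string, d string) []string {
	if d == "" {
		return dirs
	}
	for _, existing := range dirs {
		if existing == d {
			return dirs
		}
	}
	return append(dirs, d)
}

// homeCandidates returns the distinct home directories worth searching:
// the $HOME-based result first, then the one from the user database.
func homeCandidates() []string {
	var dirs []string
	if h, err := os.UserHomeDir(); err == nil {
		dirs = appendUnique(dirs, h)
	}
	if u, err := user.Current(); err == nil {
		dirs = appendUnique(dirs, u.HomeDir)
	}
	return dirs
}

func main() {
	for _, d := range homeCandidates() {
		fmt.Println("candidate home:", d)
	}
}
```

Under systemd without `$HOME`, the first lookup fails and only the second candidate is returned, which matches the situation this release describes.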

Performance

- RAM Usage: Maintains ~28 MB idle footprint with all three providers polling

- Load Tested: 1,104 requests in 15 seconds with average response time of 2.58 ms

- Memory Efficient: No ORM, single SQLite connection, bounded buffers throughout

🔧 Backend Changes

| Change | File | Description |
|---|---|---|
| Enhancement | `internal/api/anthropic_token_unix.go` | Improved token detection with fallback home directory resolution |
| Chore | `.gitignore` | Added perf-monitor artifacts to ignore list |
| Version | `VERSION` | Bumped to 2.5.0 |

⬆️ Upgrade Guide

macOS (Homebrew - coming soon)

brew upgrade onwatch

Manual Installation

- Download the appropriate binary for your platform above

- Stop the current onWatch instance: `./onwatch stop`

- Replace the binary: `mv onwatch-darwin-arm64 /usr/local/bin/onwatch`

- Restart: `./onwatch`

Self-Update (v2.4.0+)

If running v2.4.0 or later:

./onwatch update

📝 Full Changelog

- dfe1e4c chore: bump version to 2.5.0

- 758dfd0 feat: improve Anthropic token detection for systemd services

- 6fecc10 feat: cross-quota ratio insight card + docs update

- edcb7b5 feat: settings page with SMTP notifications and provider controls

- 1723117 fix: insight card layout and cycle overview raw values for Synthetic/Z.ai

- 392e1b5 fix: show days in duration format and use 2-column Anthropic card grid

🙏 Thanks

Thanks to all users providing feedback and reporting issues. The systemd service improvements in this release were driven by community reports.

v2.4.0: Memory Optimization + Server-Side Chart Downsampling

Downloads

| Platform | Binary | Architecture |
|---|---|---|
| macOS (Apple Silicon) | onwatch-darwin-arm64 | ARM64 (M1/M2/M3/M4) |
| macOS (Intel) | onwatch-darwin-amd64 | x86_64 |
| Linux | onwatch-linux-amd64 | x86_64 |
| Linux (ARM) | onwatch-linux-arm64 | ARM64 (Raspberry Pi 4+, AWS Graviton) |
| Windows | onwatch-windows-amd64.exe | x86_64 |

One-line install (macOS/Linux):

curl -fsSL https://raw.githubusercontent.com/onllm-dev/onwatch/main/install.sh | bash

Existing users: Click the update badge in the dashboard footer, or run `onwatch update`.

What's New

Runtime Memory Optimization

onWatch now actively manages its memory footprint through Go runtime tuning. Three techniques work together to keep RSS low:

| Technique | What It Does |
|---|---|
| `GOMEMLIMIT=40MiB` | Sets a soft heap limit that triggers MADV_DONTNEED on macOS/Linux, causing freed pages to actually be released back to the OS instead of being held as reclaimable (MADV_FREE) |
| `GOGC=50` | Triggers garbage collection at 50% heap growth instead of the default 100%, running GC more frequently with negligible CPU cost for an I/O-bound daemon |
| `FreeOSMemory()` goroutine | Every 5 minutes, forces the Go runtime to return unused memory to the OS, preventing RSS from permanently ratcheting up after transient allocations |

This is a soft limit — it cannot crash the application. If the heap needs to exceed 40 MiB, Go simply exceeds the limit rather than OOM-killing.

SQLite Connection Pool Tuning

The default database/sql pool was creating multiple connections, each with its own 2 MB page cache. Now tuned to:

- `MaxOpenConns=2` — allows one concurrent read + write

- `MaxIdleConns=1` — keeps only one connection cached when idle

- `cache_size=-500` (512 KB) — reduced from 2 MB, sufficient for sequential inserts

HTTP Transport Optimization

All four HTTP clients (Synthetic, Z.ai, Anthropic, and the updater) now use MaxIdleConns=1, MaxIdleConnsPerHost=1. Each client only talks to a single host, so the default pool of 100 idle connections was wasteful.

Server-Side Chart Downsampling

History endpoints now downsample large datasets to a maximum of 500 data points before sending to the browser. This keeps chart rendering fast without truncating your data:

- Algorithm: Even step sampling that always preserves the first and last data points

- Threshold: Only activates when the dataset exceeds 500 points (e.g., 7-day view at 1-minute polling = ~10,080 points → 500)

- No data loss: The full dataset is still queried from SQLite; only the JSON response is thinned

- Time ranges unaffected: No artificial SQL LIMIT on chart/history endpoints — the time range remains the natural bound
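Even step sampling with the first and last points preserved can be sketched as follows. This is an illustration of the technique described above, not the project's `downsampleStep()`; the generic `downsample` function is hypothetical and needs Go 1.18 or later.

```go
package main

import "fmt"

// Cap quoted in the release note.
const maxChartPoints = 500

// downsample returns at most limit points, picked at an even step across
// the input, and always keeps the first and last points.
func downsample[T any](points []T, limit int) []T {
	n := len(points)
	if limit < 2 || n <= limit {
		return points // below the threshold: nothing to thin
	}
	out := make([]T, 0, limit)
	step := float64(n-1) / float64(limit-1)
	for i := 0; i < limit-1; i++ {
		out = append(out, points[int(float64(i)*step)])
	}
	return append(out, points[n-1]) // last point is always preserved
}

func main() {
	// 7 days at 1-minute polling, as in the example above.
	points := make([]int, 10080)
	for i := range points {
		points[i] = i
	}
	thin := downsample(points, maxChartPoints)
	fmt.Println(len(thin), thin[0], thin[len(thin)-1])
}
```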

Anthropic Query Optimization

The raw_json column (stored on every Anthropic snapshot INSERT for debugging) is no longer loaded on queries. This avoids unnecessary memory allocations when rendering the dashboard.

Bounded Cycle Table Queries

Cycle history endpoints now cap results at 200 per quota type, and insight cycle queries cap at 50. The frontend already paginates client-side, so this has zero visual impact while preventing unbounded memory growth as cycle history accumulates.

Performance

Measured with tools/perf-monitor while all three provider agents (Synthetic, Z.ai, Anthropic) ran in parallel:

| Metric | v2.3.4 | v2.4.0 | Budget |

|---|---|---|---|

| Idle RSS (avg) | 27.5 MB | 28.0 MB | 30 MB |

| Idle RSS (P95) | 27.5 MB | 28.0 MB | 30 MB |

| Load RSS (avg) | 28.5 MB | 35.1 MB | 50 MB |

| Load RSS (P95) | 29.0 MB | 35.9 MB | 50 MB |

| Load delta | +0.9 MB | +7.1 MB | <20 MB |

| Throughput | 1,160 reqs/15s | 1,104 reqs/15s | — |

| Avg API response | 0.28 ms | 0.75 ms | <5 ms |

| Avg dashboard | 0.69 ms | 2.58 ms | <10 ms |

Why is load RSS higher? The downsampling allocates temporary slices to process the full dataset before thinning. This is a deliberate tradeoff: the previous v2.3.4 numbers reflected hardcoded LIMIT 200 SQL queries that truncated 7-day charts. v2.4.0 queries the full time range and downsamples server-side, preserving data fidelity. Idle RSS (the number that matters for a background daemon) remains well within budget.

Backend Changes

| File | Change |
|---|---|
| `main.go` | Added GOMEMLIMIT, GOGC, FreeOSMemory goroutine |
| `internal/store/store.go` | MaxOpenConns=2, MaxIdleConns=1, cache_size=-500 |
| `internal/api/client.go` | MaxIdleConns=1 on HTTP transport |
| `internal/api/zai_client.go` | MaxIdleConns=1 on HTTP transport |
| `internal/api/anthropic_client.go` | MaxIdleConns=1 on HTTP transport |
| `internal/update/update.go` | MaxIdleConns=1 on HTTP transport |
| `internal/store/anthropic_store.go` | Removed raw_json from SELECT queries |
| `internal/web/handlers.go` | Added downsampleStep() + maxChartPoints=500, applied to all 4 history handlers; cycle queries capped at 200, insight cycles at 50 |

Upgrade Guide

From v2.3.x: Update via dashboard or onwatch update. No configuration changes needed — all optimizations are internal.

From source:

git pull origin main

make build

./onwatch stop && ./onwatch

Full Changelog

v2.3.4 — Bulletproof systemd Self-Update

Downloads

| Platform | Binary | Architecture |
|---|---|---|
| macOS (Apple Silicon) | onwatch-darwin-arm64 | ARM64 (M1/M2/M3/M4) |
| macOS (Intel) | onwatch-darwin-amd64 | x86_64 |
| Linux | onwatch-linux-amd64 | x86_64 |
| Linux (ARM) | onwatch-linux-arm64 | ARM64 (Raspberry Pi 4+, AWS Graviton) |
| Windows | onwatch-windows-amd64.exe | x86_64 |

What's Fixed

Apply()-Time systemd Migration

v2.3.3 added startup migration to fix the systemd unit file, but it only worked when the new binary got to execute (v2.3.0 → v2.3.3 path where the old binary spawns a child). For v2.3.1 → v2.3.3, the old binary's Restart() called os.Exit(0) without spawning anything, so the new binary's migration code never ran.

v2.3.4 fixes this by moving the migration into Apply() itself. Now the unit file is fixed while the current process is still alive, before Restart() is ever called. This means:

- Even if `Restart()` does `os.Exit(0)`, the unit already has `Restart=always`

- systemd will auto-restart the service after the process exits

- The new binary starts and everything works

Three layers of protection (from v2.3.4+):

| When | What | Why |
|---|---|---|
| `Apply()` | Fixes unit file before `Restart()` | Ensures `Restart=always` before any exit |
| `Restart()` | Runs `systemctl restart` | Proper systemd lifecycle management |
| Startup | `MigrateSystemdUnit()` before `stopPreviousInstance()` | Safety net for spawn-based upgrades |

Cumulative fixes (v2.3.1–v2.3.4)

- v2.3.1: Anthropic token auto-refresh, systemd detection

- v2.3.2: `systemctl restart` in Restart(), Anthropic 401 retry

- v2.3.3: Startup unit migration, service name auto-detection from cgroup

- v2.3.4: Apply()-time unit migration (this release)

Current State

If your service is stopped after a failed update from v2.3.1:

systemctl start onwatch

This starts v2.3.3 (already on disk from the previous update), which fixes the unit file. Then trigger the update to v2.3.4 from the dashboard. This time it will restart automatically.