Middleware tracing: add support for streaming responses #302


When tracing a request, New Relic should not finish the transaction while the response is still being lazily evaluated (e.g. streaming). A Rack response body can stream by wiring an Enumerator into `@body`. The server that writes to the client then calls `response.each`, which iterates over each part of the body (parts can be lazily generated). We want to trace this code because DB queries, HTTP requests, or other business logic may run inside this Enumerator.
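To make the failure mode concrete, here is a minimal plain-Ruby sketch with no Rack or New Relic dependency. `NaiveTracer`, `EVENTS`, and `streaming_app` are hypothetical names used only for illustration; the tracer just records when it would start and finish a transaction.

```ruby
# Hypothetical illustration; none of these names come from the New Relic agent.
EVENTS = []

# A Rack-style app whose body is a lazy Enumerator. The block runs only when
# the server iterates the body with #each, after the app has already returned.
streaming_app = lambda do |_env|
  body = Enumerator.new do |yielder|
    2.times do |i|
      EVENTS << "generate chunk #{i}" # stands in for a DB query or HTTP call
      yielder << "chunk #{i}\n"
    end
  end
  [200, { "content-type" => "text/plain" }, body]
end

# A tracer that finishes as soon as the app returns, which is too early for
# lazily generated bodies.
class NaiveTracer
  def initialize(app)
    @app = app
  end

  def call(env)
    EVENTS << "start transaction"
    @app.call(env)
  ensure
    EVENTS << "finish transaction"
  end
end

# Simulate the web server: call the stack, then write each chunk to the client.
_status, _headers, body = NaiveTracer.new(streaming_app).call({})
written = []
body.each { |chunk| written << chunk }
```

After this runs, `EVENTS` records "finish transaction" before either chunk is generated, so any work done inside the Enumerator falls outside the trace.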


|

Hello @sdemjanenko, thanks for the contribution! I'll pull the code down and give it a thorough review. In the meantime, it appears that this change breaks quite a few tests on certain combinations of Ruby and Rails versions. It would be great if you could see what you can do about these failures. I'll also try to make sense of the failures while I'm reviewing these changes. I'll update you here if/when I find what's causing them. Thanks again! |

|

Is each test suite running in parallel? It could be that the next test starts before the previous response has been closed. It's probably safest to wrap this new behavior in a config flag so that it is opt-in. |

|

The test suites each run in their own container. It looks like there are two categories of failure here. The first are caused by a breaking change in sprockets v4.0.0 -- we've dealt with those in a separate branch that will be integrated soon, and we can ignore them for the purposes of this PR.

There are also a bunch of failures we cannot ignore. It looks like this change causes many failures in our unit test suite running in Rails 3.0, 3.1, 3.2, and 4.0. To run these tests yourself, run this command from the main rpm directory:

Beyond that, I'd like to know a bit more about the problem that this PR solves. Can you include a screenshot of a transaction trace without the changes in this PR? Thanks! |

|

@sdemjanenko, it's been a while since I've heard from you. Before we can review or merge this PR, I'd like to understand your use case a little more. Let me know if you'd like us to proceed. Otherwise, I'll close this PR in about two days. Thanks! |

|

Hey Justin, I spent a little time getting a Rails demo app set up for this. The gem I was trying to set up is turbostreamer, but unfortunately it doesn't work with Rails 6, so I need to recreate the demo app as Rails 5 or just Rack.

I am out on volunteer time off this week. Could you give me another week to get you a demo?

The demo will be a unicorn app which streams to a client socket. This streaming will be implemented with HTTP/1.1 chunked transfer encoding. The chunks will be lazy-generated (turbostreamer supports this, or you could render an enumerator). A good example of enumerator rendering is shown in the ReportController in "Stream CSV Files In Rails Because You Can" by Noel Rappin: https://link.medium.com/ZNbrTYgoM1

Thanks in advance


|

This sounds great! And we're happy to keep this PR open as long as you need. We just don't want to keep PRs around that have been forgotten about. Thanks for all the effort you're putting into this! |

This is the proposed patch in github.com/newrelic/newrelic-ruby-agent/pull/302. To run this patch, set `NEWRELIC_PATCH=true` when calling `rails server`.

|

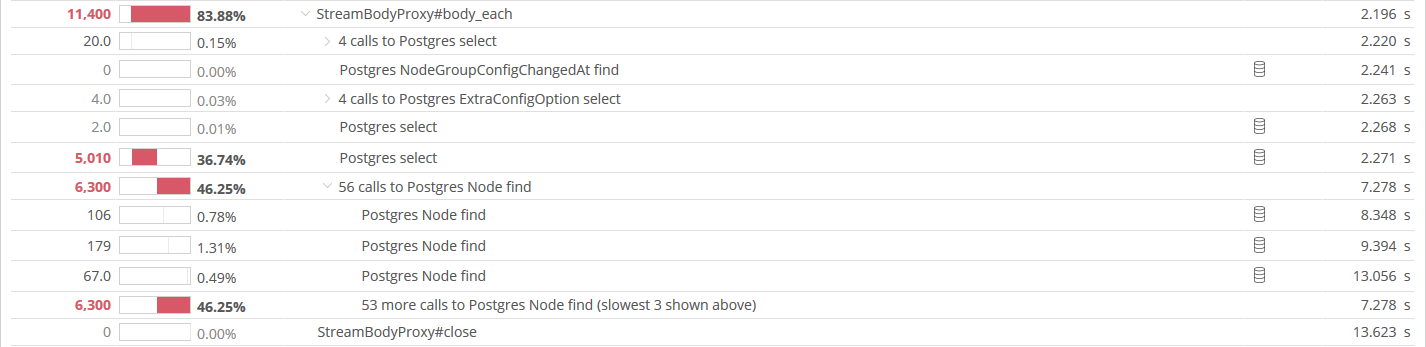

Here is a demo app: https://github.com/sdemjanenko/newrelic_rpm_sample_streaming_app You will need to configure Newrelic to report to your own application. Once that is done if you run Stop rails and restart it with |

|

Hi @sdemjanenko, Thanks for the repro! It really helps us see the before-and-after impact of this work. Very nice. We will resume reviewing your PR and will be in touch about its possible inclusion in an upcoming version of the agent. In the meantime, I encourage you to look into the test failures Justin mentioned:

Again, thanks, and we look forward to continuing the conversation here! |

|

Could I get an overview of how the testcases end up running? Specifically I am looking at |

|

Hi, @sdemjanenko. Upon closer inspection, it looks like the tests in question have to do with some very specific New Relic implementation details, so we will look into those failures ourselves while we evaluate your PR. No action is needed on your part at this time. We'll be in touch. Thanks again for all your work! |

|

As I was looking through the code and tests I noticed that the middleware instrumentation may not get set up (if Does that analysis seem reasonable? |

|

@sdemjanenko, first let me just say I really enjoyed your Medium blog post on the topic of streaming chunks of data with an Enumerator. (Oops! I just re-read that article and realized it was written by Noel We reviewed your submission at length internally and with respect to your question about patching agent_hooks middleware. What we noticed in our review is that the new additions to the new functionality added to This highly suggests that we can separate this new functionality into its own Rack app. If we can successfully separate this new functionality into its own Rack app, then we solve three major concerns at once:

Some initial thoughts on how this may shape up:

These changes would make your PR much more modular and testable while better fitting into the overall design of the New Relic Agent. Additionally, we could introduce a configuration flag to opt-in/opt-out of this level of tracing. Would you be willing to take on this refactoring effort? If so, we are more than happy to guide you through it and support your effort along the way. |
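As a rough sketch of what a standalone, opt-in middleware could look like (this is not the code from the PR or from the agent; `StreamingTracer`, `ClosingBody`, the `enabled:` keyword, and the `on_start`/`on_finish` callbacks are all hypothetical), the idea is to wrap the response body and defer the finish until the server calls `close` on it:

```ruby
# Hypothetical sketch; not the agent's API and not the code from this PR.
TRACE_LOG = []

# Delegates #each to the wrapped body and runs a callback once on #close.
class ClosingBody
  def initialize(body, &on_close)
    @body = body
    @on_close = on_close
    @closed = false
  end

  def each(&block)
    @body.each(&block)
  end

  def close
    return if @closed

    @closed = true
    begin
      @body.close if @body.respond_to?(:close)
    ensure
      @on_close.call
    end
  end
end

# Standalone Rack-style middleware. When disabled it is a pure pass-through.
class StreamingTracer
  def initialize(app, enabled: false, on_start: -> {}, on_finish: -> {})
    @app = app
    @enabled = enabled
    @on_start = on_start
    @on_finish = on_finish
  end

  def call(env)
    return @app.call(env) unless @enabled

    @on_start.call
    begin
      status, headers, body = @app.call(env)
    rescue StandardError
      @on_finish.call # the app raised, so no body will ever be closed
      raise
    end
    [status, headers, ClosingBody.new(body) { @on_finish.call }]
  end
end

# Simulated request: the app streams two lazily generated parts.
app = lambda do |_env|
  parts = Enumerator.new do |yielder|
    2.times do |i|
      TRACE_LOG << "chunk #{i}"
      yielder << "part #{i}"
    end
  end
  [200, {}, parts]
end

stack = StreamingTracer.new(app, enabled: true,
                                 on_start: -> { TRACE_LOG << "start" },
                                 on_finish: -> { TRACE_LOG << "finish" })
_status, _headers, body = stack.call({})
body.each { |part| part } # the server would write each part to the socket
body.close                # servers call #close once they are done with the body
```

With `enabled: true`, `TRACE_LOG` shows both chunks generated between "start" and "finish"; with the default `enabled: false`, the middleware returns the app's response untouched, which is what makes the behavior opt-in.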

|

@mwlang I was thinking the same thing. It definitely seems cleaner to have a clear middleware layer which controls this behavior. I just made a first pass at this middleware: sdemjanenko@28383db One thing to note, it is important that this middleware controls the outer-most segment. In my testing I had noticed strange traces where an inner segment is not marked finished before the outer-most transaction is marked finished. |

|

@sdemjanenko I'm starting to look at the implementation you put together. Regarding your comment about inserting at the top of the middleware stack: would inserting it the same way browser monitoring is inserted, but just before browser monitoring, accomplish the desired processing order? I think the prerequisite here is simply that this new streaming tracer is the first NR middleware encountered in the stack, to ensure the inner segment is closed before the outer transaction. See the inline comments on your commit. |
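The ordering concern can be illustrated with a small plain-Ruby sketch. The names (`Layer`, `WrappedBody`, `CLOSE_ORDER`) are hypothetical, and the code assumes each layer marks itself finished only after the body it wraps has been closed. Under that assumption, the outermost layer in the stack finishes last:

```ruby
# Hypothetical sketch of nested body wrappers; not the agent's implementation.
CLOSE_ORDER = []

# Closes the wrapped body first, then records that this layer has finished.
class WrappedBody
  def initialize(body, name)
    @body = body
    @name = name
  end

  def each(&block)
    @body.each(&block)
  end

  def close
    @body.close if @body.respond_to?(:close)
  ensure
    CLOSE_ORDER << "#{@name} finished"
  end
end

# Rack-style middleware that wraps whatever body the inner app returns.
class Layer
  def initialize(app, name)
    @app = app
    @name = name
  end

  def call(env)
    status, headers, body = @app.call(env)
    [status, headers, WrappedBody.new(body, @name)]
  end
end

app = ->(_env) { [200, {}, ["hello"]] }
# "outer" is first in the stack, so its wrapper is the one the server sees.
stack = Layer.new(Layer.new(app, "inner"), "outer")
_status, _headers, body = stack.call({})
body.each { |part| part }
body.close
```

Here `CLOSE_ORDER` ends up with the inner layer finishing before the outer one. A streaming tracer placed deeper in the stack would mark itself finished while layers outside it were still open, which is consistent with wanting it to be the first New Relic middleware encountered.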

|

@sdemjanenko, it's been a while since I've heard from you. I'd love to hear your thoughts on our last exchange and if we can help with anything. |

|

Hi @sdemjanenko, we wanted to check in with you again on this pull request. We really appreciate the work you've done so far. Can we do anything to help you bring it to completion (i.e. addressing our inline comments on your most recent commit)? If you don't have the time to work on this at the moment, we can also mark it as "help wanted" to encourage contributions from others. Please let us know your preference. Again, thank you so much for all you've done so far, and we're looking forward to hearing from you. |

|

Hello @sdemjanenko |
